Research and Teaching

The Effect of Online Instruction in an Introductory Anatomy and Physiology Course and Implications for Online Laboratory Instruction in Health Field Prerequisites

Journal of College Science Teaching—May/June 2021 (Volume 50, Issue 5)

By Patrick Brown and Jonathan Peterson

Education in science, technology, engineering, and math (STEM) is increasingly delivered online, including the laboratory components of STEM courses. As online laboratory education grows in enrollment and in the variety of course offerings, the central question remains: Is online instruction equivalent to a traditional face-to-face (F2F) lab experience? In this study we conducted a retrospective analysis of student performance in an asynchronous online introductory Anatomy and Physiology course in which about half of the students opted for a traditional F2F lab and the other half for an asynchronous online lab. Although student demographics and level of preparation (incoming GPA) were nearly identical, students enrolled in the F2F laboratory section outperformed their peers on two of the three lecture exams and on both laboratory practical exams. Our data indicate that the type of cognitive task being asked of the student is the main determinant of the efficacy of an online laboratory experience.

Research questions for this study were (1) Do students in a completely online anatomy laboratory acquire content knowledge as well as students in a traditional face-to-face laboratory? and (2) Are there particular topics in which one mode of instruction is superior to the other?

Hands-on laboratory instruction has been, and will likely always be, integral to student learning in science. Several trends within higher education are nevertheless driving some or all of that instruction into a virtual or online environment. Enrollments across higher education in the United States are growing, particularly in public institutions, which are receiving less and less state funding, and a virtual laboratory experience is easier to scale. Additionally, offering a laboratory online frees funding that would otherwise go to equipment, specimens, reagents, and other high-cost essentials of a face-to-face (F2F) laboratory. The central question in this shift of laboratory instruction to a virtual platform is: Is it as effective at producing the kinds of learning that take place in an F2F laboratory class?

To date, the literature on the effectiveness of online or virtual laboratory instruction is quite mixed. One of the first large-scale reviews of online laboratory efficacy found that results varied across the literature, largely because investigators valued, and therefore measured, different outcomes and objectives (Ma & Nickerson, 2006; Cancilla & Albon, 2008). If one’s primary objectives in laboratory education are social or affective, then F2F would have an obvious advantage, whereas if the objectives are primarily knowledge acquisition or conceptual understanding, then a virtual environment, with its theoretically unlimited opportunities for repetition and practice, might have the advantage. Brinson (2017) confirmed that there is no real consensus across the STEM literature. He did, however, add that other confounders, such as demographics, the bias toward undergraduate rather than K–12 education, and the siloed nature of STEM educational publishing (i.e., few cross-disciplinary journals), also contribute to the variety of results in measuring the efficacy of online laboratory instruction (Brinson, 2017). Faulconer and Gruss (2018) contend that objectives (cognitive and affective) must be considered when attempting to measure the effectiveness of an online laboratory experience. They further argue that if student opinions or content knowledge assessments are one’s primary measures, then a well-designed nontraditional format can be effective, but that the needs and goals of both the learner and the institution must be considered (Faulconer & Gruss, 2018).

Indeed, throughout the STEM fields, results appear to be mixed when the efficacy of an online or virtual laboratory experience is compared with that of a traditional F2F one. Several studies in multiple STEM fields found no statistical difference between students who completed a virtual laboratory and those who completed the traditional one (Bloodgood, 2012; Faulconer et al., 2018; Miller et al., 2018). One such study looked not only at performance but also at student attitudes and preferences, and found no statistical difference between the online and F2F environments (Miller et al., 2018). However, other researchers found that one format was indeed better. Gilman (2006), looking at a single biology lab, found that the nontraditional (online) format was actually superior in developing students’ content knowledge. In contrast, Van Nuland and Rogers (2017) found that the F2F environment produced vastly superior outcomes in student content knowledge.

As in many other STEM disciplines, it makes sense to explore virtual options in the Anatomy and Physiology lab, especially in light of the cost of anatomical models, cadavers, and specimens for dissection. The anatomy laboratory in particular is quite different from other laboratories in that its activities are especially intense in spatial reasoning and benefit from opportunities for kinesthetic learning. The human body is a three-dimensional structure, and the anatomy laboratory is largely an exploration of that structure and of the relationships among its various subcomponents. As in the rest of the STEM fields, the literature on the efficacy of virtual anatomy instruction is mixed and confounded by other variables. For example, in a study that compared a traditional lab with a virtual experience called Anatomy TV, students in the F2F hands-on experience outperformed the virtual section in content knowledge acquisition (Mathiowetz et al., 2016). When the authors investigated other variables, they found that students in the F2F experience had significantly more time on task than those in the virtual experience, so the mode of instruction could not be definitively identified as the primary reason for the differences they observed (Mathiowetz et al., 2016). Other investigators found no significant difference in student gains in laboratory content knowledge and found, once again, that factors such as incoming grade point average (GPA) or level of student engagement were more predictive of student success than mode of instruction (Davidson, 2017; Attardi et al., 2018; White et al., 2019). Despite the availability of some very well-developed commercial resources, the F2F format seems to have an edge, especially for the kinds of spatial and kinesthetic learning that need to take place in the anatomy laboratory. In fact, the virtual experience disproportionately disadvantages students who enter the course with low spatial reasoning ability (Van Nuland & Rogers, 2017).

In this study, we look closely at a particular STEM course (Anatomy and Physiology) in which we were able to eliminate most of the confounding variables that made a direct comparison between instructional methods difficult in some prior studies. The implications of our data, however, go beyond our own discipline: the difference in performance between the two modes of instruction has implications for laboratory instruction in general.

Methods

We conducted a retrospective analysis of student academic performance in the first semester of a traditional two-semester introductory Anatomy and Physiology course with lecture and laboratory components (typically taken by pre-allied health majors, e.g., nursing, dental hygiene, radiography, and physical therapy). Students self-enrolled in an asynchronous online Anatomy and Physiology lecture course and elected to enroll in either an F2F or an asynchronous online laboratory. The lecture and laboratory components of the course were all taught by the same instructor, who had more than seven years of experience teaching the course both F2F and online.

All analyses were approved by the East Tennessee State University Institutional Review Board (c0819.17e-ETSU).

Course design

Course delivery, assessment of student learning, and student engagement were managed through the university’s course management software, the Brightspace (D2L) Virtual Learning online learning environment. The course textbook was the open-source Anatomy and Physiology by OpenStax (Openstax College, 2013). Students were not proctored for quizzes and were encouraged to use their notes. Exams were proctored in a certified testing facility, and notes were not allowed.

The lecture component of the course was administered asynchronously online for all students and was divided into three main components, each ending with an exam. The first component included an overview of the anatomical systems, cellular anatomy, and histology; the second included the integumentary, skeletal, and muscular systems; and the third included the nervous and endocrine systems. Each component was subdivided into a series of prerecorded lectures (5–15 minutes each, 39 videos in total), nongraded review questions (for each video), content area review quizzes (12 quizzes total), and interactive discussion activities (12 total). Lecture content was assessed with multiple-choice questions, approximately 80% of which were knowledge-based, 15% comprehension-based, and 5% application-based.

Both the F2F and online laboratory courses were divided into 13 separate modules, as indicated in Table 1, with the first four modules corresponding to the content assessed on the first lecture exam, five modules to the second lecture exam, and three modules to the third lecture exam. Each module included a brief introductory video, a list of terms to identify, and a series of images of anatomical diagrams, anatomical models, and prosected cadavers, taken from multiple angles, on which students were tasked with identifying the corresponding anatomical features. Each lab module also had a corresponding ungraded review activity in which students were asked to identify the indicated anatomical features on a random set of anatomical images. Lastly, each lab module had a corresponding multiple-choice quiz. Laboratory material was assessed with multiple-choice questions, more than 99% of which were knowledge-based and primarily asked students to identify the labeled component of an image or diagram, using the same images that were provided to students to study.

The F2F laboratory session included the same study materials and online modules as the online laboratory but additionally provided access to anatomical models and a prosected cadaver in a traditional F2F format. Additional unsupervised time with the anatomical models and cadaver was available to all students (F2F or online) throughout the semester; however, almost no online lab students were observed to use it.

Student demographic data

Student demographic data were obtained in aggregate from university records.

Student assessment

Specific assessments included three lecture examinations, two laboratory examinations, and 12 content-specific quizzes each for lecture and laboratory (24 quizzes total). All quizzes and examinations were administered through the course management software. The examinations were proctored, whereas the quizzes were not, and students were encouraged to use their textbooks and notes on quizzes.

Student engagement

All students were enrolled in a single online lecture section, and the F2F lab students had access to all of the online material available to the online lab students. Student engagement was assessed for both the lecture and laboratory portions of the course based on the total time spent actively logged into the course website, the number of independent course log-ins, and average quiz grades. Although there may have been slight differences between the F2F and online lab sections, all activities were completed online through the course website; the F2F lab students did the same online activities as the online lab students, only in a room with access to three-dimensional models, bones, and other artifacts.

Statistical analysis

Sex distribution and course completion frequency between the F2F and online courses were compared with the Kolmogorov-Smirnov test. Correlations were assessed with Spearman’s rank correlation coefficient. All remaining data were checked for normality with the D’Agostino and Pearson omnibus normality test. Data that passed the normality test were compared with an unpaired t-test, whereas skewed data were compared with a Mann-Whitney U test.
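The test-selection rule above can be expressed as a short decision function. The sketch below assumes SciPy is installed (`scipy.stats.normaltest` implements the D’Agostino and Pearson omnibus test, and `ttest_ind` and `mannwhitneyu` provide the two comparisons). The 0.05 threshold, the requirement that both groups pass normality before the t-test is used, and the function names are assumptions, since the article does not state them.

```python
# Minimal sketch of the two-group comparison rule, assuming SciPy is installed.
# The 0.05 threshold and the function names are illustrative assumptions.
from scipy import stats

ALPHA = 0.05  # assumed significance level; not stated in the article


def passes_normality(scores, alpha=ALPHA):
    """D'Agostino and Pearson omnibus test via scipy.stats.normaltest.

    Returns True when normality is NOT rejected at the given alpha.
    The test combines skewness and kurtosis; it needs at least 8
    observations, and SciPy warns when there are fewer than 20.
    """
    result = stats.normaltest(scores)
    return bool(result.pvalue >= alpha)


def compare_groups(f2f, online, alpha=ALPHA):
    """Unpaired t-test if both groups pass normality, else Mann-Whitney U."""
    if passes_normality(f2f, alpha) and passes_normality(online, alpha):
        result = stats.ttest_ind(f2f, online)  # Student's t, equal variances assumed
        return "unpaired t-test", result.pvalue
    result = stats.mannwhitneyu(f2f, online, alternative="two-sided")
    return "Mann-Whitney U", result.pvalue
```

For example, a strongly right-skewed group (twenty 1s followed by 2, 2, 3, 50, and 100) fails the normality check, so the comparison falls back to the Mann-Whitney U test regardless of the other group.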

Results

Student demographics

A total of 101 students enrolled in the course: 44 in the F2F lab and 57 in the online lab. Aggregate student demographic data are reported in Table 2. Data from students who did not complete the course or who missed a regularly scheduled exam were excluded from the analysis. In total, 83 students completed the course as scheduled (38 F2F and 45 online). Although not quantified, reasons for not completing the course included schedule changes, medical withdrawals, and excused absences requiring a make-up exam. Students who took a make-up exam were excluded from the analysis because make-up exams differed from the regularly scheduled exams. There was no significant difference between the F2F and online sections in the number of students who did not complete the course. All demographic data were collected in aggregate from the university records office and could not be matched to individual students.
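The completion figures above can be made explicit with a few lines of arithmetic. This minimal Python sketch uses only the enrollment and completion counts stated in the text; it is descriptive only and is not the Kolmogorov-Smirnov comparison reported in the Statistical analysis section. The dictionary and function names are illustrative.

```python
# Enrollment and completion counts as reported in the text.
enrolled = {"F2F": 44, "online": 57}
completed = {"F2F": 38, "online": 45}


def noncompletion(section):
    """Return (students not completing, percentage not completing) for a section."""
    dropped = enrolled[section] - completed[section]
    return dropped, round(100 * dropped / enrolled[section], 1)


for section in enrolled:
    dropped, pct = noncompletion(section)
    print(f"{section}: {dropped} of {enrolled[section]} did not complete ({pct}%)")

print("Total enrolled:", sum(enrolled.values()), "| completed:", sum(completed.values()))
```

Run as written, this reports 6 of 44 F2F students (13.6%) and 12 of 57 online students (21.1%) not completing, with totals of 101 enrolled and 83 completed.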

Overall performance

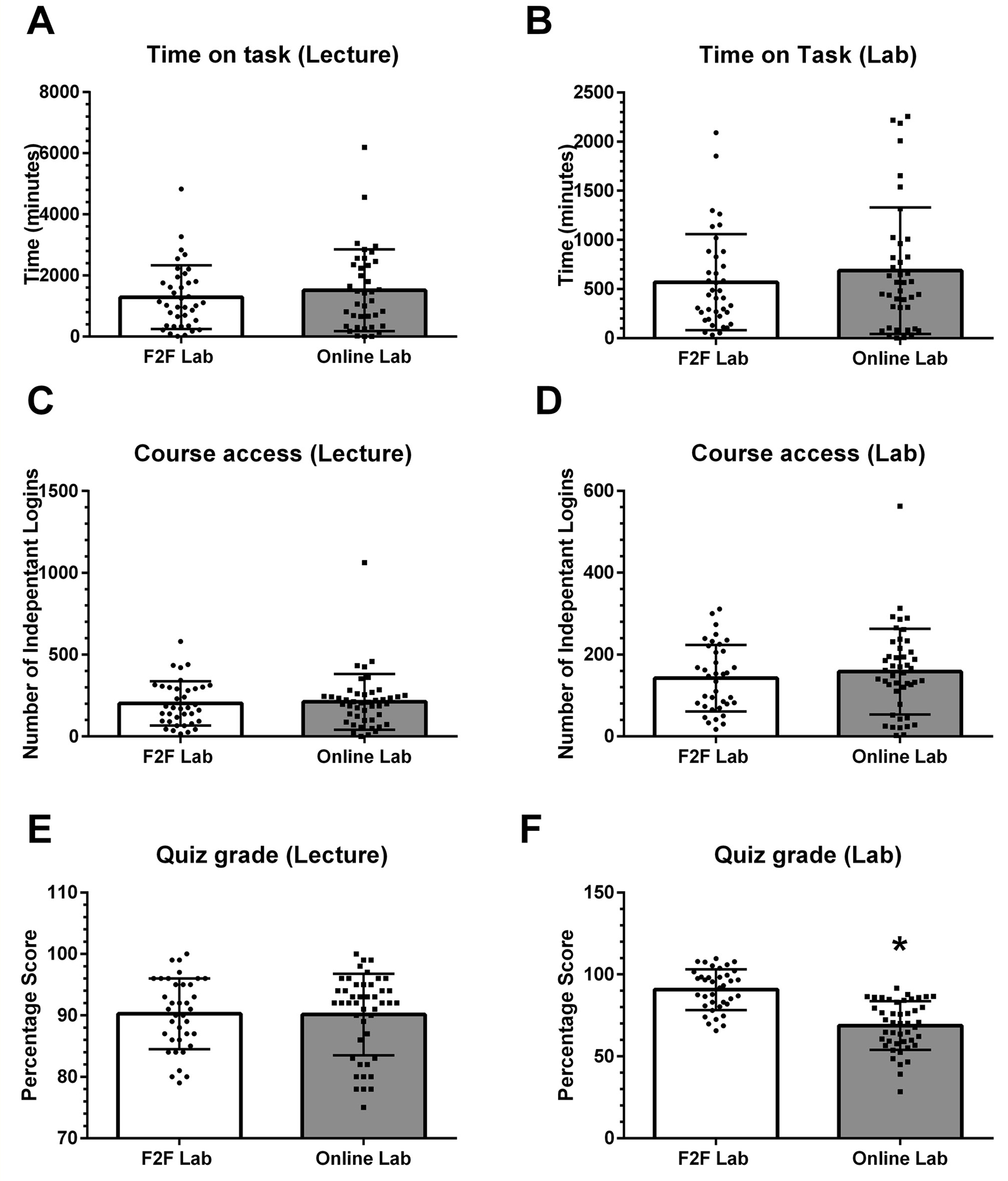

Overall, there was no significant difference in course engagement (as measured by time spent logged into the online activities on the course website) between the online lab students and the F2F lab students for either the lecture or the laboratory material (Figure 1A–D). Further, there was no difference between the two groups in average lecture quiz grades (Figure 1E). However, average laboratory quiz grades were 22 percentage points lower for the online laboratory students than for the F2F laboratory students (68.8 ± 14.9% versus 90.8 ± 12.4%; p = 0.0001; Figure 1F).
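Because the laboratory quiz means are themselves percentages, the gap between them can be read in two ways. The minimal Python sketch below (the function name is hypothetical) computes both the difference in percentage points and the relative decrease with respect to the F2F mean, using only the two means reported in this paragraph.

```python
def score_gap(reference, comparison):
    """Return (difference in percentage points, relative decrease as % of reference)."""
    points = round(reference - comparison, 1)
    relative = round(100 * points / reference, 1)
    return points, relative


# Mean laboratory quiz grades reported above: F2F lab, then online lab.
points, relative = score_gap(90.8, 68.8)
print(f"{points} percentage points lower, about {relative}% below the F2F mean")
```

The 22-point gap corresponds to a relative decrease of about 24% of the F2F mean; the same function applies to the lecture exam means reported for the second and third exams.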

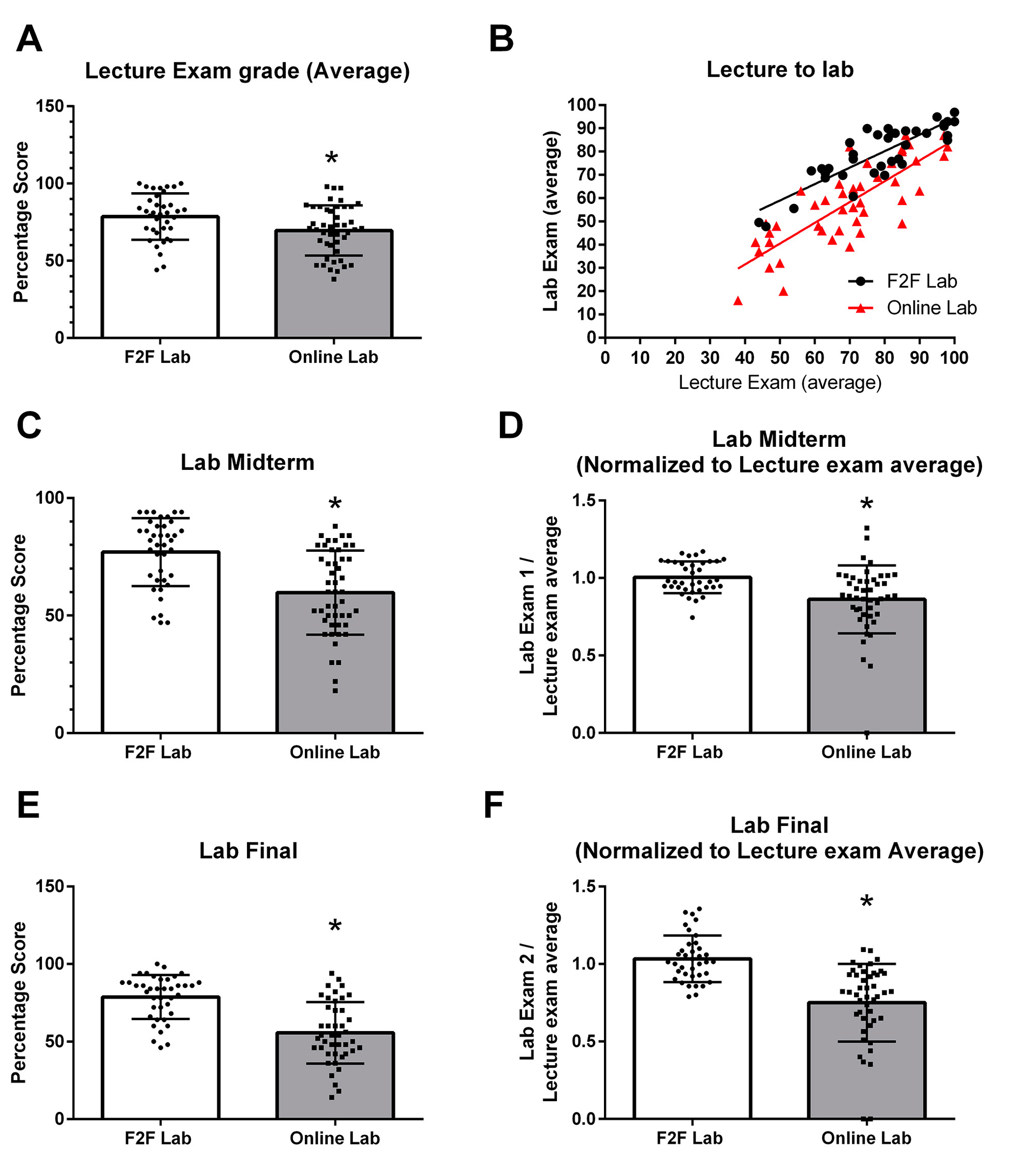

Students enrolled in the F2F laboratory had significantly higher average lecture examination scores than students in the online lab (78.6 ± 15.0% versus 69.6 ± 16.1%; Figure 2a). In both groups there was a strong, significant positive correlation between student performance on the lecture exams and on the laboratory exams (Figure 2b). However, the online laboratory students fared worse on each of the two laboratory exams, and this reduction persisted for each laboratory exam even after the laboratory exam score was normalized to the average lecture exam grade (Figure 2c–f).
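The two analyses in this paragraph can be sketched in plain Python. The article does not specify how the laboratory exam was normalized to the lecture exam grade, so `normalized_lab_score` assumes a simple ratio of the lab exam percentage to the student’s mean lecture exam percentage; that choice and all function names are hypothetical. Spearman’s rho is computed here as the Pearson correlation of average ranks, which handles ties; the p-values reported in the study would come from a statistics package and are not computed in this sketch.

```python
from statistics import mean


def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # positions are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; undefined if either input is constant."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def spearman_rho(x, y):
    """Spearman's rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def normalized_lab_score(lab_exam, lecture_exams):
    """Hypothetical normalization: lab exam % divided by the mean lecture exam %."""
    return lab_exam / mean(lecture_exams)


# Toy values for illustration only, not study data.
print(spearman_rho([55, 70, 82, 91], [60, 66, 85, 88]))  # 1.0 (same rank order)
print(normalized_lab_score(72, [80, 85, 75]))
```

A ratio below 1.0 would indicate that a student scored lower on the lab exam than on the lecture exams on average; other normalizations (e.g., a difference rather than a ratio) are equally plausible readings of the text.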

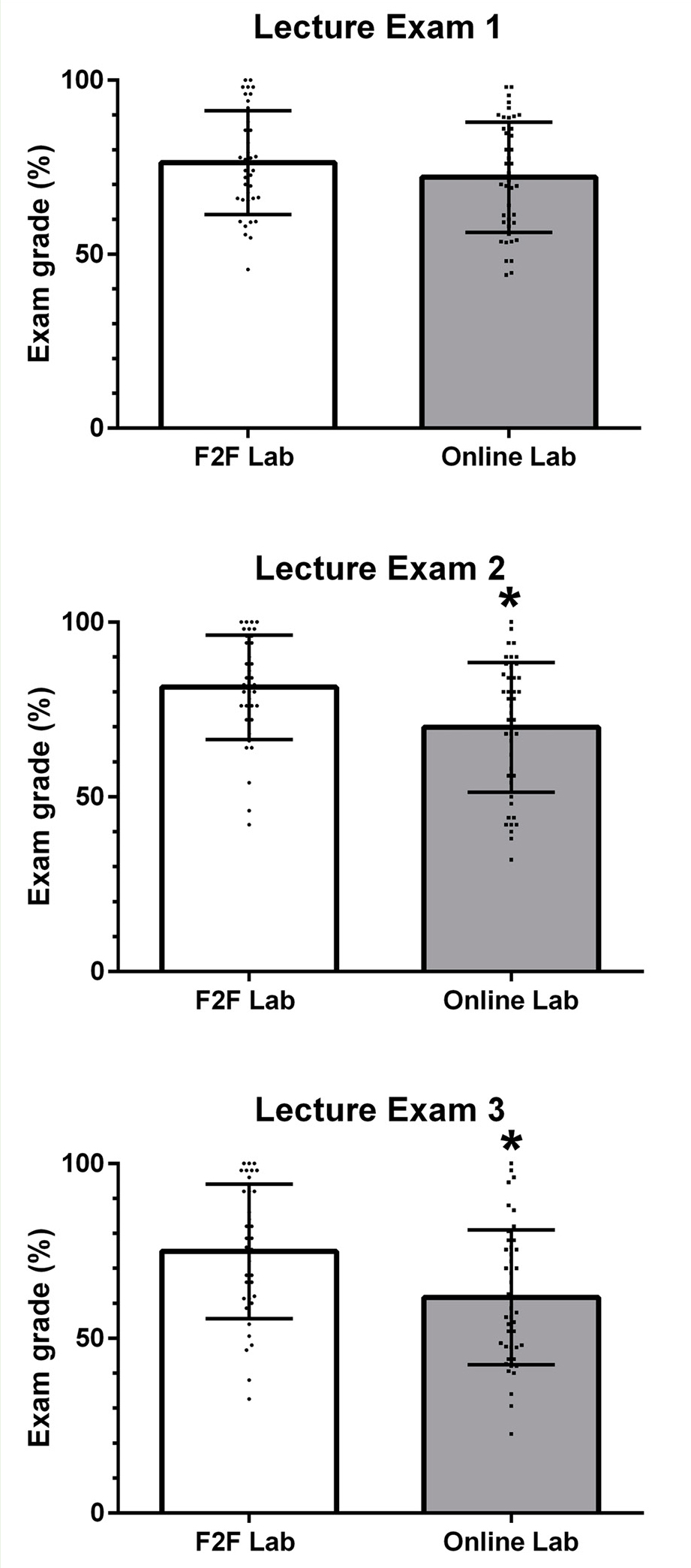

Lecture modules

The three lecture exams each corresponded to a specific area of the course. Interestingly, there was no significant difference between the two groups on the first lecture exam (regional anatomy, cellular anatomy, and histology). However, compared with students in the F2F laboratory version of the course, the online laboratory students scored on average 11 percentage points lower on the second lecture exam (musculoskeletal system; F2F 81 ± 15% versus online 70 ± 19%) and 13 percentage points lower on the third lecture exam (nervous system; F2F 75 ± 19% versus online 62 ± 19%) (Figure 3).

Discussion

It is no surprise to anyone even remotely familiar with higher education in the United States that online instruction continues to grow in enrollment and in the number and diversity of course offerings. Nevertheless, barriers remain for students who wish to complete prerequisites for health-related professional and graduate programs using courses with online laboratories: many undergraduate, professional, and graduate programs across the United States will not accept prerequisite courses that were completed with an online lab component. This uncertainty about the degree to which online laboratories are acceptable to professional programs makes this study particularly timely.

This study was unable to account for one difference in demography: the age of students enrolled. Because this was not a randomized controlled trial, and students were allowed to self-select, the online section did skew older by an average of just over two years. We were also unable to measure student motivation, so we cannot determine if there were significant differences in student motivation for taking the course in general, or for choosing the instructional delivery platform that they chose.

With the exception of average age, the two groups of students (online and F2F lab) were demographically very similar. The general level of preparedness, as measured by incoming GPA (either high school GPA or college GPA to date), also did not differ significantly between the two groups; Attardi et al. (2018) believed this variable to be the primary driver of the differences they observed. Additionally, all students were taught by the same instructor and assessed with identical instruments. Surprisingly, there was also no significant difference between the two sections in time on task in online activities, a key factor in other studies that attempted to directly compare online and F2F laboratory instruction (Mathiowetz et al., 2016). Therefore, this study allows a cleaner comparison of the effect of mode of delivery than studies with multiple confounding variables that make a head-to-head comparison more challenging.

Probably the most striking result of our study is the degree to which the cognitive task involved in a particular set of laboratory modules predicts the discrepancy in content knowledge between students enrolled in the online and F2F lab sections. Our data align well with the conclusions of Van Nuland and Rogers (2017): tasks that do not require a great deal of spatial reasoning, or that are not typically acquired through kinesthetic learning, seem to be learned just as well online as in the F2F environment. Students in this study showed no statistically significant difference in performance on the first lecture exam, which assessed content knowledge of human regional anatomy, basic cellular structure, and histology. The latter topics (cellular structure and histology) are two-dimensional by nature and do not benefit from the kinesthetic learning inherent in a traditional anatomy laboratory. Student learning also did not differ for regional anatomy; although human regional anatomy is three-dimensional, each student has a ready example in real three-dimensional space in their own body.

Only when the content becomes ideally suited to kinesthetic learning and requires a great deal of spatial reasoning do we observe significant divergence between the online and F2F sections. When students were tasked with learning the anatomy of bones, muscles, and the nervous system, those in the F2F section, who had access to physical specimens, significantly outperformed their peers enrolled in the online lab. Notably, all students, regardless of mode of delivery, were assessed on their laboratory content knowledge using two-dimensional representations of the structures in question. Even though students in the online lab learned the content in the same manner in which it was assessed (through two-dimensional images), they were still consistently and significantly outperformed by the students who were introduced to the content through three-dimensional artifacts.

Although our study focused on a particular STEM course, the implications of our data, especially when combined with those of Van Nuland and Rogers (2017), are broader. Given the high degree of variability among professional and graduate programs in health-related fields regarding the acceptability of prerequisites with online labs, more research is certainly warranted. However, we can begin to see that the mode of instruction itself may not be what is detrimental; rather, the cognitive abilities being assessed may better determine whether online instruction is appropriate. Currently, there are simply not enough studies to justify the wholesale inclusion or exclusion of prerequisite courses with an online lab component. More work is needed in other fundamental STEM courses, such as general biology, general chemistry, and microbiology, to determine which laboratory knowledge, skills, and abilities can be acquired effectively in an online environment and which might still be best acquired in a more traditional setting. Additionally, more work is needed to identify improvements for those areas of online laboratory instruction that are currently less effective than their F2F counterparts across the STEM fields.

Conclusion

Although the forces behind online or virtual laboratory instruction continue to push more courses in that direction, the literature across the STEM disciplines is not very convincing one way or the other about the efficacy of online laboratory instruction. Our experience in preparing this study suggests that, for the spatial and kinesthetic tasks needed for a quality anatomy experience, there is no replacement for physically holding a three-dimensional specimen and manipulating it in real space. More work is needed to determine which other cognitive tasks are best learned in a traditional F2F environment and what improvements could be made to online laboratory learning. ■

Patrick Brown (brownpj@etsu.edu) and Jonathan Peterson are associate professors in the Department of Health Sciences at East Tennessee State University in Johnson City, Tennessee.
