Practical Research

Adapting a Popular Technique in College Lecture Halls to K–12 Classrooms

Science Scope—July/August 2020 (Volume 43, Issue 9)

By J. Bryan Henderson

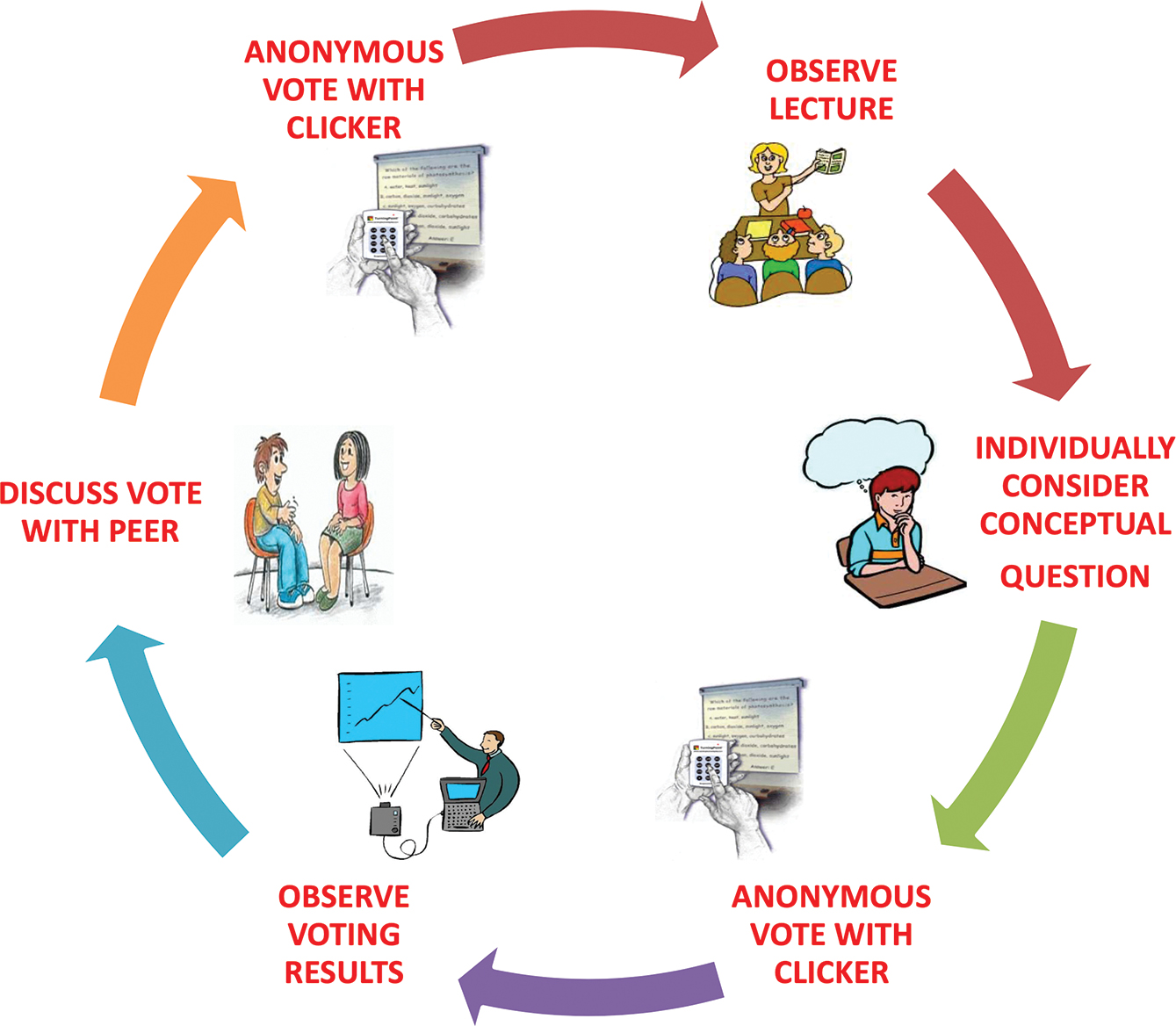

Peer Instruction (PI) (Mazur 1997) is an increasingly popular technique for facilitating student discussion with handheld student-response technology, that is, “clickers.” During PI, students use clickers to vote anonymously on questions that teachers project at the front of the room. PI incorporates these votes into a feedback loop (see Figure 1). Clicker technology lets teachers quickly compile every student’s vote and then project a display of class-wide voting trends. Teachers next ask students to discuss the rationale for their votes with peers. After several minutes of peer discussion, students revote on the same question, with the overarching goal of reaching classroom consensus. If the class does not reach consensus, the PI cycle repeats. Evidence indicates that this PI cycle is associated with statistically significant improvements in conceptual understanding over traditional lecture instruction (Crouch and Mazur 2001; Fagen, Crouch, and Mazur 2002).

Figure 1. The Peer Instruction (PI) cycle. Students first observe instruction on a topic and then individually consider a topic-related question projected at the front of the classroom. Students then vote anonymously for their answer choice with a wireless clicker. The clicker technology tallies the votes of all students and displays them to the instructor in real time. If there is no voting consensus, the instructor asks students to form pairs and discuss the rationale behind their original votes. After peer discussion, students vote anonymously once again, and the cycle repeats until classroom consensus is reached.
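The PI cycle is essentially a loop: vote, tally, check for consensus, discuss, revote. The Python sketch below models that loop for a single question. The 80% consensus threshold, the function names, and the sample votes are all hypothetical; the article does not say how consensus is defined.

```python
from collections import Counter

# Hypothetical: the article does not define "consensus"; 80% is an assumption.
CONSENSUS_THRESHOLD = 0.8


def has_consensus(votes: list[str], threshold: float = CONSENSUS_THRESHOLD) -> bool:
    """True if the most common answer choice reaches the threshold share of votes."""
    if not votes:
        return False
    _, top_count = Counter(votes).most_common(1)[0]
    return top_count / len(votes) >= threshold


def run_pi_cycle(vote_rounds: list[list[str]], threshold: float = CONSENSUS_THRESHOLD) -> int:
    """Step through successive votes on one question.

    vote_rounds[0] is the initial individual vote; each later entry is a revote
    taken after peer discussion. Returns the 1-based vote number at which
    consensus was reached, or -1 if the supplied votes never reach it.
    """
    for round_number, votes in enumerate(vote_rounds, start=1):
        # Tally the anonymous votes and display the class-wide trend.
        print(f"Vote {round_number}: {dict(Counter(votes))}")
        if has_consensus(votes, threshold):
            return round_number
        # No consensus yet: students pair up to discuss their rationales,
        # then revote (the next entry in vote_rounds).
    return -1


if __name__ == "__main__":
    rounds = [
        ["A", "B", "B", "C", "A", "B", "C", "A", "B", "A"],  # initial vote: split
        ["B", "B", "B", "B", "A", "B", "B", "B", "B", "B"],  # revote after discussion
    ]
    print(run_pi_cycle(rounds))  # after the per-vote tallies, prints 2
```

In this hypothetical run, the first vote splits 4–4–2, so students discuss and revote; the second vote reaches 90% agreement, which clears the assumed threshold.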

Despite the reported successes of PI in many college classrooms, studies of PI in K–12 classrooms are rare. Yet exploring the adaptation of PI to K–12 is worthwhile, because the critical and social reasoning skills that PI demands do not develop overnight; years of practice are needed to enculturate these important speaking and listening skills. Of further interest to science educators is evidence that classrooms using the PI cycle can reduce gender gaps that exist prior to instruction (Lorenzo, Crouch, and Mazur 2006). However, the potential benefits of adapting a popular educational technology (clickers) and pedagogy (PI) to K–12 do not come without the inevitable complexities of making a socially driven science classroom effective for younger students.

Hence, this study examined a multi-year implementation of PI in a K–12 setting. It focused on how students spend the time between clicker votes in each PI cycle and, specifically, on whether different activities during that time result in different levels of learning.

The study consisted of a one-year pilot followed by two years of data collection, with a single science instructor implementing PI at a diverse public school. Each day for an entire semester, this instructor taught four class periods in four different ways. More specifically, four class periods (two AM and two PM) were each randomly assigned to one of four unique experimental conditions. The difference between the class periods was how students were instructed to spend their time between clicker votes during cycles of PI. In one condition, students talked with a peer between clicker votes; in another, students wrote in a journal; and in a hybrid condition, students both wrote and talked. In a fourth and final “control” condition, students simply listened to their teacher provide additional explanation of the topic between clicker votes.

In each experimental condition, the teacher read from a script that specified how students were to spend the time between clicker votes (the part of the script in all caps varied by condition):

Please rephrase the question in your own words [instructor would then wait about 30 seconds, using a stopwatch]. Identify the letter of your answer choice and the content of your answer [then instructor would wait another 15 seconds]. Explain your thinking [TO YOUR PARTNER; IN YOUR JOURNAL; FIRST IN YOUR JOURNAL AND THEN WITH YOUR PARTNER]. What physics or science is behind your answer? If there is no physics or science, describe your prior experience that influenced your thinking and your process of elimination.

Such a script was unnecessary in the control condition, in which the instructor provided supplemental teaching, because students in this group did not have the opportunity to talk with peers or write in a journal between clicker votes.

Two to three clicker questions were posed in each class session. To account for variation in student attention and behavior associated with the time of the school day, the treatment assignments were flipped in a second year of the same study with the same teacher (i.e., the AM classes in year 2 did what the PM classes did in year 1, and vice versa). Combined, this yielded a sample of 250 students.
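The year-to-year flip is a simple counterbalancing step. In this hypothetical Python sketch, the four conditions are randomly assigned to four periods in year 1, and in year 2 each AM period takes a PM period’s year-1 condition (and vice versa). The period labels and the exact AM/PM pairing are assumptions; the article says only that AM and PM assignments were exchanged.

```python
import random
from typing import Optional

# The four between-vote conditions described in the study.
CONDITIONS = [
    "talk with a peer",
    "write in a journal",
    "write, then talk (hybrid)",
    "listen to teacher explanation (control)",
]

# Hypothetical period labels: two AM and two PM periods.
PERIODS = ["AM-1", "AM-2", "PM-1", "PM-2"]

# Assumed pairing for the flip: each AM period trades with one PM period.
FLIP = {"AM-1": "PM-1", "AM-2": "PM-2", "PM-1": "AM-1", "PM-2": "AM-2"}


def assign_year1(seed: Optional[int] = None) -> dict[str, str]:
    """Randomly assign each condition to exactly one period."""
    rng = random.Random(seed)
    shuffled = CONDITIONS[:]
    rng.shuffle(shuffled)
    return dict(zip(PERIODS, shuffled))


def assign_year2(year1: dict[str, str]) -> dict[str, str]:
    """AM periods take the PM periods' year-1 conditions, and vice versa."""
    return {period: year1[FLIP[period]] for period in PERIODS}


if __name__ == "__main__":
    year1 = assign_year1(seed=2020)
    year2 = assign_year2(year1)
    for period in PERIODS:
        print(f"{period}: year 1 = {year1[period]!r}, year 2 = {year2[period]!r}")
```

Whatever the year-1 draw, every condition is taught once in the morning and once in the afternoon across the two years, which is the point of the flip.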

Results show that the average learning gain for all students in this study on the widely used Force Concept Inventory (Hestenes, Wells, and Swackhamer 1992) was 33.7% of what could possibly have been gained from pretest to posttest. The Force Concept Inventory has 30 questions. For example, if a student scores 24 at pretest, the maximum possible gain at posttest is six additional correct answers; if that student improves to 26 at posttest, they gained 2 of a possible 6 points, which is a 33% learning gain. Students in the experimental condition where they talked with peers between clicker votes had the largest learning gains. Importantly, these students demonstrated a 6.6% improvement over students who instead received additional explanation of the topic between clicker votes. This suggests that the presence of clickers is not sufficient; rather, the peer discussion encouraged by PI is a key ingredient in PI’s success.
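The learning-gain calculation in the Force Concept Inventory example is what physics education researchers often call normalized gain: the points gained divided by the points still available at pretest. Below is a minimal Python sketch using the article’s own 24 → 26 example. The function names are illustrative, and averaging per-student gains is only one plausible reading of “average learning gains,” since the article does not state its exact aggregation method.

```python
from statistics import mean

FCI_MAX_SCORE = 30  # the Force Concept Inventory has 30 questions


def normalized_gain(pre: int, post: int, max_score: int = FCI_MAX_SCORE) -> float:
    """Share of the possible improvement actually achieved: (post - pre) / (max - pre)."""
    if pre >= max_score:
        raise ValueError("pretest score is already at the maximum; gain is undefined")
    return (post - pre) / (max_score - pre)


def average_gain(scores: list[tuple[int, int]]) -> float:
    """Mean of per-student normalized gains for (pretest, posttest) pairs."""
    return mean(normalized_gain(pre, post) for pre, post in scores)


if __name__ == "__main__":
    # The article's example: 24 at pretest, 26 at posttest -> 2 of 6 possible points.
    print(round(normalized_gain(24, 26), 3))  # 0.333
```

Dividing by the remaining points, rather than by the full 30, keeps students who start with high pretest scores from being penalized for having little room left to improve.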

Micki Chi’s (2009) Interactive-Constructive-Active-Passive (ICAP) framework provides a lens through which the potential benefits of PI can be understood. ICAP hypothesizes that as instruction moves from Passive → Active → Constructive → Interactive, learning outcomes should become deeper (Chi 2009; Fonseca and Chi 2010).

Recall that students given a chance to talk with peers between clicker votes learned more than students passively listening to additional instruction between clicker votes. Through the lens of ICAP, peer discussion between clicker votes is an Interactive learning activity that can facilitate deeper learning than additional teacher explanation of the topic, which ICAP classifies as a more Passive activity (Table 1). This study provides evidence that the presence of technology alone is not sufficient to promote Interactive engagement. Rather, the findings suggest that subtle changes in how a technology is used can result in significant differences in how students learn.

While this study involved handheld clickers, much of what is discussed in this article applies equally to a newer generation of cloud-based technologies that let students enter anonymous responses via web-enabled devices. Although the names and precise nature of these technologies vary across classrooms, they share two capabilities: they give students an anonymous way to participate in class, and they give instructors real-time capture of what their students submit.

Table 1. Interactive-Constructive-Active-Passive (ICAP) taxonomy for various overt learning activities (Chi 2009).

Purchasing technology is not enough. How that technology is used to support various forms of student engagement is key, and the ICAP framework offers a way to think about the depth of engagement that takes place when students are asked to use educational technology in different ways.

J. Bryan Henderson (jbryanh@asu.edu) is an associate professor at Arizona State University’s Mary Lou Fulton Teachers College in Tempe, Arizona.
