
Why Does the Moon Look Like That Again?

Assessing Students’ Models of the Lunar Cycle

One of the biggest challenges we have faced in our district in shifting instruction, assessments, and curriculum to align with our state’s adaptation of the Next Generation Science Standards (NGSS) has been the science practice of Developing and Using Models. For us, as for everyone, this practice represents a significant departure from the solar system representations, edible DNA, and papier-mâché insects that we have typically called models. Instead, we are working to engage students in creating and using models that explain the natural world (Krajcik and Merritt 2012).

In our district, sixth-grade teachers have been collaborating to deepen their understandings of this practice and create meaningful opportunities for students to create and revise models. In this article, we describe a modeling task and accompanying rubric that we designed for an existing unit about lunar cycles. We also share what we learned from students’ initial models and how this impacted subsequent instruction in the unit. We close with a discussion of students’ final models and how teachers describe the benefits for students of engaging in modeling.

Developing the task and rubric

We chose to integrate modeling into an existing unit on lunar cycles because the causes of the lunar cycle are not easily observed but can be explained with a model. In addition, the NGSS performance expectation MS-ESS1-1 explicitly includes modeling as the focal science practice (NGSS Lead States 2013). We began our unit (see Online Supplemental Resources) by having students observe the Moon in the night (and day) sky. Each day for several weeks preceding the unit, students drew the shape of the Moon they observed in the nighttime or daytime sky. Students then met in small groups to discuss the patterns they noticed in their data and asked questions about these patterns. Some of the patterns students noticed were that the Moon appeared to get bigger and then smaller across the month, that the Moon appeared to “grow” toward a full Moon and then “shrink” and not be visible, and that the shapes of the Moon in the second half of the month appeared to be the reverse of what was observed in the first half of the month. Students also suggested that these patterns repeat every month. These data and patterns served as a compelling phenomenon for the unit as students were motivated to figure out why these patterns occurred. During this time, teachers also introduced students to what counts as a scientific model. They emphasized that scientists use the term model differently than we typically do in conversation. Instead of just being a representation, a scientific model must explain how or why a phenomenon occurs. Students then created a model to answer the question: Why do we see a waxing crescent Moon in the sky every month?

Students worked independently during one 45-minute class period to develop a paper-and-pencil model to explain why this shape of the Moon occurs each month. Students created these models at the beginning of the unit and revised them at the end. The initial models served to inform teachers’ instruction of the unit and were an important opportunity for students to make their ideas visible. The revised models provided evidence of students’ understandings of the lunar cycle, and looking across both models demonstrated for teachers and students how students’ ideas changed over time. Because this was a short unit (11 days), an initial and final model worked well, but we suggest that longer units may necessitate at least one additional opportunity for students to revise their models during the unit.

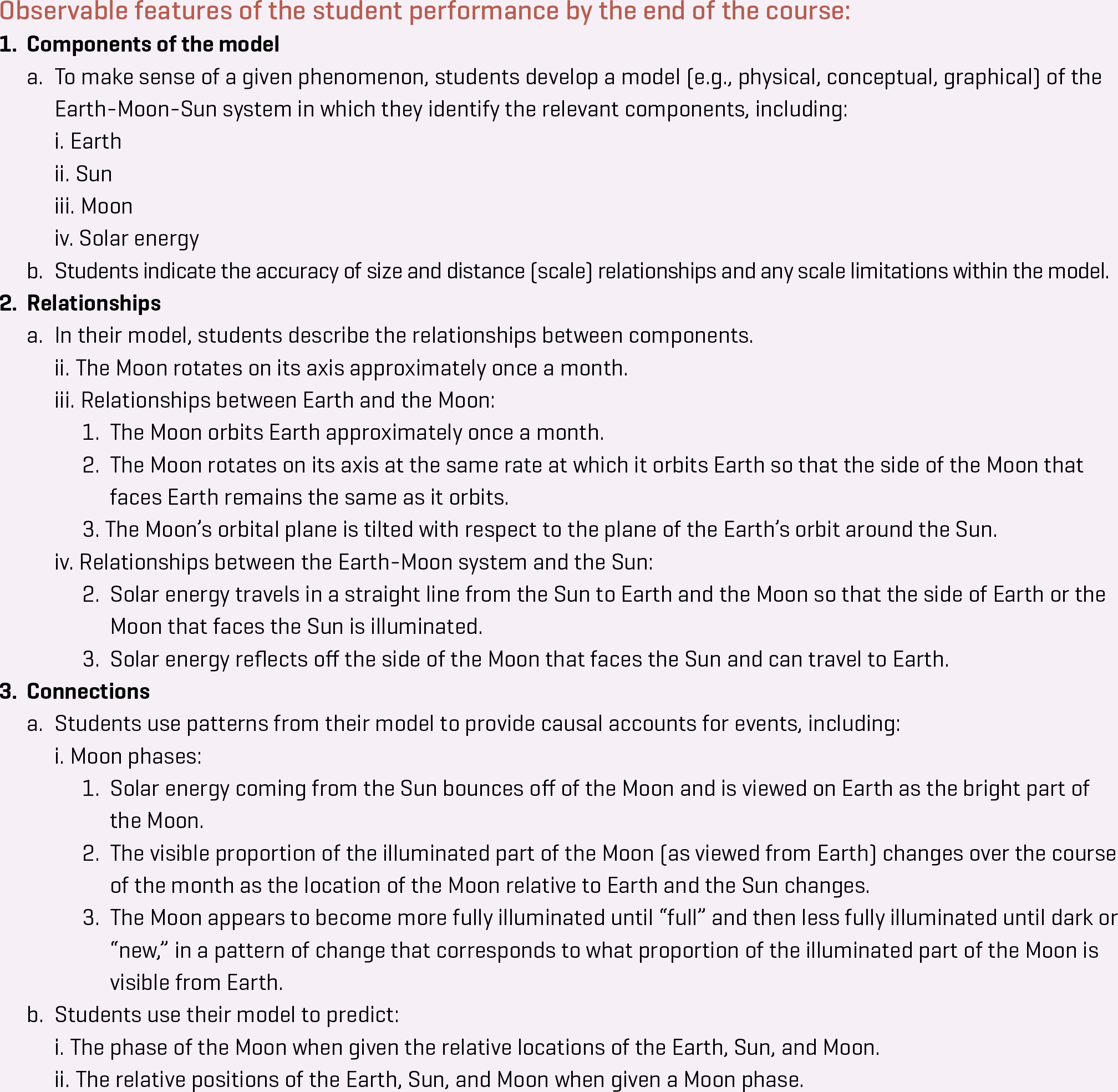

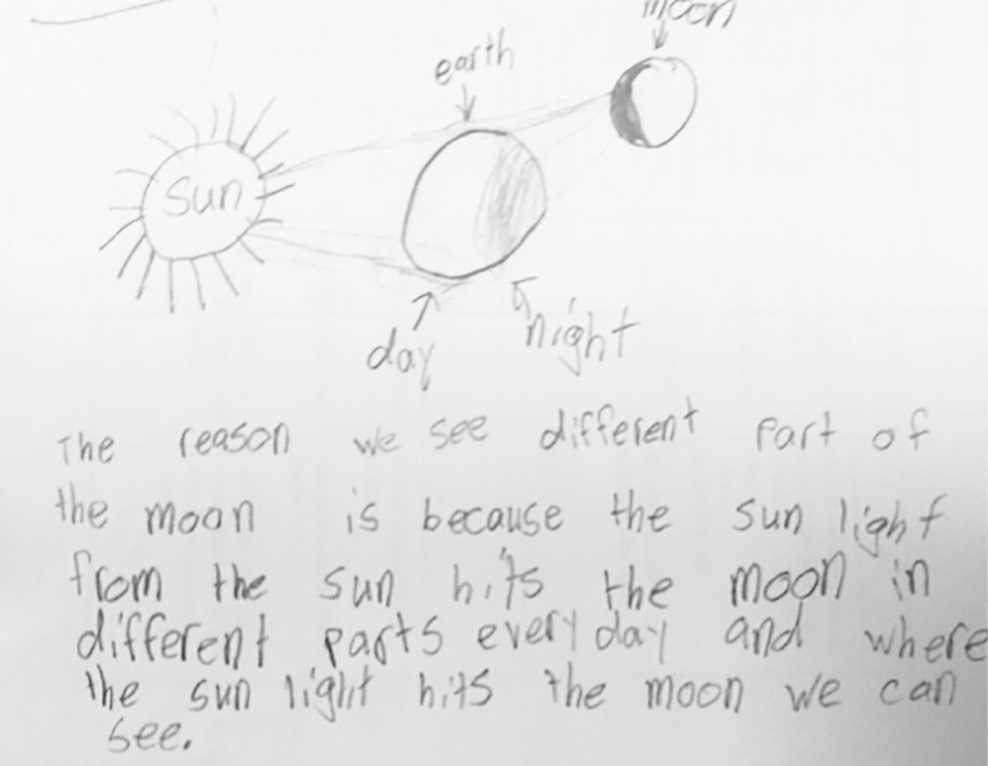

To assess students’ models, we developed a rubric. We decided to use a 4-point scale for the categories of our rubric, with 4 being the highest, because this is the scale most familiar to our students. We began designing our rubric by examining the NGSS Evidence Statements (NGSS Lead States 2015), which contain key observable features of a proficient model. The Evidence Statements suggest three important aspects of models: (a) components of the model, the variables or factors in the model; (b) relationships, those between the components; and (c) connections, students’ reasoning about the causal mechanism that explains the phenomenon. Figure 1 contains selections of the NGSS Evidence Statement for MS-ESS1-1 related to the lunar cycle.

Figure 1. NGSS Evidence Statement for MS-ESS1-1 Related to the Lunar Cycle

Using these Evidence Statements, we created the first category of our rubric, which we also called components (see Figure 2). Similar to the NGSS Evidence Statements, our category focuses on how well students incorporated the Earth, Sun, Moon, and energy from the Sun, and on whether the components themselves and the distances between them are roughly to scale. To design the rest of our rubric, we initially created separate relationships and connections categories as suggested by the NGSS Evidence Statement (see Figure 1). However, we found it challenging to separately evaluate how well students’ models showed the relationships between the Earth, Sun, and Moon, and how well they used these relationships to explain the lunar cycle. Therefore, we combined these categories into one accuracy of explanation of phenomena category (see Figure 2).

Figure 2. Rubric for providing feedback about student models

We then created a third category for our rubric, clarity of communication (see Figure 2), which is not a feature of the NGSS Evidence Statements. Models are “concrete artifacts that can be shared and critiqued by others” (Meyer and Krajcik 2015, p. 292), and we wanted to ensure that students’ models could be easily understood by peers and teachers.

Initial student models

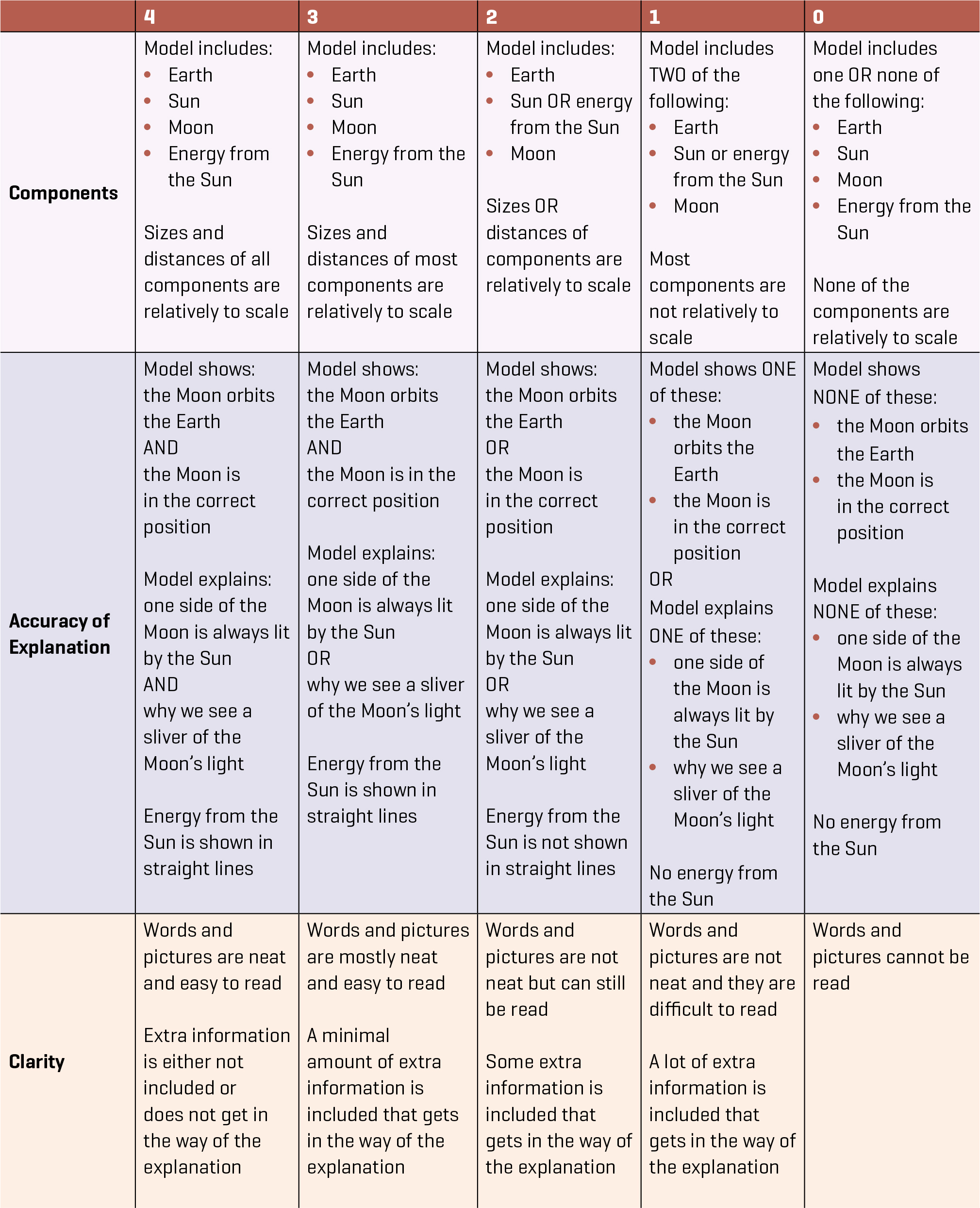

We used the rubric to assess students’ initial models to gather data to inform teachers’ instruction of the unit. We noticed several patterns of student conceptions about the lunar cycle. In regard to the components of the models, students typically included the Earth, Sun, and Moon, but these were often similarly sized and similarly spaced from each other. For the accuracy category, students generally did not explain why or how the lunar cycle occurs, simply that it does. Students also had several inaccurate conceptions, including that the Earth blocks the Moon, that the Earth casts a shadow on the Moon, and that different amounts of sunlight hit the Moon. However, most students did explain in their models that the Moon does not make its own light and instead reflects sunlight.

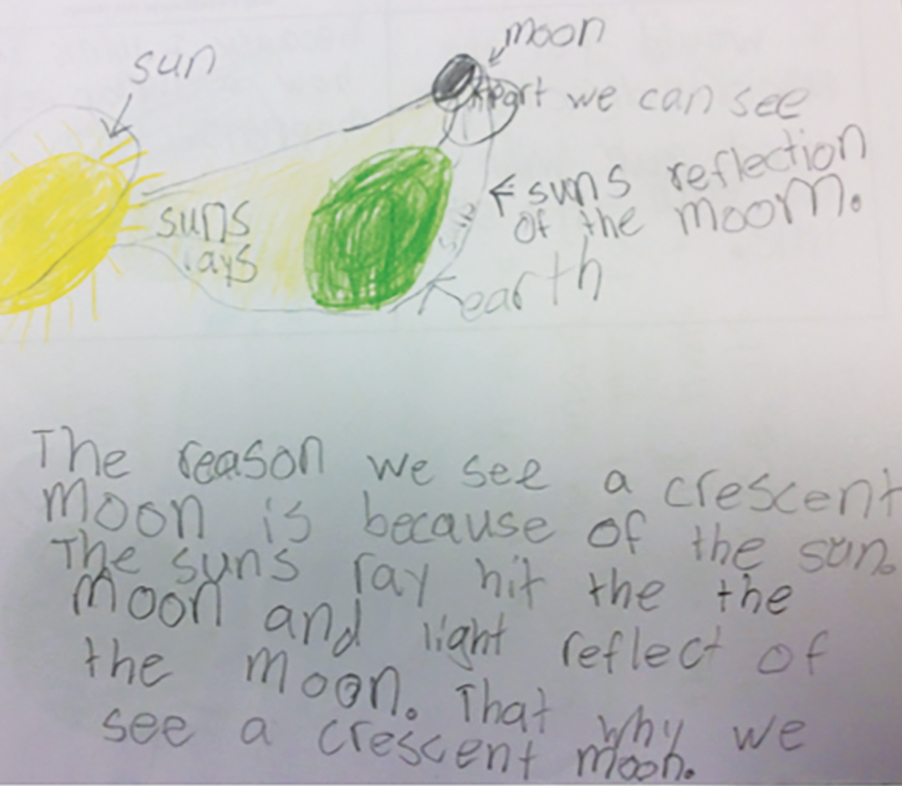

Figure 3 is a sample initial model. This student’s model accurately explained that the Moon’s light comes from the Sun and what we see of the Moon relates to this sunlight. However, the model also inaccurately explained that different parts of the Moon can be illuminated by the Sun, causing the different phases. The student also drew the Sun, Moon, and Earth at almost equal distances from each other and the Earth as the largest object, with similarly sized Sun and Moon. After assessing the initial models, teachers taught a unit focused on students exploring the Earth-Sun-Moon relationship using simulations, discussions, and readings.

Figure 3. Sample student initial model

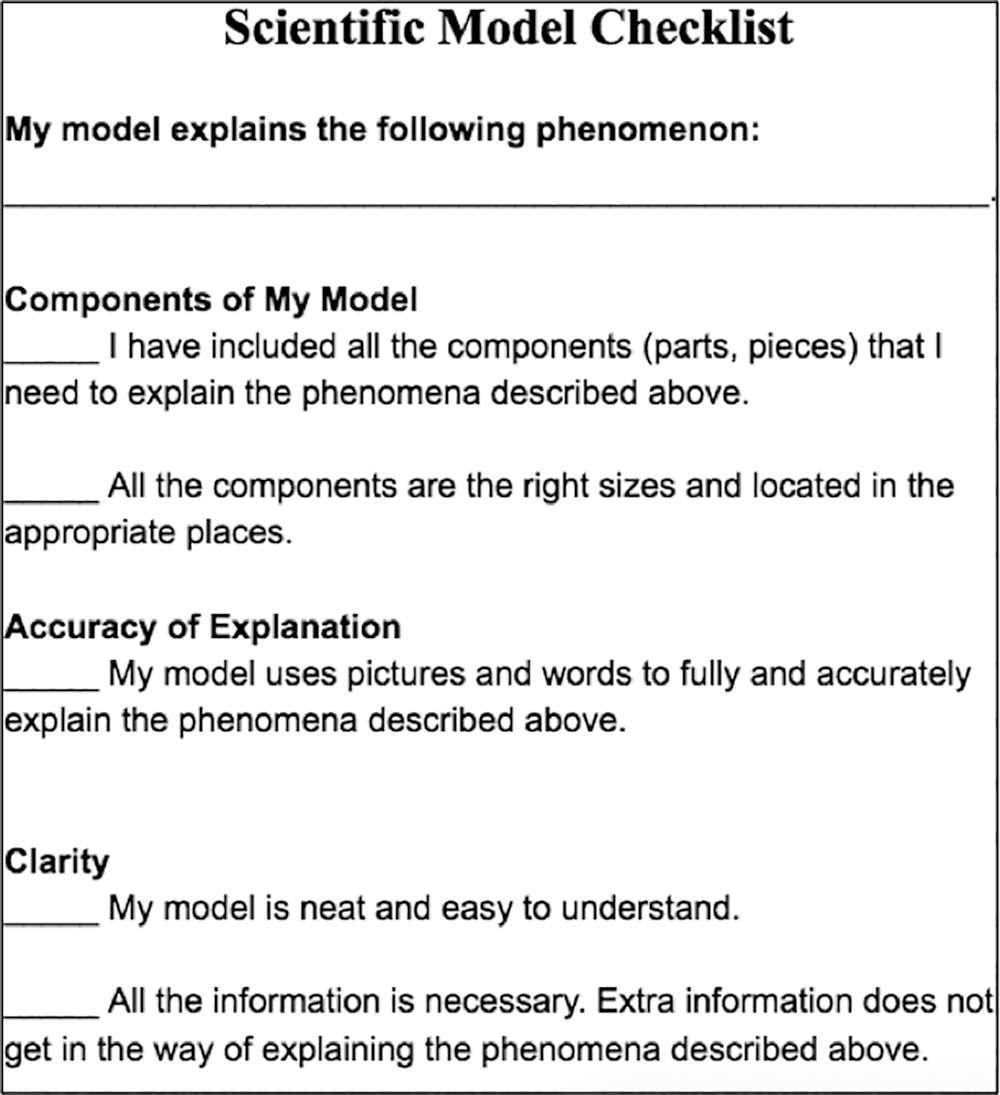

Teachers revisited what counts as a scientific model and used examples and nonexamples of models to reinforce the differences between pictures and scientific models. Teachers also introduced a checklist based on the rubric for students to use as they revised their models (see Figure 4). In addition, on the basis of students’ learning and language needs, teachers modified how students completed the final modeling task. Some students were encouraged to explain their models verbally, and other students were provided with a word bank that included Sun, Earth, Moon, and solar energy.

Figure 4. Student scientific model checklist

After students revised their models, teachers provided sentence frames to support students’ critiques of peer models (see Figure 5). Teachers emphasized that peer critique is intended to help improve everyone’s models in a manner that is honest, safe, and respectful. Students used sticky notes to provide two pieces of feedback to two peer models. They were then able to use the feedback they received to further revise their model. The teacher then assessed the final models using the rubric.

Figure 5. Sample sentence frames to support peer-to-peer critique

You might want to add…

One thing I don’t understand is…

You could change…

I understand this clearly because…

One question I have is…

Make sure you include…

You did a great job showing… because…

It might be helpful to…

Assessing students’ final models

When we assessed students’ final models, we found considerable growth. Students’ scores increased on average by 2.7 points, with the greatest growth in the accuracy and components categories. We theorize that we saw the most growth in these categories because students developed deeper understandings of why we have a lunar cycle. For the final models, most students maintained the clarity of their drawings and writing, but included far more accurate explanations of why the lunar cycle occurs.
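For teachers who want to tabulate this kind of growth themselves, the arithmetic can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it assumes the gain is the difference in total rubric score (three categories, each scored 1 to 4) between a student's initial and final model, and the function names and student scores below are made up for the example rather than taken from our classroom data.

```python
from statistics import mean

# The three rubric categories described in this article, each scored 1-4.
CATEGORIES = ("components", "accuracy", "clarity")


def total(scores):
    """Sum one model's category scores after checking each is on the 1-4 scale."""
    for category in CATEGORIES:
        if not 1 <= scores[category] <= 4:
            raise ValueError(f"{category} score must be between 1 and 4")
    return sum(scores[category] for category in CATEGORIES)


def average_gains(initial, final):
    """Return the mean gain per category and in total score across students.

    `initial` and `final` map a student label to {category: score}.
    """
    students = sorted(initial)
    gains = {
        category: mean(final[s][category] - initial[s][category] for s in students)
        for category in CATEGORIES
    }
    gains["total"] = mean(total(final[s]) - total(initial[s]) for s in students)
    return gains


# Made-up scores for two hypothetical students, for illustration only.
initial = {
    "Student A": {"components": 2, "accuracy": 1, "clarity": 3},
    "Student B": {"components": 1, "accuracy": 2, "clarity": 3},
}
final = {
    "Student A": {"components": 3, "accuracy": 3, "clarity": 3},
    "Student B": {"components": 3, "accuracy": 3, "clarity": 4},
}

print(average_gains(initial, final))
```

Reporting the gain by category as well as in total makes it easier to see where students grew most, which is the comparison we draw in this section.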

Figure 6 is the model created by the same student whose initial model appears in Figure 3. This student improved his model in several ways. First, he more accurately scaled the Earth, Sun, and Moon and their distances from each other (components) in this final model, although the spacing between the objects could be improved. Second, although the student drew the Moon in the reverse location for a waxing crescent, he did explain in words and pictures that sunlight reflects off the Moon but that we can see only the crescent from Earth (accuracy). In addition to locating the Moon in its correct spot, the student could continue to improve his model by showing the Moon orbiting the Earth and explaining that one side of the Moon is always lit by the Sun. Students who demonstrated significant gaps in understanding met in small groups with the teacher for additional reteaching and an opportunity for further revision of their model.

Figure 6. Sample student final model

Lessons learned

This task and its associated rubric were our first attempt at engaging students in creating and revising models to explain a phenomenon. We were pleased to see growth in students’ abilities to explain the causes of the lunar cycle. Teachers also noticed that students developed stronger understandings of what counts as a model and the importance of considering the limitations of models to explain a phenomenon. We believe that the peer-review portion of our instruction was especially important because it forced students to consider the strengths and weaknesses of peers’ models and use feedback to improve their own models. Engaging in critique increased students’ comprehension of the lunar cycle and aided them in appreciating the collaborative and sometimes critical work of scientists.

Teachers described growth in their own understandings of what it looks like for students to engage in the practice of Developing and Using Models. Instead of focusing on students drawing and naming the phases of the Moon, teachers engaged students in explaining why the lunar cycle occurs. They found this type of instruction to be far more challenging to enact in the classroom, but also much more rewarding for students as they were able to see how their ideas changed over time.

Rebecca Katsh-Singer (katshsingerr@westboroughk12.org) is the science curriculum coordinator for the Westborough Public Schools in Westborough, Massachusetts, and a Lecturer in Education at Brandeis University in Waltham, Massachusetts. Chris Rogers (rogersc@westboroughk12.org) is a sixth-grade teacher in the Westborough Public Schools.
