Research and Teaching

Two-Stage (Collaborative) Testing in Science Teaching: Does It Improve Grades on Short-Answer Questions and Retention of Material?

By Genevieve Newton, Rebecca Rajakaruna, Verena Kulak, William Albabish, Brett H. Gilley, and Kerry Ritchie

The purpose of this study was to determine (a) if two-stage testing improves performance on both multiple-choice and long-answer questions, (b) if two-stage testing improves short- and long-term knowledge retention, and (c) whether there are differences in knowledge retention based on question type.

Student evaluations in higher level education have traditionally involved individual testing where students complete examinations independently. These evaluations allow instructors to directly assess student learning and provide an opportunity for students to demonstrate their individual knowledge. However, several disadvantages have been noted, such as students’ performance anxiety and low self-confidence (Zimbardo, Butler, & Wolfe, 2003). Additionally, with individual testing, there is often a delay between when students complete the test and when they receive feedback. This delay in feedback is problematic as students are less invested in the answers and are therefore unlikely to learn from their mistakes and retain course information (Epstein et al., 2002). To mitigate these issues, two-stage testing, which is a form of collaborative testing, has been researched extensively as an alternative testing method, in areas such as students’ academic performance (Bloom, 2009; Cortright, Collins, Rodenbaugh, & Dicarlo, 2003; Eaton, 2009; Gilley & Clarkston, 2014; Giuliodori, Lujan, & DiCarlo, 2008; Kapitanoff, 2009; Leight, Saunders, Calkins, & Withers, 2012; Meseke, Nafziger, & Meseke, 2010; Rao, Collins, & Dicarlo, 2002; Wiggs, 2011; Zimbardo et al., 2003) and retention of material (Bloom, 2009; Cortright et al., 2003; Gilley & Clarkston, 2014; Leight et al., 2012; Meseke et al., 2010).

Two-stage testing involves students taking a test individually and then immediately afterward completing the same or a very similar test again in groups, thereby allowing for discussion of answers and for other cognitive processes to occur beyond what individual tests alone usually involve. The first stage allows for individual assessment providing students with the opportunity to formulate answers on their own, which motivates discussion in the second stage when collaboration is expected to clarify or corroborate their original answers (Zipp, 2007). Moreover, a grading scheme that divides the weighting between the individual portion of the test and the group portion ensures that students come prepared for testing and rewards individual performance, while still providing the incentive to participate in the collaborative section (Stearns, 1996). Therefore, the two-stage testing format confers additional benefits in terms of learning compared with traditional individual testing, with relative ease of application.

Accordingly, two-stage testing has been used across a variety of disciplines and levels of higher education. However, research into the effects of two-stage testing on performance has primarily been done using multiple-choice questions (Cortright et al., 2003; Crowe, Dirks, & Wenderoth, 2008; Gilley & Clarkston, 2014), and the effect of collaborative testing on long-answer question performance is currently unknown. This is of particular importance as it is challenging to generate multiple-choice questions that evaluate higher order thinking skills, particularly at the evaluation and synthesis levels of Bloom’s Taxonomy, because potential answers are presented with the question (Zheng, Lawhorn, Lumley, & Freeman, 2008). Long-answer questions are more conducive for testing higher order thinking skills (students must generate rather than recognize an answer), and they provide a more reflective evaluation of student learning as the element of guessing is eliminated with this format (Crowe et al., 2008).

In addition to performance, retention of course content following examinations is another commonly researched effect of two-stage testing, although the findings in this area are equivocal. In the short term, Gilley and Clarkston (2014) found collaborative testing improved retention after 3 days. Similarly, Bloom (2009) observed improved content retention with two-stage testing in the long term after a period of 3 weeks, whereas Cortright et al. (2003) noted improvement after 4 weeks. However, Leight et al. (2012) found no improvement in retention with collaborative testing after 3 weeks. There are notable differences in methodology between collaborative testing retention studies, such as the type of question used to measure retention and the time lapse between collaborative testing and retention measurement, which should be considered when evaluating outcomes. Clearly, there is some conflict with respect to retention effectiveness, at least in the long term. To our knowledge, no study has examined long-term retention beyond 4 weeks; therefore, the objectives of this study were (a) to examine the effects of collaborative testing on long-answer performance compared with multiple choice, (b) to determine whether collaborative testing improves both short-term and long-term (6 weeks) retention of material, and (c) to determine whether there are differences in retention based on question type. The present study was devised with a similar experimental design to previous work done by our research group; details are found in Gilley and Clarkston (2014).

Methods

Course context

Two-stage testing was used during the fall semester of two distinct undergraduate science courses. One of the courses was a second-level Biochemistry course, generally taken by students in their second year of university education (n = 64), which explored biochemical foundations of human nutrition, exercise, and metabolism. The other course was a third-level Exercise Physiology course, taken by students in their third year of university education (n = 102), which examined physiological responses and adaptations to physical activity. Both were required courses for students enrolled in a 4-year program leading to a bachelor of applied science degree in Kinesiology. Students registered in the courses had learned the fundamentals of biochemistry and physiology in previous courses, and it was deemed by the researchers that these cohorts were at comparable levels of academic development. The instructors in both courses were also the researchers for the present study. The two courses spanned 12 weeks each and included a midterm, scheduled at the same time during the term, that was completed by all students. All students in each course were invited to participate in the research study; a total of 56 Biochemistry students and 94 Exercise Physiology students gave informed consent to have their data analyzed, and these are the students whose data were used in the present report.

Experimental design

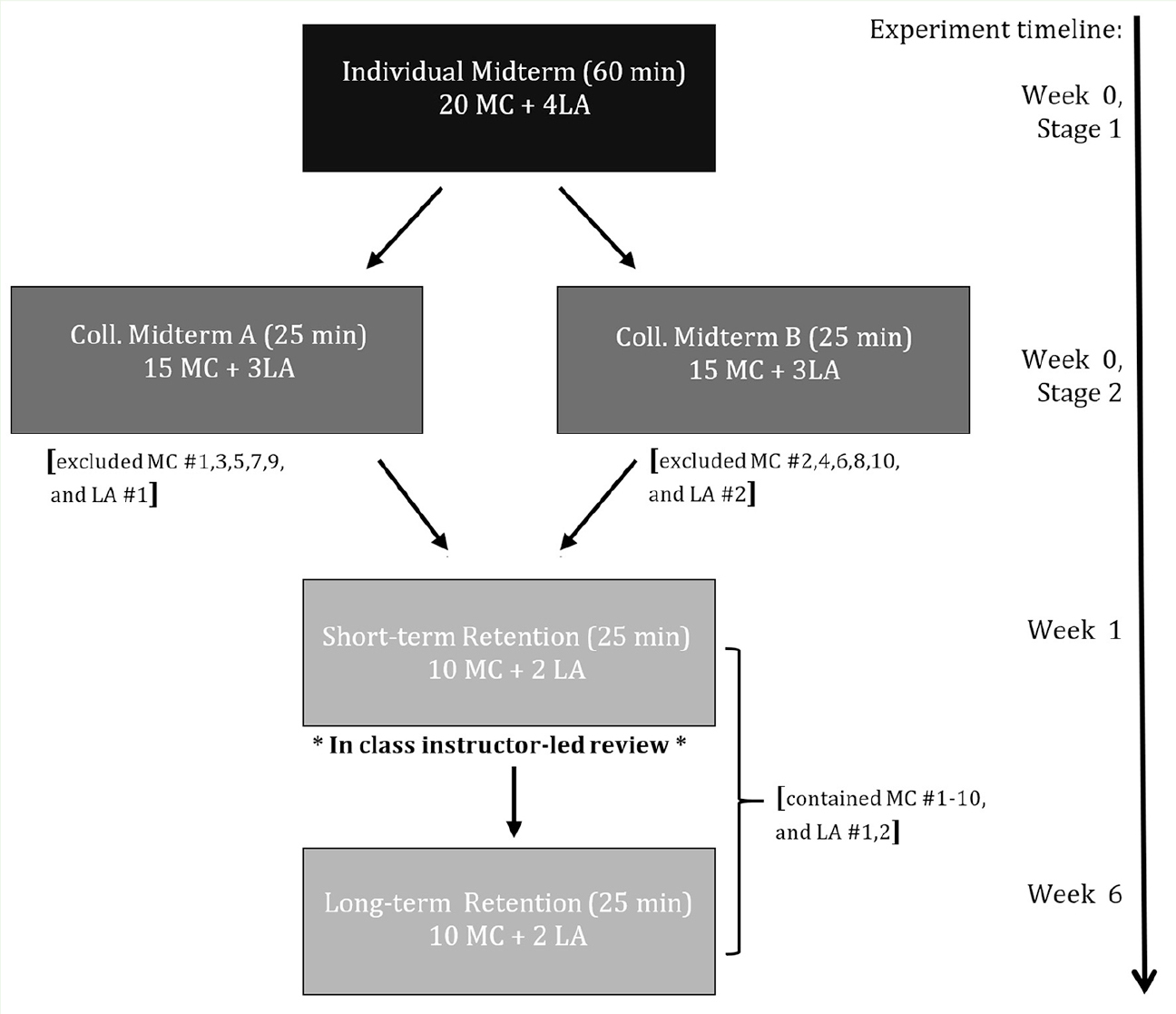

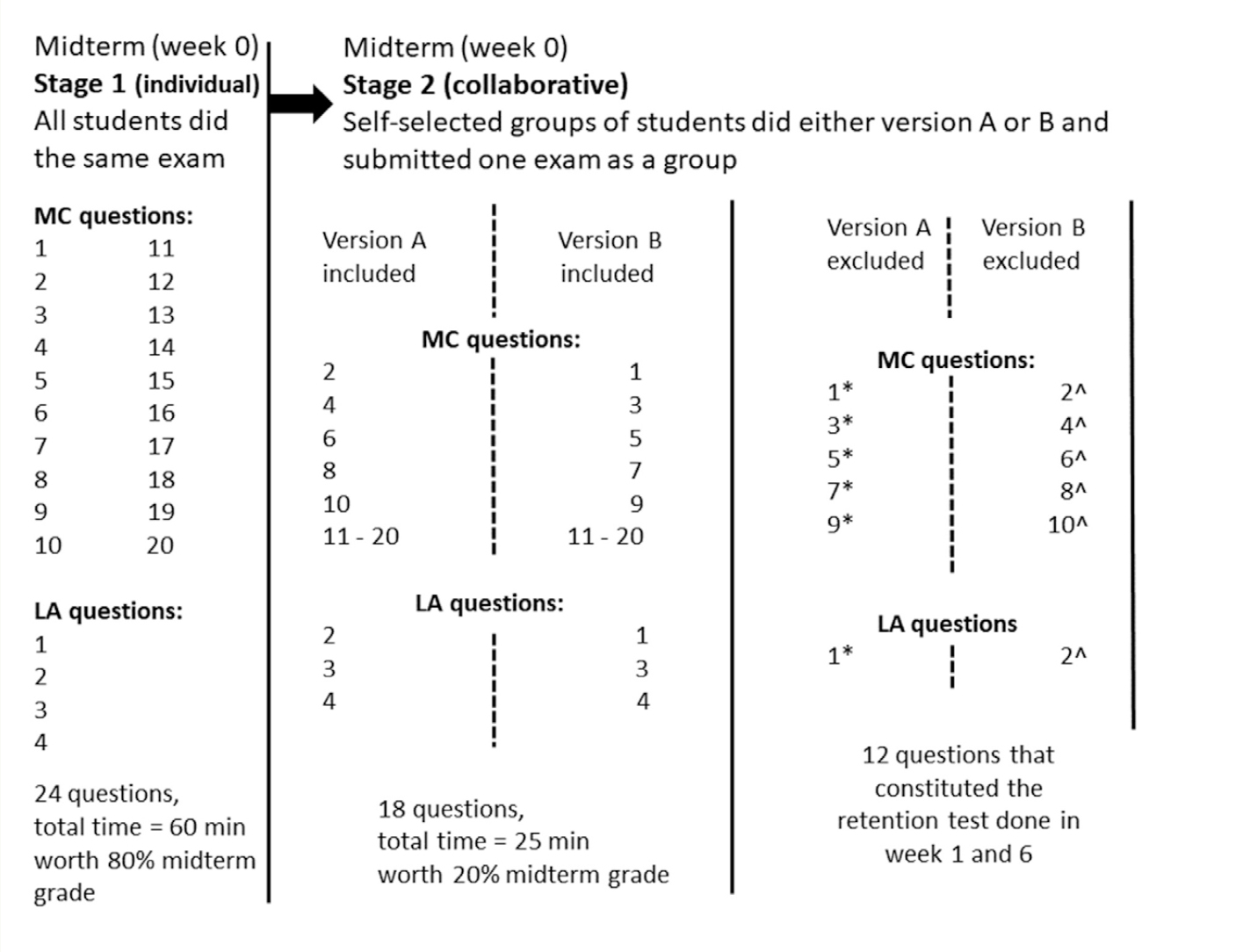

Performance

Identical testing protocols were used in both courses (Figures 1A and 1B). Six weeks into the course, the researchers administered a two-stage midterm that comprised an individual and a collaborative test done in succession. All registered students in the courses took the midterm, which was worth 30% of the final grade. Eighty percent of the midterm grade was allocated to the individual stage of the two-stage testing, with the remaining 20% allocated to the collaborative stage. Students were given 60 minutes to complete the individual stage, which comprised 20 multiple-choice questions worth one mark each and four long-answer questions worth five marks each; the individual exam was therefore graded out of 40 marks. Immediately after students handed in their completed individual midterm portion (Stage 1), students formed self-selected groups of two to five students and were given 25 minutes to complete a second test for the collaborative stage (Stage 2). There were two versions (A or B) of the collaborative test, which were randomly distributed to the student groups. Each version of the collaborative test contained 18 questions, with 15 multiple-choice questions and three long-answer questions taken directly from the individual Stage 1 test. This means that several questions that were answered individually in Stage 1 were removed to prevent students from seeing them again in the collaborative stage during that midterm. On Version A, five multiple-choice questions (Questions 1, 3, 5, 7, 9) and one long-answer question (Question 1) were removed, whereas on Version B, five different multiple-choice questions (Questions 2, 4, 6, 8, 10) and a different long-answer question (Question 2) were removed. To ensure consistency between the level of difficulty in the different versions of the Stage 2 test, all questions were matched for topic area and Bloom’s taxonomy level, which is an index of skill required to answer a question (e.g., knowledge or application). This was done by two independent evaluators with experience using Bloom’s taxonomy. Also, all the grading was done using rubrics written by the instructors to maintain grading consistency. Grading was done by graduate teaching assistants who were not part of the research team. Overall, the second stage was approximately 60% shorter in duration (lasting 25 minutes rather than 60) and contained 75% of the questions originally included in the first stage (18 of 24 questions).
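The weighting and test composition described above reduce to simple arithmetic. The following Python sketch is illustrative only: the helper name `two_stage_grade` and the example scores are hypothetical and are not taken from the study; only the 80/20 split, the question counts, and the time limits come from the text.

```python
def two_stage_grade(individual_pct, collaborative_pct,
                    individual_weight=0.80, collaborative_weight=0.20):
    """Combine two stage scores (each 0-100) into one midterm percentage.

    The 80/20 split is the one reported in the text; the function itself
    is a hypothetical helper, not the authors' grading tool.
    """
    if abs(individual_weight + collaborative_weight - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return (individual_weight * individual_pct
            + collaborative_weight * collaborative_pct)


# Stage 1 composition reported in the text.
stage1_questions = 20 + 4          # multiple-choice + long-answer questions
stage1_marks = 20 * 1 + 4 * 5      # 40 marks in total

# Stage 2 composition: 15 multiple-choice + 3 long-answer questions.
stage2_questions = 15 + 3
share_of_questions = stage2_questions / stage1_questions  # 0.75
time_reduction = 1 - 25 / 60       # about 0.58, i.e., approximately 60% shorter

# Hypothetical student: 70% on the individual stage, 90% with the group.
example = two_stage_grade(70.0, 90.0)
```

Because the individual stage carries most of the weight, a strong group score raises the midterm grade only modestly, which is in keeping with the stated aim of rewarding individual preparation.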

All 12 questions that were removed from the Stage 1 midterm were later used in another test given to students 1 week and 6 weeks after the midterm to compare individual performance and retention across time (more details on this rationale can be found in Gilley & Clarkston, 2014).

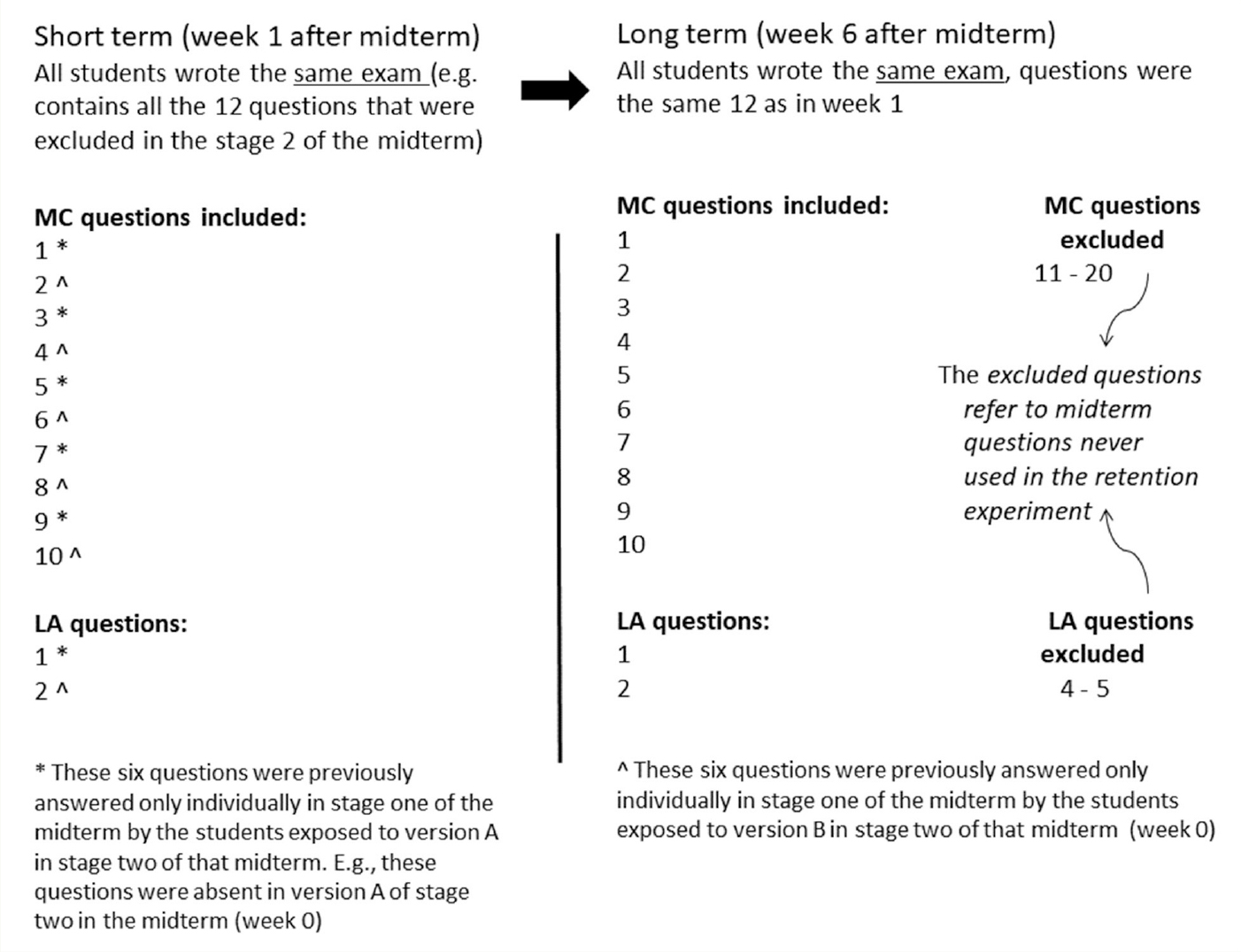

Retention

To assess short- and long-term retention of material following two-stage testing, a retention test was administered individually to all students during class time. The same test was administered twice during the course: the first time was at 1 week after the original midterm (short-term retention), and the second time was at 6 weeks (long-term retention) after the original midterm. The retention test was comprised of a total of 12 questions: the 10 multiple-choice and two long-answer questions that were removed from the original midterm when creating the two versions (A and B) of the collaborative test (refer to Figures 1A, 1B, and 1C). As such, all students wrote the same retention test, wherein half of the questions had been previously answered only individually (questions that were removed after Stage 1), and the other half of the questions had been answered both individually and collaboratively. This means that 50% of the question pool in the retention test was seen only once before, during the individual phase of the midterm by the students, whereas the other 50% of the question pool was seen twice. This experimental design allowed each student to act as his or her own control and to account for any potential differences in question difficulty because no question was exclusively “individual” or “collaborative” when considered in the whole-class context. On both occasions, the retention tests were unannounced and were administered during the first 25 minutes of class. Notably, immediately following the 1-week (short-term) retention test, the instructors in each course handed back the graded original midterms and led an in-class review to take up the correct answers to the entire midterm. Overall, the two retention tests done for research purposes equated to 50 minutes of the total time allotment of the 12-week courses (average 72 hours); this experimental intervention did not sacrifice the delivery of academic content.
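The crossover logic of this design can be made concrete with a short sketch. The Python below is a minimal illustration, assuming the question numbers listed for Versions A and B in the Performance subsection; the names `REMOVED` and `retention_conditions` are hypothetical and do not come from the study.

```python
# Questions removed from each collaborative version, as listed in the text.
REMOVED = {
    "A": {"mc": {1, 3, 5, 7, 9}, "la": {1}},
    "B": {"mc": {2, 4, 6, 8, 10}, "la": {2}},
}


def retention_conditions(version):
    """Label each retention-test question for a student whose group got `version`.

    A question removed from the student's own version was seen only in
    Stage 1 ("individual"); a question removed from the other version was
    still on the student's Stage 2 test ("collaborative").
    """
    if version not in REMOVED:
        raise ValueError("version must be 'A' or 'B'")
    other = "B" if version == "A" else "A"
    labels = {}
    for qtype in ("mc", "la"):
        for q in sorted(REMOVED[version][qtype]):
            labels[(qtype, q)] = "individual"
        for q in sorted(REMOVED[other][qtype]):
            labels[(qtype, q)] = "collaborative"
    return labels


labels_a = retention_conditions("A")
labels_b = retention_conditions("B")
```

Every one of the 12 retention questions receives opposite labels for the two versions, which is what lets each student serve as his or her own control and keeps question difficulty balanced across conditions.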

Data analysis

All data were tested for normality using the Kolmogorov–Smirnov test, and it was found that the distributions were either normal or approximately normal. Significance was set at p ≤ .05.

Paired samples t-tests were used to assess performance, with analysis of grades on the individual and collaborative stage tests using comparisons based on overall performance and separated by question type. Performance data were analyzed as both a percent of total (test grade as a percentage) and the percent change from the individual to the collaborative stage. Grades were calculated based on the same 15 multiple-choice and three long-answer questions of the individual stage that were also completed on the respective collaborative stage.
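For readers unfamiliar with the paired comparison, the sketch below computes the paired-samples t statistic using only the Python standard library. It is an illustration under stated assumptions: the function name `paired_t` and the five pairs of grades are hypothetical, the text does not say which software the authors used, and the p value (which requires the t distribution) is deliberately left to a statistics package.

```python
import math
import statistics


def paired_t(before, after):
    """Return (mean difference, t statistic, degrees of freedom) for paired scores."""
    if len(before) != len(after) or len(before) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [a - b for b, a in zip(before, after)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)           # sample SD of the differences
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    se_diff = sd_diff / math.sqrt(len(diffs))   # SE of the mean difference
    return mean_diff, mean_diff / se_diff, len(diffs) - 1


# Hypothetical percent grades for five students (not data from the study).
individual = [62.0, 70.0, 55.0, 81.0, 68.0]
collaborative = [80.0, 85.0, 78.0, 90.0, 84.0]

mean_diff, t_stat, df = paired_t(individual, collaborative)
```

The test operates on within-student differences, so each student's collaborative grade is compared only with that same student's individual grade on the shared questions.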

A two-way repeated measures analysis of variance (ANOVA) was used to assess retention as measured by test grade as a percentage for multiple-choice and long-answer questions at three time points: original midterm, 1 week (short term), and 6 weeks (long term). This was done for questions seen under individual or collaborative conditions. To better visualize changes in performance across time, retention was also graphically expressed as the percent grade change between a student’s retention test at both Weeks 1 and 6 compared with their individual midterm. This analysis was repeated for each question type. The grade was calculated on the basis of the same 10 multiple-choice questions and two long-answer questions carried over from the individual midterm to the short-term and long-term retention tests. It should be noted that a smaller sample size of n = 85 was used when analyzing retention data over time, as not all students were present in class on the days of the unannounced retention test (whereas all students were present for the two-stage midterm). In addition, if a student took the long-term retention test but not the short-term retention test, they were excluded from the retention analysis, as they would not have had the same exposure to the questions, potentially confounding the results (i.e., the testing effect). Effect sizes for significant results were calculated as Cohen’s d.
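The exclusion rule and the grade-change measure can be sketched as follows. This is a hedged illustration, not the authors' procedure: it assumes a complete-case rule (students missing either retention test are dropped) and treats grade change as a difference in percentage points; the function `retention_changes`, the record layout, and the example grades are all hypothetical.

```python
def retention_changes(records):
    """Compute grade change (retention minus midterm) for complete cases only.

    `records` maps a student id to percent grades at three time points:
    "midterm", "week1", and "week6"; a missed test is stored as None.
    Students missing either retention test are dropped (an assumed
    complete-case reading of the exclusion rule described in the text).
    """
    changes = {}
    for student, grades in records.items():
        if grades.get("week1") is None or grades.get("week6") is None:
            continue  # excluded: did not sit both retention tests
        changes[student] = {
            "short_term": grades["week1"] - grades["midterm"],
            "long_term": grades["week6"] - grades["midterm"],
        }
    return changes


# Hypothetical records (not data from the study).
records = {
    "s1": {"midterm": 70.0, "week1": 60.0, "week6": 68.0},
    "s2": {"midterm": 80.0, "week1": None, "week6": 75.0},  # missed Week 1
    "s3": {"midterm": 65.0, "week1": 66.0, "week6": 70.0},
}
changes = retention_changes(records)
```

In practice the same computation would be run separately for each question type and for each condition (individual or collaborative), as the analysis described above requires.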

Results

All data are reported as mean ± SE or F and p values where indicated. Both courses were originally analyzed separately (data not shown). However, no significant differences were seen between the two courses for any result, and the questions for the two groups were equivalent in their Bloom level; therefore, performance and retention data from the two courses have been combined and are presented below. All analyses were done in consultation with a University of Guelph statistician.

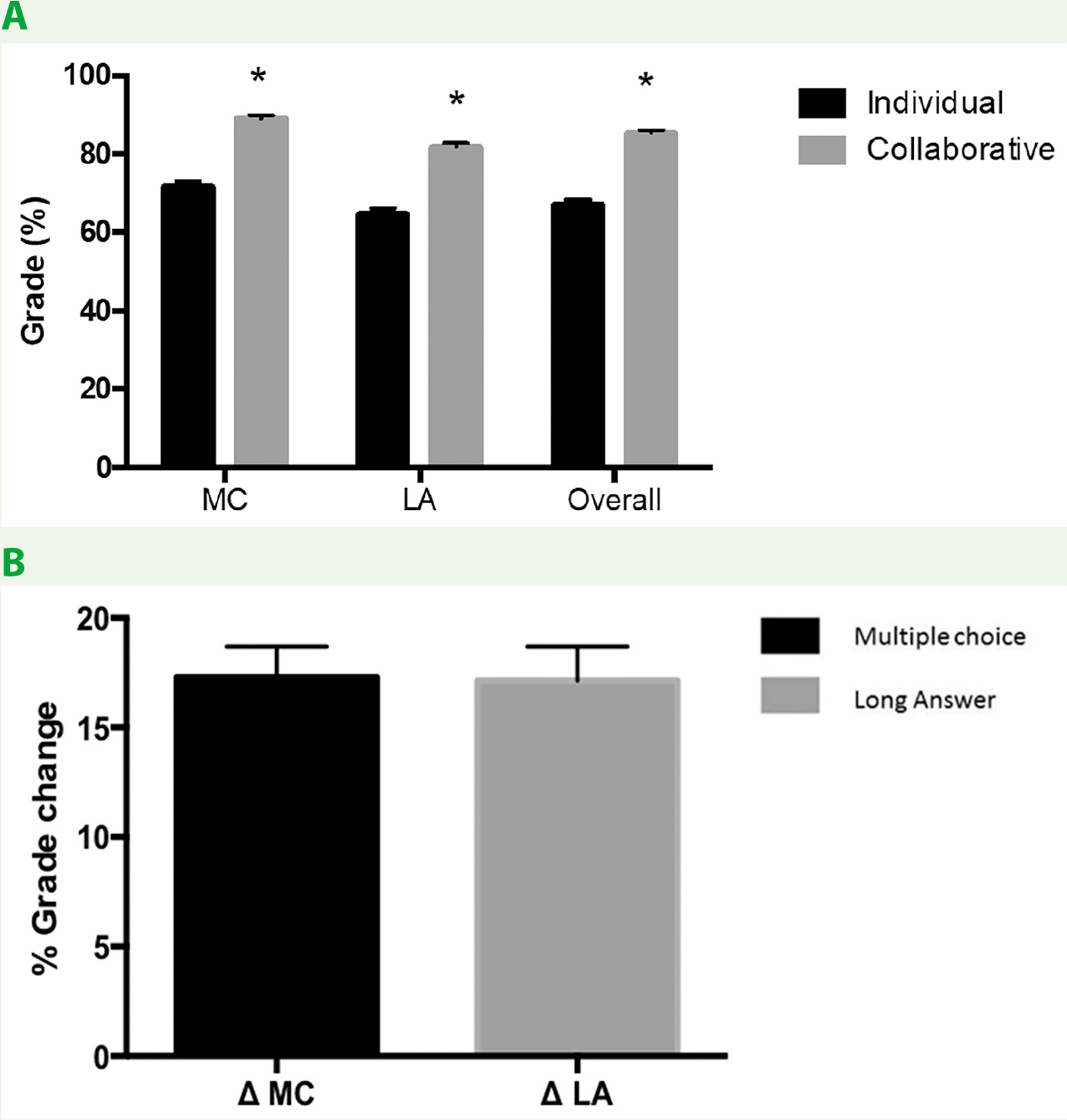

Performance

Students’ overall midterm grade improved significantly on the collaborative stage compared with their individual stage (68.2 ± 1.19% vs. 85.4 ± 0.73%, p < .0005). This improvement can be attributed to stronger performance on both the multiple-choice questions (71.8 ± 1.35% vs. 89.1 ± 0.84%, p < .0005) and long-answer questions (64.7 ± 1.54% vs. 81.8 ± 0.97%, p < .0005; Figure 2A). There was no difference in the percent change from the individual to the collaborative stage on the multiple-choice compared with long-answer questions (multiple choice 17.3 ± 1.4%, long answer 17.1 ± 1.55%; Figure 2B, 2C). Cohen’s d for the change in multiple-choice questions from individual to collaborative conditions was 1.25, and for the change in long-answer questions it was 1.09.
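As a rough plausibility check, the reported effect sizes can be approximated from the means and standard errors given above. The sketch below rests on two assumptions that the text does not confirm: that n = 150 (the 56 + 94 consenting students) applies to both stages, and that Cohen's d was standardized by the root mean square of the two standard deviations. The function name `cohens_d_from_se` is hypothetical.

```python
import math


def cohens_d_from_se(mean1, se1, mean2, se2, n):
    """Cohen's d from two means and their standard errors.

    Assumes both SEs come from the same n and uses the root mean square
    of the two SDs as the standardizer; the text does not say which
    definition the authors used.
    """
    sd1 = se1 * math.sqrt(n)   # recover each SD from its standard error
    sd2 = se2 * math.sqrt(n)
    pooled_sd = math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)
    return (mean2 - mean1) / pooled_sd


N = 150  # assumption: 56 + 94 consenting students, all present for the midterm

d_mc = cohens_d_from_se(71.8, 1.35, 89.1, 0.84, N)  # multiple choice
d_la = cohens_d_from_se(64.7, 1.54, 81.8, 0.97, N)  # long answer
```

Under these assumptions both values land within about 0.01 of the reported 1.25 and 1.09; the small discrepancies are consistent with rounding in the published means and standard errors.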

Retention

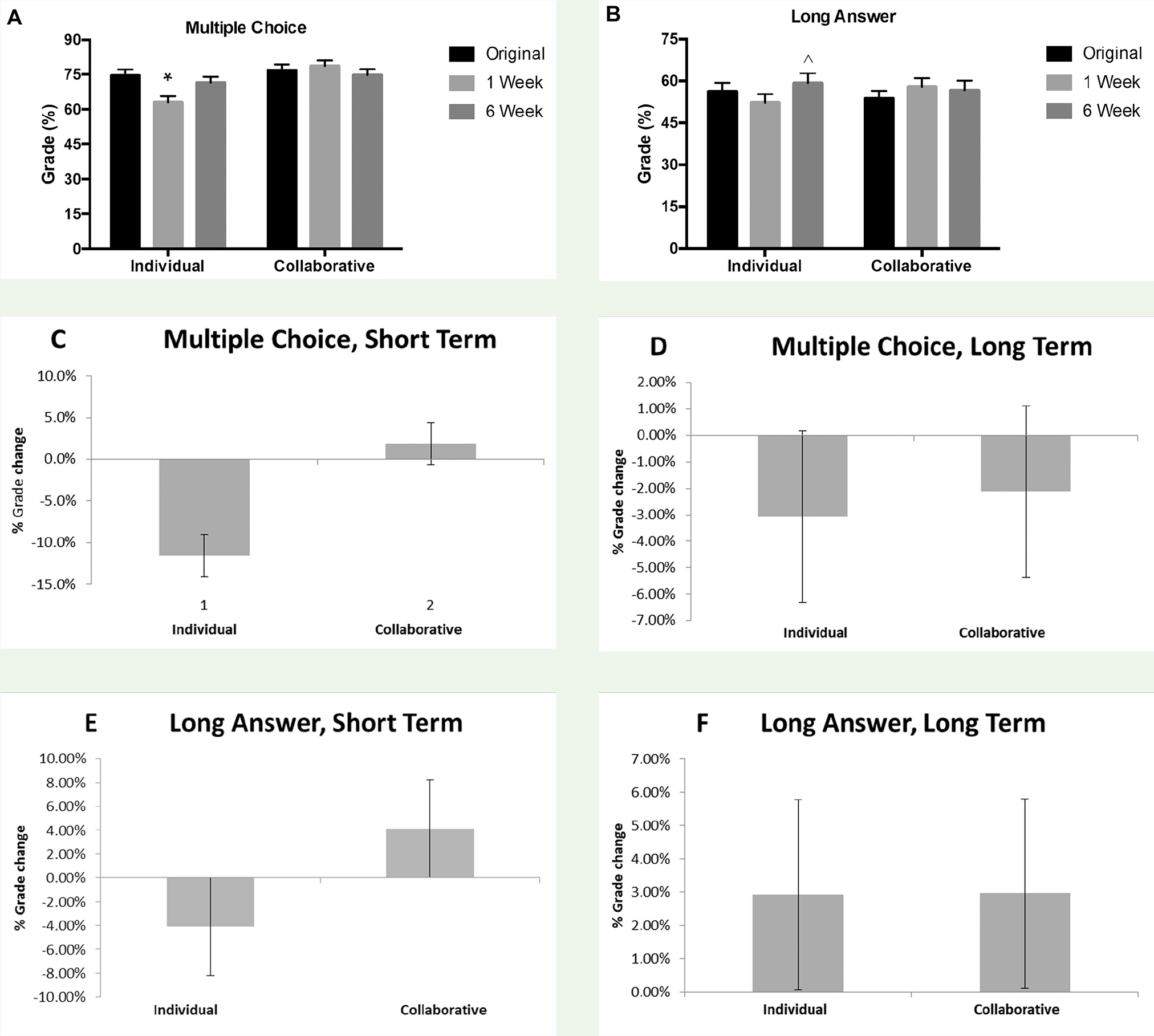

Collaborative testing in the present study maintained knowledge retention from the original midterm for both question types as measured in the short term. For both multiple-choice (Figure 3C) and long-answer (Figure 3E) questions, there was a negative grade change in questions answered individually from the original midterm to the short-term retention test administered at 1 week (multiple choice: Mean –11.53% ± 2.54%, p < .0005, d = 0.48; long answer: Mean –4.14% ± 2.43%, p = .275), indicating that there was a decay in knowledge across the short term. Looking at the overall performance, multiple-choice questions answered individually were significantly reduced from the original midterm as assessed by analysis of variance (ANOVA; Figure 3A), whereas long-answer questions answered individually were nonsignificantly reduced from the original midterm (Figure 3B). In contrast, this short-term decay of knowledge was prevented for both question types in the collaborative condition. Multiple-choice questions answered collaboratively showed approximately equal performance to the original midterm (Figure 3A, percentage grade; Figure 3C, grade change; multiple choice: Mean 1.88% ± 2.54%, p = 1.00), while long-answer questions answered collaboratively were nonsignificantly increased (Figure 3B, percentage grade; Figure 3E, grade change; long answer: Mean 4.13% ± 2.56%, p = .333).

In contrast to the short-term benefits that were observed with collaborative testing, the learning gains for long-term retention were less apparent. We did not observe a significant change from the original midterm exam to the long-term retention test at 6 weeks for either the multiple-choice (Figure 3A) or long-answer (Figure 3B) questions either for questions answered individually or collaboratively. There was a slight nonsignificant decrease in grade from the original midterm for multiple-choice questions answered both individually and collaboratively in the long term (Figure 3D; individually: Mean –3.06% ± 3.24%, p = 1.0; collaboratively: Mean –2.12% ± 2.64%, p = 1.0), whereas there was a slight nonsignificant increase in grade for long-answer questions answered both individually and collaboratively in the long term (Figure 3F; individually: Mean 2.89% ± 3.04%, p = 1.0; collaboratively: Mean 2.97% ± 2.84%, p = .899). Therefore, following the initial decay in knowledge observed in the short term (1 week postmidterm) for both multiple-choice and long-answer questions answered individually (but not collaboratively), knowledge actually improved for both question types under both conditions, although the only significant differences were between long-answer questions at Week 1 (short term) and Week 6 (long term) in the individual condition and between multiple-choice questions at Week 1 (short term) and Week 6 (long term) in the individual condition. In other words, it can be said that knowledge improved between the short-term and long-term retention tests for both question types answered individually, whereas knowledge was maintained between the short-term and long-term retention tests for both question types answered collaboratively. 
As will be discussed later in more detail, it is important to note that long-term retention was measured after students partook in a comprehensive, instructor-led review of the midterm exam in class, which we believe may have influenced the measurement of long-term retention independently of collaborative testing.

Discussion

This study aimed to build on previous research to determine the effects of two-stage testing on long-answer in comparison to multiple-choice questions. Furthermore, this study examined whether two-stage testing improves short-term and long-term retention of material and whether there was a difference in retention between multiple-choice and long-answer questions. The main findings of this study are: (a) two-stage testing significantly improved performance on both multiple-choice and long-answer questions, with no differences in the magnitude of improvement between question types; (b) two-stage testing prevented a short-term drop in retention with multiple-choice questions; and (c) two-stage testing prevented a long-term drop in retention with multiple-choice questions, although we believe that the long-term results may have been influenced by other variables such as an in-class midterm review. It is worth noting that we chose to observe the collaborative stage under a self-selected group condition to allow students to feel more comfortable within their groups. These informal groups worked together for only a brief period during only one encounter. To our knowledge, there is no literature arguing that self-selected grouping confounds a study where interactions happen during one instance only (Brame & Biel, 2015).

It has repeatedly been noted in previous literature that collaborative testing can significantly improve student performance on multiple-choice questions, and the results of this study are consistent with those findings (Bloom, 2009; Cortright et al., 2003; Gilley & Clarkston, 2014; Meseke et al., 2010). A degree of improvement of 17.3% was seen in multiple-choice questions as a result of collaborative testing, which is consistent with previous studies that noted improvements between 10% (Leight et al., 2012) and 18% (Cortright et al., 2003) on tests that used multiple-choice questions.

We also investigated the effect of two-stage testing on long-answer question performance, and to our knowledge, demonstrated for the first time that collaborative testing also significantly improves performance on this question type. The improvement in long-answer question performance with collaborative testing was comparable to the improvement in multiple-choice questions. This is an important finding, because as previously noted, long-answer questions may be more conducive to the assessment of higher order thinking skills in comparison to multiple-choice questions (Crowe et al., 2008). Therefore, the results from this study show that instructors can effectively incorporate question types other than multiple-choice into collaborative testing, allowing them to experience the benefits of collaborative testing while assessing a wider range of thinking skills.

Although previous studies have examined the effect of collaborative testing on retention, the time frame used varies between studies. Although Gilley and Clarkston (2014) assessed retention over a very short period of only 3 days, Bloom (2009), Cortright et al. (2003), and Leight et al. (2012) examined retention after 3 to 4 weeks. In the present study, we defined short-term retention as 1 week following two-stage testing, and long-term retention as 6 weeks following two-stage testing. In the short term, there was a negative grade change in questions answered individually from the original midterm to the short-term retention test administered at 1 week, although the change for long-answer questions was nonsignificant. This is indicative of decay in knowledge in the week following the original midterm exam. This short-term decay in knowledge was prevented for both multiple-choice and long-answer questions answered collaboratively, with multiple-choice questions showing equivalent performance to the original midterm and long-answer questions showing a nonsignificant improvement. Therefore, it can be concluded that collaborative testing as used in the present study improved retention of knowledge in the short term for both multiple-choice and long-answer questions.

Although we also observed similar benefits to knowledge retention for both question types in the long term, the influence of collaborative testing on long-term retention is less clear, as improvements were also seen for questions answered only individually. The use of two-stage testing prevented both a short- and long-term decay of knowledge across time as demonstrated by the findings in the collaborative condition, but we also observed an unexpected increase in knowledge for questions answered only individually from 1 week to 6 weeks that could not be attributed to two-stage testing. Although it is uncertain why the long-term improvement in questions answered individually was observed, the most probable hypothesis is that knowledge was increased following an instructor-led midterm review that occurred immediately after completion of the short-term retention test. In each class, the instructor handed back the individual exams and gave students several minutes to review these on their own. Then the instructor facilitated a comprehensive review of the entire exam, going through each question and making sure students were aware of the correct answers. For questions that were answered correctly by a large majority of the class, this may have been a quick answer check, but for questions with poorer performance or clear evidence of misconceptions, more discussion and clarification took place, with both students and instructor often providing feedback. In fact, this large class review of the entire two-stage exam may have mirrored the collaborative experience of the two-stage exam, as during this session the instructor and class discussed answers and common misconceptions, and collaboratively came up with the correct answers. Alternatively, students may have gotten better at test taking, or perhaps the concepts tested on the retention test were reinforced throughout the last 6 weeks of class. 
Interestingly, however, no additional knowledge gains were seen in the collaborative group, which suggests that the most likely explanation for this finding is the comparability between the collaborative nature of the midterm review and the collaborative stage of the two-stage exam. However, this theory would be difficult to validate experimentally in educational research, as it is unethical to give two groups differential treatment that is likely to influence summative course performance. Therefore, it remains unclear whether long-term retention is increased with collaborative testing independently of a comprehensive exam review. These findings are still relevant as they suggest that if instructors do not have the time or means to conduct a two-stage exam, reviewing an assessment in class could be just as beneficial to long-term retention of material.

The retention benefits associated with two-stage testing in the present study are consistent with the findings of Gilley and Clarkston (2014), Bloom (2009), and Cortright et al. (2003) and are in contrast with the findings of Leight et al. (2012), although methodological differences make it difficult to draw direct comparisons between studies. For example, both Leight et al. (2012) and Cortright et al. (2003) tested retention using similar questions on subsequent exams, in contrast to the present study that used identical exam questions on a targeted retention test. Because of its experimental nature, it is important to note that the retention tests used in the present study were unannounced and not for credit, which suggests that students would not have prepared or reviewed for the tests in any way and would not necessarily have had a very high level of motivation to perform well. In fact, based on motivation alone, we would expect to see a lower performance on the retention tests compared with a graded exam, which adds credibility to our findings and suggests that students were putting forth a good effort and were taking them seriously. Although Gilley and Clarkston (2014) used a similar retention test model to the present study, they measured retention within just 3 days following the two-stage exam, which shows only very short-term effects. We did not observe a repeated testing effect because all subjects in the study were tested the same number of times (under different conditions—individual or collaborative), yet the results reflected normal distributions, not skewed ones.

From a theoretical perspective, it makes sense that two-stage exams would improve student learning. The format of these exams mirrors several well-known psychological effects. The testing effect is a phenomenon where repeated testing of subjects increases their performance on subsequent exams (McDaniel, Anderson, Derbish, & Morrisette, 2007). Indeed, the crossover design of this experiment was developed to attempt to isolate the testing effect from any effects caused by two-stage exams. Active engagement with course material is another important feature of two-stage exams and also improves learning (Freeman et al., 2014). Students in these exams are forced to articulate and explain their understanding of the material to peers. Observations of the exams show that the vast majority of students participate in group discussions. Timely feedback on performance has long been held up as a best practice in education and many other human endeavors (see Anderson, Corbett, Koedinger, & Pelletier, 1995, or Chickering & Gamson, 1987). Two-stage exams provide very good feedback much more quickly than most other exams; such feedback becomes perfect if the exam uses scratch cards such as IF-AT cards provided by Epstein ().

This study has strengths and limitations that should be considered. Strengths include the use of two classes that used identical protocols, allowing for a larger, and thus more representative, sample size to be used in analyses. The two classes were compared to ensure that no statistical differences existed in performance between the groups before they were combined. As well, consideration of Bloom’s taxonomy in the development of collaborative test versions ensured a comparable cognitive challenge between individual and collaborative groups. Limitations include the use of data from students enrolled in one of two courses, both of which are part of a single-degree program, which limits generalizability across disciplines and levels of undergraduate and graduate education. The study is also limited by the inclusion of a comprehensive midterm review, which may have confounded the ability to obtain true long-term retention results. Future research should seek to explore differences in collaborative testing outcomes between different disciplines and programs of study in a broader context and to clarify long-term retention effects across time with minimization of confounding variables that may influence knowledge accretion.

Conclusion

Collaborative testing has been shown to be a useful assessment strategy with strong pedagogical benefits in a variety of disciplines (Bloom, 2009; Cortright et al., 2003; Gilley & Clarkston, 2014). This study confirmed previous research that demonstrated better performance by students on multiple-choice questions when they worked together and showed that performance on long-answer questions is similarly improved when two-stage testing is used. As well, this study showed that collaborative testing maintains short-term and long-term retention of material, although there is a lack of clarity regarding the true effect of collaborative testing due to the inclusion of an in-class comprehensive midterm exam review. Collaborative testing provides considerable benefits to both students and instructors and can be easily implemented into a variety of classrooms and disciplines to enhance the learning experience.