Interdisciplinary Ideas

What biases are in my internet searches?

By Raja Ridgway

Bringing other subjects into the classroom

During my first year of teaching, I decided to have my eighth-grade students use the internet to do some basic research about roller coasters for an energy transfer project. I knew students had to be careful when considering information on the internet, and we had discussed criteria for determining whether a website was credible. Recent research (e.g., Manjoo 2018; Noble 2018), however, has since made clear that I wasn't doing enough to educate my students about the search engines they use.

As educators, we are taught (and learn through experience) that information presented on the internet is subject to a number of biases. National standards across many content areas emphasize the need for students to be aware of biases, including their impact on accuracy and credibility. We often consider potential biases when reviewing particular websites that students are accessing, but not necessarily when considering the tools that students are using to find that information. Given the frequency with which our students use search engines to inform both their academic and personal lives, it is time that we teach them about the biases that exist within the algorithms that power those search engines.

Algorithmic biases

An algorithm is “a step-by-step process [used] to complete a task” (K–12 Computer Science Framework 2019). Algorithms are central to almost every aspect of computing and technology (Garcia 2016) and are both built and refined using data sets (Kirkpatrick 2016). Algorithmic biases develop when the data and decisions being used to build the algorithm reflect the biases of the developers (Bhargava 2018). These algorithmic biases can have relatively innocuous effects, such as presenting inaccurate weather data, or truly horrific effects, such as predicting crime based on race (Garcia 2016). Algorithmic biases that exist within search engines can also have a dramatic effect on how stereotypes are perpetuated (Noble 2018).
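To make the idea of biased data producing a biased algorithm concrete, the short Python sketch below ranks a set of hypothetical image results purely by how often past users clicked them. This is a deliberately simplified assumption, not how Google or any real search engine ranks images: the ranking rule never looks at gender, yet because the invented click history favors images of men, the top results contain no women even though half of the candidate images depict women. The names `ImageResult`, `rank_by_clicks`, and `share_of_women` are hypothetical and exist only for this illustration.

```python
# A toy "search ranking" algorithm (hypothetical, for illustration only).
# It sorts candidate images by past clicks. The rule never looks at gender,
# but its output mirrors whatever skew exists in the click history.
from dataclasses import dataclass


@dataclass
class ImageResult:
    title: str
    depicts_woman: bool
    past_clicks: int  # invented engagement data that "trains" the ranking


def rank_by_clicks(results: list[ImageResult]) -> list[ImageResult]:
    """Return results ordered from most to least clicked."""
    return sorted(results, key=lambda r: r.past_clicks, reverse=True)


def share_of_women(results: list[ImageResult], top_n: int) -> float:
    """Fraction of the first top_n results that depict a woman."""
    top = results[:top_n]
    return sum(r.depicts_woman for r in top) / len(top)


# Invented candidate pool: half the images depict women,
# but the (invented) click history favors images of men.
candidates = [
    ImageResult("man in lab coat A", False, 900),
    ImageResult("man in lab coat B", False, 850),
    ImageResult("man with chalkboard", False, 800),
    ImageResult("man with microscope", False, 750),
    ImageResult("woman at field station", True, 300),
    ImageResult("woman at telescope", True, 250),
    ImageResult("woman writing code", True, 200),
    ImageResult("woman with microscope", True, 150),
]

ranked = rank_by_clicks(candidates)
print(share_of_women(candidates, len(candidates)))  # 0.5 of the whole pool
print(share_of_women(ranked, 4))                    # 0.0 of the top four results
```

The point for students is that nothing in `rank_by_clicks` mentions gender; the skew comes entirely from the data the rule was given.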

Perpetuating stereotypes of scientists

There is a significant body of research documenting the stereotypes that students believe about scientists (e.g., Chambers 1983; Kelly 2018). The Draw-a-Scientist Test (DAST) was developed by David Chambers in 1983 and has been used many times since to demonstrate that students often picture scientists as older white men. Additionally, students consistently picture scientists’ work in limited ways, often imagining isolated individuals in lab coats mixing chemicals. Such depictions have changed somewhat over time, but the majority of students both in the United States and in other countries continue to hold narrow views of scientists and their work (Christidou, Bonoti, and Kontopoulou 2016). Students tend to have similar perceptions of computer scientists, who are often depicted as white men who wear glasses and work on computers (Pantic et al. 2018).

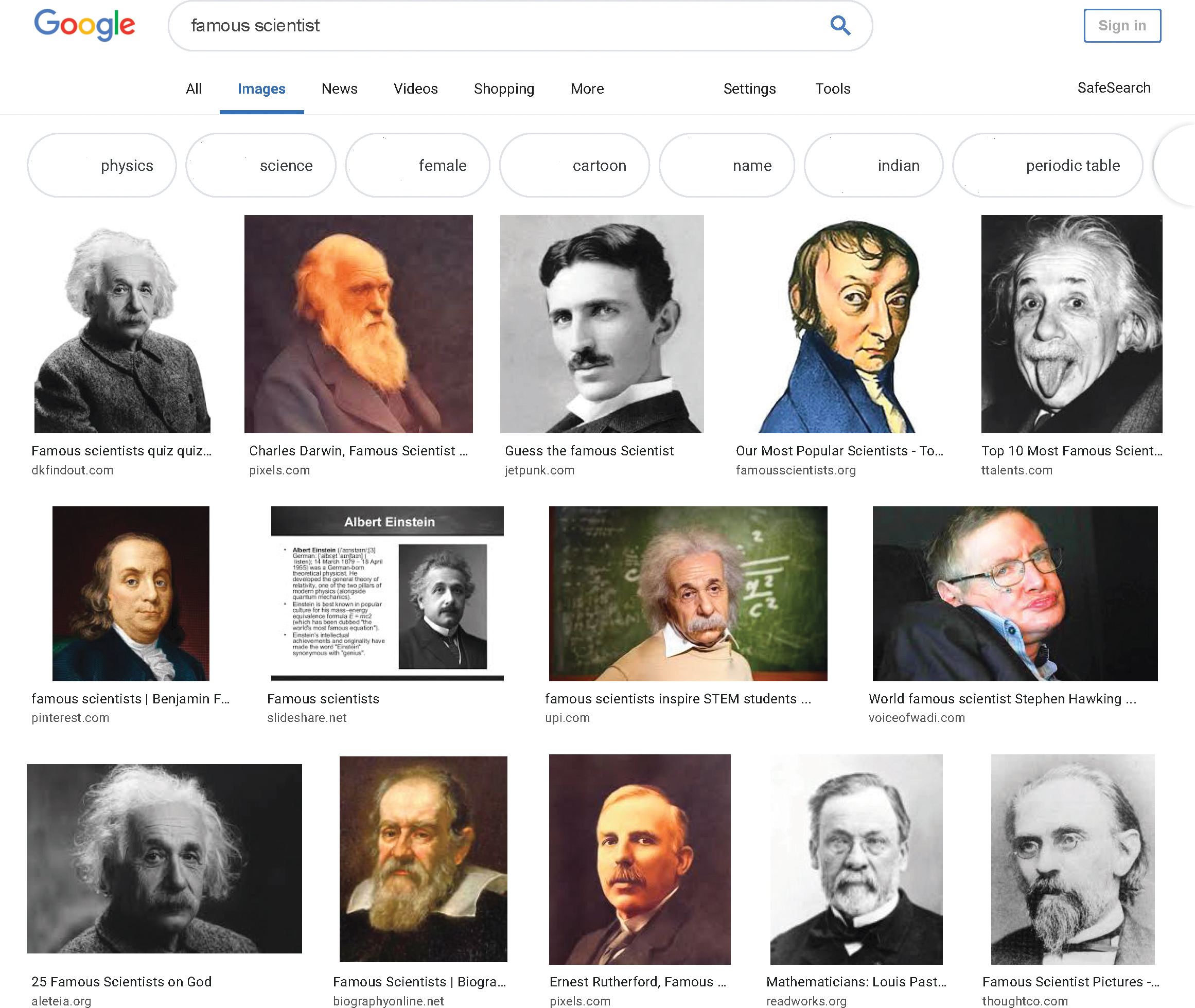

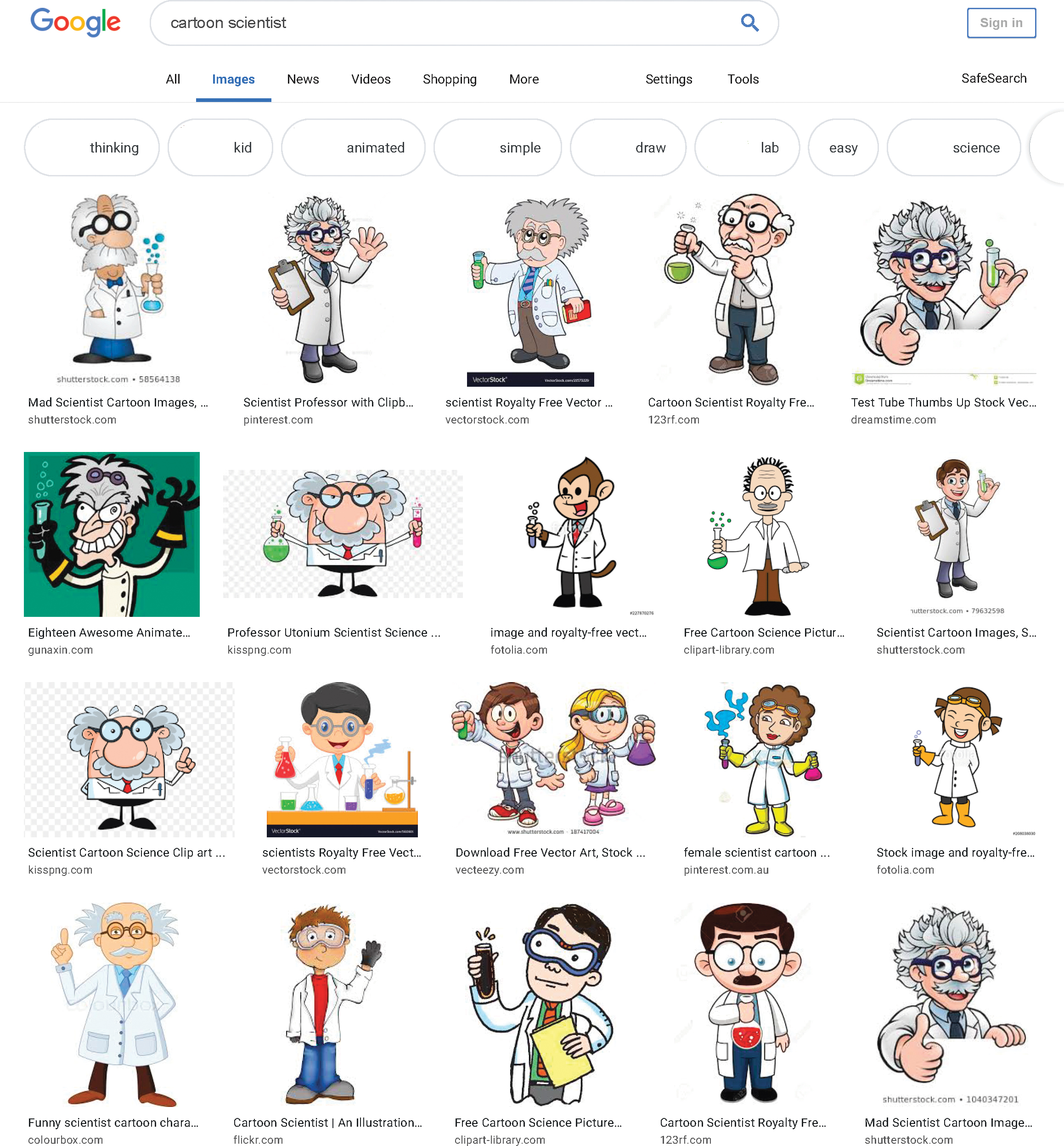

When students use a search engine to find images of scientists for projects, they are likely to see images that confirm and perpetuate these stereotypes. For example, compare the images returned when Google Image Search is used for "scientist" (Figure 1), "famous scientist" (Figure 2), and "cartoon scientist" (Figure 3). Although students see some diversity in race and gender in Figure 1, this diversity quickly disappears in Figures 2 and 3. In Figure 2, only one of the first 19 scientists is a woman (although depictions of Albert Einstein appear six times). In Figure 3, only two of the first 20 images appear to depict women. In fact, a user must scroll all the way to the thirteenth image in Figure 3 to find a woman scientist, even though a cartoon monkey dressed as a scientist already appears as the eighth image.
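Students can turn observations like these into numbers. The Python sketch below converts the counts reported above into percentages and adds a helper for tallying a new search. The coding scheme ("W" for woman, "M" for man, "O" for other or unclear) and the sample tally are hypothetical; they are not data from the figures.

```python
from typing import Optional


def percent(part: int, whole: int) -> float:
    """Share of `part` in `whole`, as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


def first_position(codes: list[str], target: str) -> Optional[int]:
    """1-based position of the first image coded `target`, or None if absent."""
    for position, code in enumerate(codes, start=1):
        if code == target:
            return position
    return None


# Counts reported in the text.
print(percent(1, 19))  # 5.3  (Figure 2: one woman among the first 19 scientists)
print(percent(2, 20))  # 10.0 (Figure 3: two women among the first 20 images)

# Hypothetical student tally for a new search, coded image by image in order.
my_tally = ["M", "M", "O", "M", "W", "M", "M", "W"]
print(first_position(my_tally, "W"))                # 5
print(percent(my_tally.count("W"), len(my_tally)))  # 25.0
```

Reporting both the overall share and the position of the first woman captures two different things the text points out: how rare women are in the results and how far down a user must look to find one.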

Counteracting algorithmic bias

There is work being done to counter algorithmic bias in internet searches, including efforts to diversify the perspectives within companies that develop the algorithms (Garcia 2016) and to make the algorithms themselves more transparent (Kirkpatrick 2016). Given the widespread use of algorithms and the inherent biases that have permeated the data sets used to train those algorithms, however, it is essential that we as educators support our students in recognizing the biases they see when they are searching the internet.

There are a number of ways to help students push back against stereotypes of scientists, such as the match-up activity, in which students match individuals' pictures to their professions (Dyehouse 2010), and opportunities to interact with diverse groups of scientists through roundtables and field trips (Kelly 2018). We also need to educate students about how the internet works. Making ourselves and our students aware of the biases that exist on the internet and within search results is the first step (Baeza-Yates 2018), and we can do so without becoming experts in how search algorithms work (see "Counteracting Algorithmic Bias in Action" for a sample mini-lesson agenda). Just as we continually prompt students to think about the biases and credibility of websites, we can also ask them to question the biases underlying their search results.

Conclusion

Integrating computer science principles and concepts into your science classroom can happen in many different ways. Supporting your students in building their understanding of the internet, including how search engines work and the biases associated with their underlying algorithms, is one way to intentionally integrate computer science. Just like us, our students use these tools to answer questions every day, and they deserve to understand how those tools work. To be prepared for the 21st century, students must be able to think critically about the results they get when they search the internet.

How else are you integrating computer science into your middle school science classroom? Do you have a great resource or experience to share? Let me know via e-mail!