
Self-Regulated Learning Strategies for the Introductory Physics Course With Minimal Instructional Time Required

Journal of College Science Teaching—May/June 2022 (Volume 51, Issue 5)

By Stephanie Toro

Self-regulated learning (SRL) is the metacognitive aspect of learning that goes beyond learning content and skills. With SRL, students are aware of their content understanding and learning progress and use advanced thinking skills to create goals and improve their academic achievement. In this action research, SRL strategies were integrated into the instruction of an Introduction to Physics I university course so that students could better understand their learning progress and development throughout the course. Some of the strategies to develop SRL skills included diagnostic tests with group review, exam wrappers, and metacognition checks, as well as providing structure for office hours and learning plans for students. These strategies not only taught students SRL skills but also shifted the focus of learning to a more individualized perspective that emphasized growth mindset processes rather than attention to having the right answers. As a result, with the implementation of these simple strategies, students’ performance and self-efficacy improved, they earned higher average scores on tests and exams, and their attendance increased significantly throughout the semester.

Self-regulated learning (SRL) is the metacognitive aspect of learning that goes beyond the learning of content and skills. With SRL, students are cognizant of their understanding of content and their learning progress, as well as capable of using advanced thinking skills to create goals and enact learning strategies to achieve outcomes for a specific learning context and environment, all while maintaining motivation and engagement in the learning process. In other words, SRL means knowing how to learn for highly contextualized and individualized situations and being able to modify studying and learning plans based on reflections on one’s progress. All paradigms of SRL, regardless of the educational researcher, have three common phases: planning, monitoring, and evaluating. SRL improves academic performance and can close the achievement gap because the difference between academic success and failure often is not a student’s cognitive ability, but the SRL skills that provide the metacognitive aspect of learning.

Numerous studies have shown that SRL, which includes the knowledge of how to learn, is correlated with higher academic achievement (Pintrich & DeGroot, 1990; Zimmerman, 1990, 2008). Particularly in middle school education, SRL development has been shown to be an indicator of success in school because explicit teaching of SRL led to increases in motivation and academic achievement (Cleary & Zimmerman, 2004). By looking for the differences between high-achieving students and non-achieving students, some researchers have found there was a significant difference in the SRL knowledge and skills between the two groups of students (Borkowski & Thorpe, 1994; Zimmerman et al., 2002). In a study that assigned students to receive an SRL intervention related to a math course, those in the experimental group receiving the SRL intervention had a 25% higher pass rate on a national gateway math exam than students in the control group (without the treatment; Zimmerman et al., 2011). Additionally, researchers found that the experimental group had high self-efficacy beliefs before solving a problem and were able to evaluate their own performance after solving problems. As important as SRL is to academic success, it should be acknowledged that SRL is not connected with a student’s intelligence level. All students are capable of using SRL so they can become effective and efficient in their study strategies (Zimmerman, 2002). SRL development has been shown to be an indicator of students’ academic achievement regardless of their mental abilities (Pape et al., 2002). Because SRL is a “developable aptitude,” Winne (1996, p. 330) advocates that all students should receive SRL training due to a need for a tool kit of strategies.

SRL is an informal process that is based on the individual and his or her previous experiences. Because of the individualized nature of SRL, it is challenging for students to develop these skills in the formal education setting of the classroom. Learning SRL strategies requires specificity. Strategies should be presented to students within the appropriate context and based on how they learn and what they are learning (Schunk & Ertmer, 2005). Students also need to learn when to use those specific strategies. Even though students learn some of these concepts in a formal classroom setting, learning is contextual and involves background knowledge and experiences of the students, so the learning experience is unique to the individual. At any given time in the classroom, a teacher must present several strategies and methods for all of the different student learning experiences. Students need to be taught not only SRL strategies but also how to adapt the strategies to new learning situations. Unfortunately, students do not always learn how to transfer strategies to new contexts (Newman, 2002; Winne, 1996). Research has shown that when teachers promote SRL in their classrooms, students become more independent and motivated learners who use learning strategies strategically (Paris & Paris, 2001; Perry et al., 2002). Teachers can integrate SRL into their classrooms by engaging students in complex tasks, giving them academic choices, teaching them domain and strategic knowledge, challenging students appropriately, and giving opportunities for students to evaluate themselves and their peers (Paris & Paris, 2001; Perry et al., 2002). Students in classes that engaged in a full intervention of SRL strategies every day, rather than a partial intervention in which strategies are emphasized only prior to a test or exam, had significant increases in classroom participation, homework completion, and test scores (Andrzejewski et al., 2016). In the same study, of all the students with academic gains in all four of their course subjects, more than half were students of teachers who had embraced a full intervention of SRL strategies. Almost all of the students with academic losses throughout the study were in classes that did not fully adopt SRL interventions.

In the university setting, many professors falsely assume their students know how to study because they finished high school. College students are not always taught how to study and learn at the college level. In fact, between 65% and 80% of college students answered “no” to the question “Do you study the way you do because somebody taught you to study that way?” (Kornell & Bjork, 2007; Hartwig & Dunlosky, 2012). Students do not make decisions based on controlled experiments, but rather based on whether a strategy worked well enough for the desired outcome. Students often select strategies based on what feels best, favoring methods that give a sense of high fluency or fast response time, that is, the perception that information is easy to recall quickly. Students do not learn best practices by the “trials and errors of living and learning” (Bjork & Benjamin, 2011). Students often make study decisions that contradict the empirical results. They choose methods that do not involve active engagement; for instance, 67% of students choose to only reread as a study method (Kornell & Bjork, 2007; Kornell & Son, 2009). Students believe rereading is a superior study strategy despite the low learning and retention that occur with this method (McCabe, 2011; Dunlosky et al., 2013; Fritz et al., 2000; Rawson & Kintsch, 2005). They do not choose more effective strategies; as one example, only 11% of University of Washington college students reported practicing retrieval as a study strategy (Karpicke, 2009). Only 18% of students in another study reported that they believe self-assessment leads to learning; students underuse self-assessment as a learning tool (Kornell & Bjork, 2007; Kornell & Son, 2009). Students not only fail to make decisions based on best practices when choosing specific strategies or methods for studying but also do not use best practices when deciding how to study, the amount of time spent studying, how much content they cover at a time, and when they study. Students tend to prefer studying in large single chunks of time (massing) rather than small chunks spaced over several weeks (spacing). Across multiple experiments by Kornell (2009), 90% of the college student participants had better performance after spacing than after massing practice. However, 72% of the participants reported that they felt massing was more effective than spacing. Much can be gained by adopting an SRL approach and integrating SRL strategies into our teaching.

Pedagogical methodology

In collaboration with a professor teaching a calculus-based Introductory Physics II course for science majors, we designed a plan to integrate SRL strategies throughout the semester. For this particular class, we determined that SRL strategies were needed due to the assessment and grading structure of the course, which was standardized among as many as eight different professors in a given semester; the course included only two major tests and a final exam, but no other forms of smaller assessments. Thus, this class had limited opportunities for students to receive feedback on their learning, and assessments were high stakes. The collaboration was part of the physics professor’s participation in a 1-year reflective teaching program for STEM professors. The professor had an instructional goal to include SRL strategies so students could better understand their learning progress in the course and reflect on their development, making changes as needed to improve. The professor adopted diagnostic tests with group review, exam wrappers, and metacognition check-ins, as well as providing structure for office hours and learning plans with her students.

Diagnostic test with group review

According to the current structure of the course, students would not receive any feedback until they were one third of the way through the semester, on an assessment that represented 30% of their final grade. The professor felt that by then it was often too late for students to receive feedback and guidance on their study strategies, as it is difficult for students to recover from a poor performance. Thus, the professor designed the complementary problem sessions, facilitated by graduate teaching assistants, to include many diagnostic opportunities for students. The professor carefully planned the sessions to align with the content, skills, and difficulty level of the course.

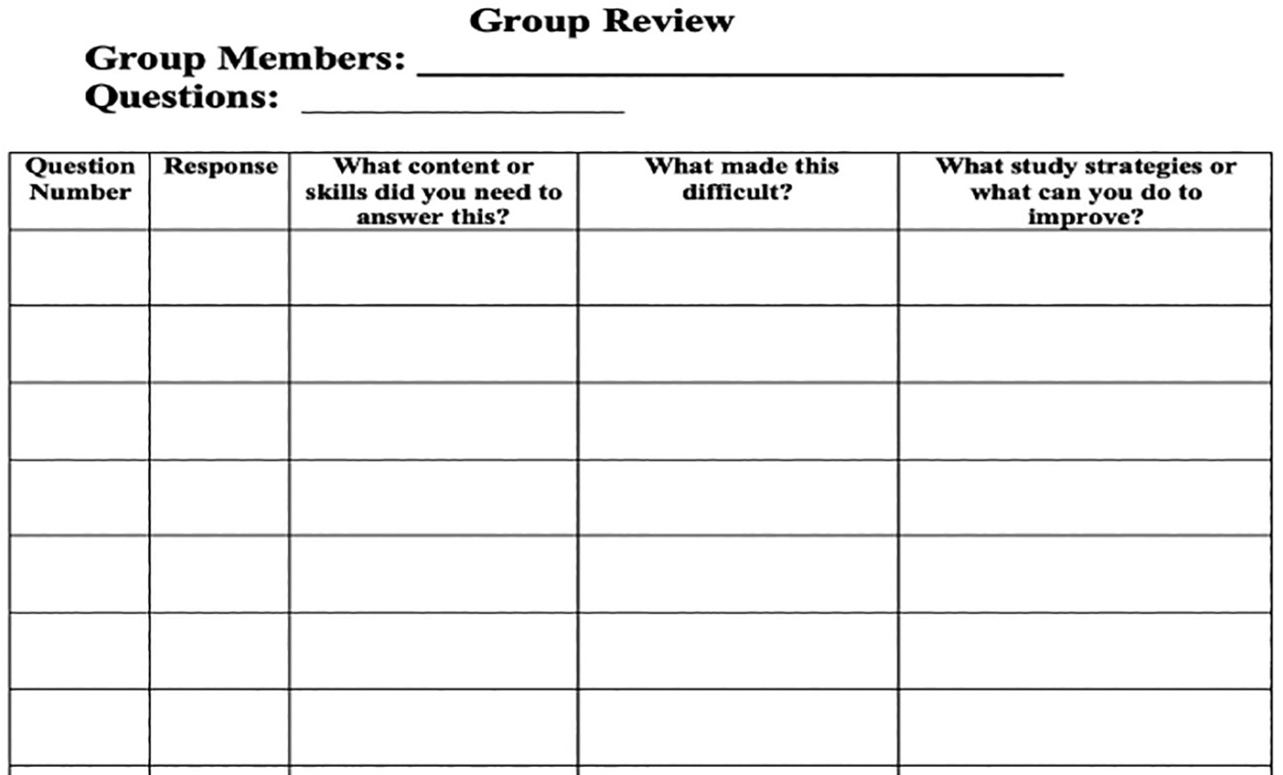

Students completed problem sets on their own. During the complementary session, students worked in groups to review the problems without being provided correct answers; they were only encouraged to work as a group to evaluate the problems. The emphasis was not on correct answers. Students used a table (see Figure 1) that required them to focus on the metacognitive aspects of problem solving. Students identified the content skills they needed to answer the questions and determined which factors made this problem difficult to solve. They discussed study strategies for improving their problem-solving in the future. This last component is critical, as much research has shown that students are more likely to listen to their peers about how to study rather than to an expert. The professor provided the graduate teaching assistants with potential study strategies to help facilitate the conversation (see Figure 2 for a sample resource). Groups held a debrief in which students shared the main ideas of their group discussion. Again, the emphasis was on the process of solving the problems and the skills to study and practice, not on finding the correct answers.

Figure 1. Group review worksheet used with diagnostic problems.

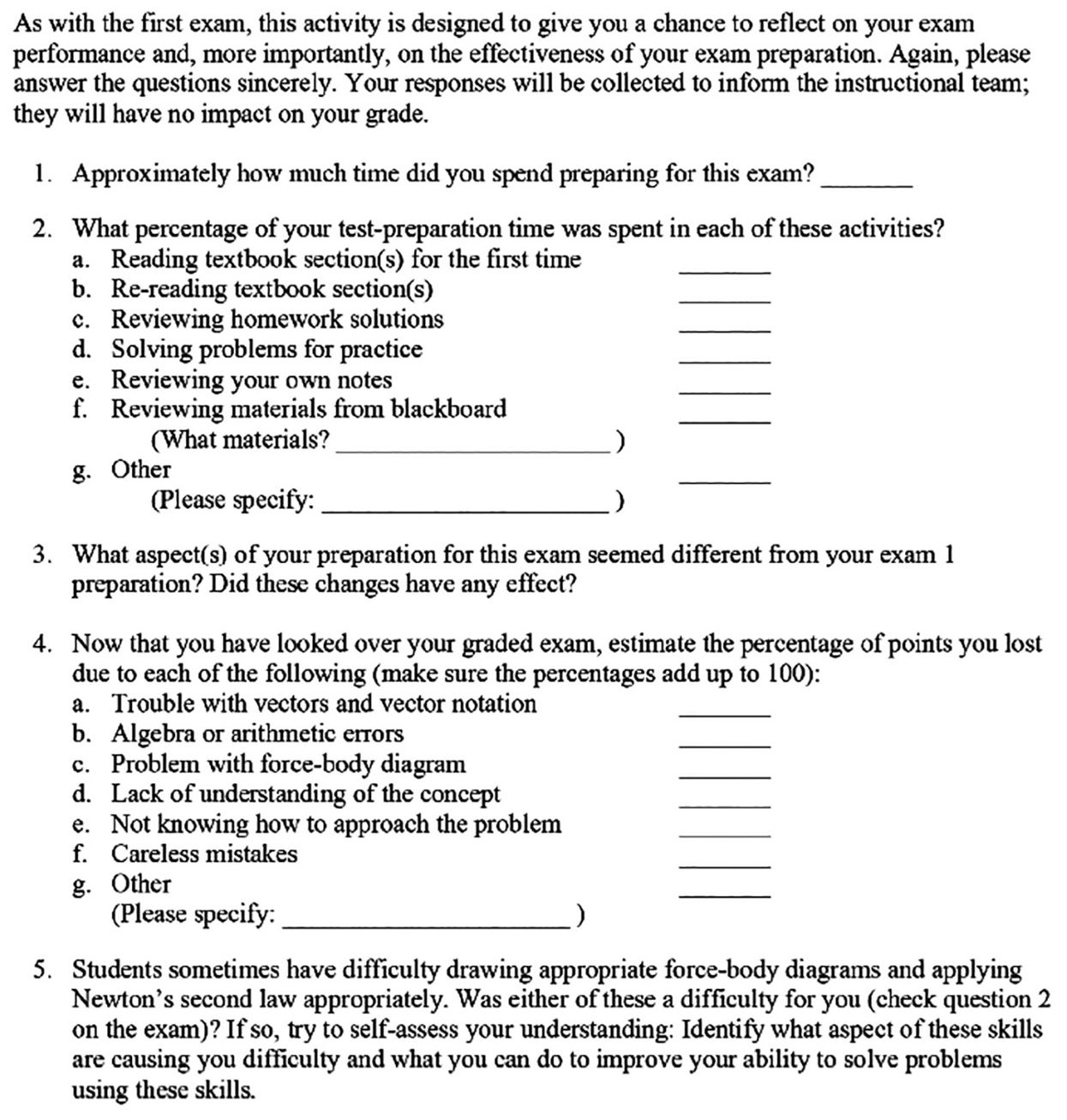

Figure 2. Example of a study strategy resource shared with students.

Prior to each of the two tests during the semester, students were encouraged to review their diagnostic problems and create study plans. They were asked to review what skills and content they needed to demonstrate mastery, as well as to identify the specific study strategies needed. The ultimate goal of this strategy was to encourage students to be metacognitive about their problem-solving abilities and to be active learners, as well as to help them monitor and evaluate their performance by having opportunities to make adjustments and apply new study strategies based on feedback from classmates.

Exam wrapper

After each of the two major tests, the professor wanted to do more than the traditional “review the test” during a class session. She observed that students focused on finding the correct answers but often failed to understand how or why they made mistakes. Furthermore, students did not reflect on how to improve in these areas of weakness and continued to make the same mistakes throughout the semester. The professor wanted a formalized structure to help students reflect in a meaningful manner, so she used an exam wrapper after each test (as shown in Figure 3).

Figure 3. Example of an exam wrapper used in an introductory physics course.

An exam wrapper is a strategy for helping students review their tests and evaluate their performance beyond the actual grade they receive. It requires students to reflect on their performance and identify the mistakes they made and the reasons for these mistakes. The questions help students identify three kinds of problems: gaps in their theoretical content knowledge, practical problem-solving skills, or ability to draw vector diagrams; test-taking errors such as misreading the problem or not identifying the givens or what to solve for; and careless errors such as performing calculations incorrectly or copying numbers incorrectly from the problem. Students are then asked to think about strategies for immediate and sustained improvement. In this course, students had to identify strategies they could implement that week, as well as what they could do differently to prepare for the next test.

Metacognition check-ins

The professor wanted to do more than just have students reflect individually on their performance before the test (using the diagnostic problems in their complementary sessions) and after the test (using the exam wrappers). She wanted to provide individualized support to students so they could develop their own learning plans. Prior to this semester, few students had taken advantage of the professor’s office hours, and when they did attend, they often were focused on grades. The professor used a four-question metacognitive check-in to encourage students to attend office hours.

After each test, students answered four simple questions:

1. In what areas or aspects of this class am I doing well? (positive reinforcement)

2. In what areas or aspects of this class do I need to improve? (identifying growth)

3. What actions can I take to improve on the areas I mentioned in response to Question 2? (accountability)

4. How can my professor or graduate teaching assistant support me? (empowerment)

The first question helps students focus on the positive and acknowledge the good habits and study skills they currently have. These positives can be as simple as always attending class on time or completing all of the assignments. The second question helps the student establish the growth mindset that we can always improve and get better; the area for improvement is personal and self-directed, so the student is responsible for identifying the growth that is most important to them. The third question reminds students that they are accountable for their actions and encourages them to reflect on what is within their control to improve. The final question empowers students to seek help from others and requires them to think specifically about ways they can use both their professor and the graduate teaching assistant as allies in their learning process.

The professor then encouraged all students to schedule a meeting with her 1 week after they completed the metacognitive check-in. Students were told to bring their responses to the four questions for discussion. The professor also told students she would help each of them create a personalized study plan to help them improve their performance on the next test. Of the 77 students in the course, almost all of them scheduled and attended at least one session with the professor during the semester. This was a first, as she rarely had students come to office hours before.

Having a metacognitive check-in as the focal point of the meeting cultivates positive conversations between professors and students for several reasons. First, it reduces the anxiety students may experience when talking with professors. For many students, attending office hours or even engaging in a one-on-one conversation with a professor is intimidating. They may be nervous about what to say or what to ask. Having the metacognitive check-in allows the student to know in advance the topics of the conversation. In fact, if they are very nervous, they do not have to initiate the conversation; the professor can begin by reviewing the document and then ease the student into the conversation with a positive perspective. Another reason students may not attend office hours is that they do not know what to ask or talk about during those sessions. The metacognitive check-in provides a structure for the conversation. We often forget that students are never taught how to use office hours for their benefit. The final reason is that students may feel they are personally being judged during office hours. Since the focus is on the document, the professor can use language that directs attention to the responses. For example, the professor can say, “It says here on the check-in that …” This reduces the focus on the student and directs the attention to a “third party,” the document itself. Regardless of the reason a student may be nervous about meeting with the professor, this strategy can help facilitate conversation.

Impacts

This collaboration was not initially intended to be an action research project by the professor, so Institutional Review Board and ethics approvals were not obtained to conduct a full analysis. I did want to share some of the observations and changes that occurred during the semester in which these strategies were adopted. From a quantitative perspective, the grades on the high-stakes assessments improved. The university does not return tests to students, and professors typically reuse tests each semester. Thus, the students received a test with the same questions as tests from previous semesters. For this particular professor, the average score for the first test was 3.03 out of 5.0 for all semesters prior to integrating SRL strategies. During the semester in which she used SRL strategies, the average test grade was 4.04 out of 5.0. Even without performing a statistical analysis, the gain of about one point, to an average above 4.0, indicates that these strategies had an impact.
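
The size of this change can be made concrete with a little arithmetic. The following is a minimal sketch in Python that uses only the two averages reported above; the function name `summarize_gain` and the variable names are illustrative assumptions, not part of the course materials. Because the text reports no sample sizes or score distributions, the sketch only describes the change and does not test its statistical significance.

```python
# Average first-test scores reported in the text, on a 5-point scale.
SCALE_MAX = 5.0
before = 3.03  # average across all semesters prior to the SRL strategies
after = 4.04   # average in the semester that used the SRL strategies


def summarize_gain(before: float, after: float, scale_max: float) -> dict:
    """Describe a change in average score in absolute and relative terms.

    Hypothetical helper for illustration; it is purely descriptive and
    makes no claim about statistical significance.
    """
    return {
        # Difference in points on the grading scale.
        "absolute_gain": round(after - before, 2),
        # Gain as a percentage of the earlier average.
        "relative_gain_pct": round(100 * (after - before) / before, 1),
        # Each average expressed as a percentage of the maximum score.
        "before_pct_of_scale": round(100 * before / scale_max, 1),
        "after_pct_of_scale": round(100 * after / scale_max, 1),
    }


summary = summarize_gain(before, after, SCALE_MAX)
print(summary)
```

Under these assumptions, the average rose by about one point, roughly a one-third relative increase, from about 61% to about 81% of the maximum score.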

From a qualitative perspective, it was perceived that student morale was higher than during previous semesters. Normally after the first test, attendance steadily declines until the last day of classes for the semester. Students stop attending because they do not see the value of class sessions. One scheduled observation of the course, which took place during the 11th week of the 16-week course, had an attendance rate of approximately 90%, which is impressive given the usual decline in attendance and the fact that this class started at 6:30 a.m. on a Friday. The professor had greater attendance during office hours, with almost all students attending office hours at least twice during the semester. Student participation during class was much more active as well. The professor perceived less stress and anxiety from the students. One student even came up to the professor after one of the tests to thank her because the student felt it was a great test that allowed them to demonstrate learning easily and with no stress.

Conclusion

It is important to think about the hidden curriculum and opportunities for explicit instruction regarding study strategies and being a reflective learner at the university level. With less structure at this level, there are many opportunities to guide students to consider the metacognitive aspects of their learning. Many professors feel instructional time is a barrier to explicit instruction of SRL. However, all of these strategies occurred outside of lecture hours, during complementary sessions, students’ individual time, or office hours. The professor only needed to provide brief reminders during class. These strategies not only taught students SRL skills but also changed the focus of learning in the course to a more individualized perspective that emphasized processes with a growth mindset, rather than focusing specifically on finding the correct answers. Students’ performance and self-efficacy improved with the implementation of these simple strategies.

Stephanie Toro (theteacherstoolbox501c3@gmail.com) is the director, educational researcher, and teacher mentor for the Teacher’s Toolbox.
