Research and Teaching

Less Text, More Learning

A Modest Instructional Strategy That Supports Language-Learning Science Students

Journal of College Science Teaching—September/October 2020 (Volume 50, Issue 1)

By Benjamin Wiggins, Hannah Jordt, and Kerri Wingert

English language learners are increasingly entering classrooms using student-centered instruction that places a greater emphasis on spoken language. While active learning is beneficial for a range of student outcomes, these practices force language learners to carry a heavy cognitive load in a complex and challenging environment. This experiment varied the complexity of the learning experience across a large, multi-section active classroom by presenting different versions of an in-class worksheet to each section. This experiment was controlled for instructor, topic, content, and environment, and treatment groups varied only in lexical density and amount of language used on a worksheet. This modest variation in instruction corresponded to significantly improved learning, as demonstrated on a pre- and posttest, for international students, who represent a conservative proxy group for language learners. These data imply that the effectiveness of active learning strategies can be enriched for English language learners by designing learning materials that are simplified in language.

Introductory science, technology, engineering, and mathematics (STEM) courses in undergraduate coursework are often considered problematic gate-keeping mechanisms for historically underrepresented, capable students as they attempt to pursue postsecondary science learning and careers. This loss of capable scientists is deeply problematic both in terms of social inequities and also for the scientific enterprise; diverse teams perform better across a wide range of human activity (Hunt et al., 2015, Levine et al., 2014, Perryman et al., 2016). Aspects of scientific instruction that induce or perpetuate disparities among student groups, whether at the large institutional level or the second-by-second instructional scale, are likely to result in the overall loss of human scientific capacity. “Active learning” emphasizes collaborative, problem-based learning environments (e.g., Prince, 2004) and these strategies are argued to build more inclusive classrooms. In science education, active learning often means classrooms are less teacher-directed and there is more space for students to make sense of their learning through group work, sense-making discourse, and more open-ended problem-solving time (Bang et al., 2016).

One particularly vulnerable group of college students is non-native English speakers. The characteristics of this population vary among U.S. institutions, but the population is likely to continue to grow rapidly (Institute of International Education, 2017a). In English-only undergraduate institutions, these students can be at a disadvantage when it comes to accessing content in English-language STEM courses because they are still gaining facility in the language in which the course is taught (Rosebery, 2008; Stoddard et al., 2002; Buxton & Lee, 2014; Jantzen, 2008). These impacts are likely exacerbated in time-intensive, lecture-focused, large introductory classrooms where grades are primarily attained via high-stakes exams. These courses often form the first large gatekeeping barrier to college persistence and retention (Setati et al., 2002; Nutta et al., 2011; McNeil & Valenzuela, 2001; Tsui et al., 2002).

Active learning and related instructional methods are hopeful models for more equitable postsecondary science learning; they have been shown to better support learning for underrepresented students in a wide range of studies (Haak et al., 2011; Eddy & Hogan, 2014). These methods generally decrease the speaking time of the single instructor, increase student engagement with course material in class, and require students to collaboratively build understanding. Active learning methods improve learning overall (Freeman et al., 2014), but the differences in efficacy for different postsecondary students are only starting to be understood. Kim et al. (2002) found that students of different sociocultural backgrounds learn differently based on the mode of instructional practice, and that this impact is present even in the children of immigrants who were born and raised in a primarily English-speaking country. Other studies show improved benefits of active learning for students of color, women in science, and first-generation college students, although these studies focus on active learning broadly and not on specific types of interventions (Freeman et al., 2014; Eddy et al., 2014; Eddy et al., 2015).

As nonlecture methods are increasingly taken up at the postsecondary level, understanding how classroom materials interact with student language becomes increasingly important to the success of all students. This study aims specifically to understand the effects of reduced linguistic complexity in a modeling-rich task in introductory biology on the learning gains of English language learners.

Some research has been done on language learning and text complexity in science classrooms. In a recent national consensus report, science and language education scholars agreed that it is essential for educational institutions at all levels to consider issues of accessibility of their coursework for learners who are still gaining proficiency in the English language (NASEM, 2018), yet the instructional language of science has been noted as a source of difficulty for language learners because of its complex grammar and technical vocabulary. Reforms in science education and active learning both recommend, instead, focusing on building students’ conceptual understandings in science through the use of “epistemic tools” such as models and diagrams (NASEM, 2018, pp. 66–67). Moreover, the NASEM report also indicates that an overt focus on specialized vocabulary without these epistemic tools is a source of inequity (2018, p. 98). Working with epistemic tools such as conceptual and diagrammatic models is a boon to language learning as it supports language and science learning simultaneously (Lee et al., 2013), and it supports learners to build richer, more conceptually rooted understanding in science (NRC, 2012). Our study is undertaken against the background of this theoretical framework, where language learning and science learning are supported through deep engagement with conceptual models in biology. We applied this conceptual framework in our design by providing learners with epistemic tools and reducing the explicit vocabulary instruction in one task. Specifically, we sought to compare two versions of the same instructional worksheet, one with complicated language and one with simplified language, in order to better understand the ways that complex language might be supporting or hindering international students’ learning of biology content when epistemic content is included.

In this study, we tested whether reducing the complexity of written items on an in-class worksheet would have an effect on international students’ achievement on future test items related to the content of the written item, with linguistic complexity being quantified by lexical density (Fang & Schleppegrell, 2008). Not all international students are non-native English speakers or would consider themselves as having low levels of English proficiency. However, because international students represent a high degree of diversity in linguistic repertoires, we consider this category of students to be our best available, though imperfect, proxy for students who are non-native English speakers. We hypothesized that reducing the linguistic complexity of an in-class activity worksheet would increase the achievement of this group of students for whom English is likely not their native language.

Experimental design

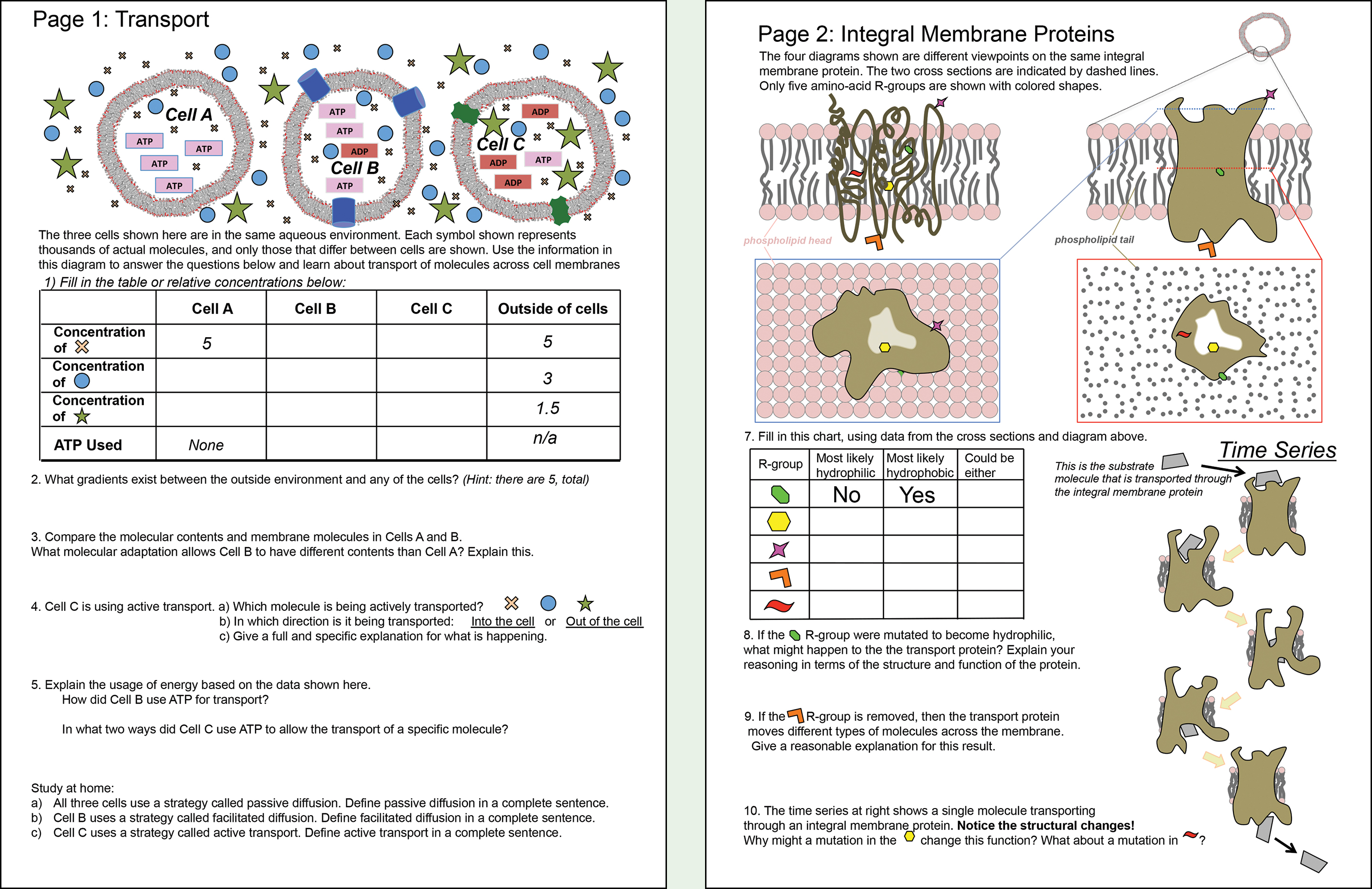

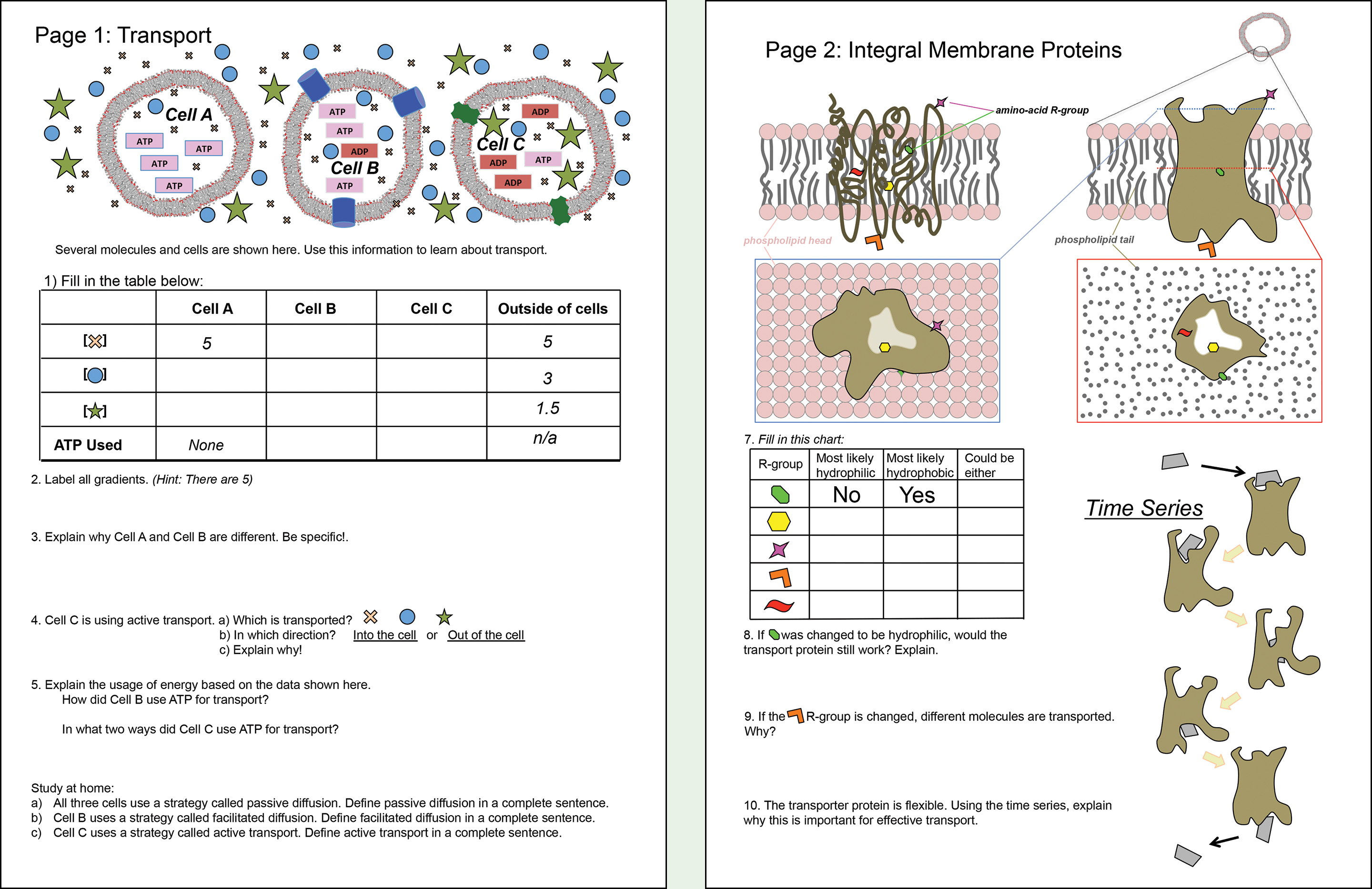

The treatments in this experiment were two versions of an in-class activity worksheet (see Figures 1A and B). Both versions covered the same material, used the same visual diagrams, had the same flow of topics, and had been previously tested in focus groups and wider classroom use before this experiment. The “high-complexity reading” version of the worksheet (Figure 1A) had complex descriptions throughout the two-page document, while the “low-complexity reading” version of the worksheet (Figure 1B) had the overall reading load decreased while maintaining the same meaning. The lexical density of each version of the worksheet is calculated in Table 1.

Figure 1A. “High-complexity” version of the in-class worksheet. Students engage in sense-making around membrane transport and integral membrane proteins as part of an introductory college science course.

Figure 1B. “Low-complexity” version of the in-class worksheet. Note that scientific models and data are identical to the high-complexity version shown in Figure 1A, and changes are limited to simplified phrasing in the text.

Table 1. Lexical density of two instructional worksheets.

Specialized vocabulary and lexical density are particular features of scientific texts and can be used to understand instructional materials (e.g., Fang & Schleppegrell, 2008). Lexical density is calculated here as the specialized vocabulary divided by the total number of words (Eggins, 1994). The low-complexity worksheet (7% of words were high-complexity words) had a lexical density of less than half of the high-complexity worksheet (17% of words were high-complexity).

As an example, the third question in the high-complexity version of the worksheet directed students to do the following: “Compare the molecular contents and membrane molecules in cells A and B. What molecular adaptation allows cell B to have different contents than cell A? Explain.” The corresponding wording in the low-complexity version of the worksheet said, instead: “Explain why cell A and cell B are different. Be specific!”

This adjustment followed the same process as the corresponding changes throughout the worksheet. Designers tried to capture the intent of the high-complexity version in as few words, and where possible as simple words, as could be used without losing meaning. Here several words with specific scientific definitions (molecules, adaptation, etc.) were eliminated so that the low-complexity version had fewer specifics and less technical language. While this might give students fewer details and a vaguer set of instructions, it may also have promoted a focus on the epistemic tools of science, namely the scientific representations, models, and visualizations that characterize science communication (NASEM, 2018, pp. 66–67, 124–125). In this case, these representations are the diagrams of cells and processes depicted at the top of the page.
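The lexical density calculation described above (specialized vocabulary divided by total words) can be sketched in a few lines of Python. This is a minimal illustration, not the authors' procedure: the set `HYPOTHETICAL_SPECIALIZED_TERMS` is an assumption made for this example, since the source does not list which words were counted as specialized, and the two texts are only the third worksheet question quoted above rather than the full worksheets.

```python
import re

# ASSUMPTION: this word list is hypothetical; the article does not publish
# the vocabulary that was classified as specialized.
HYPOTHETICAL_SPECIALIZED_TERMS = {"molecular", "molecules", "membrane", "adaptation"}


def lexical_density(text, specialized_terms):
    """Return specialized words divided by total words for one text."""
    words = re.findall(r"[a-z]+", text.lower())
    if not words:
        raise ValueError("text contains no words")
    specialized = sum(1 for word in words if word in specialized_terms)
    return specialized / len(words)


# Third question of each worksheet version, as quoted in the article.
high_complexity_question = (
    "Compare the molecular contents and membrane molecules in cells A and B. "
    "What molecular adaptation allows cell B to have different contents "
    "than cell A? Explain."
)
low_complexity_question = "Explain why cell A and cell B are different. Be specific!"

high_density = lexical_density(high_complexity_question, HYPOTHETICAL_SPECIALIZED_TERMS)
low_density = lexical_density(low_complexity_question, HYPOTHETICAL_SPECIALIZED_TERMS)

print(f"high-complexity question: {high_density:.3f}")
print(f"low-complexity question:  {low_density:.3f}")
```

The densities for a single question will not match the whole-worksheet figures of 17% and 7%, because those were computed over the full two-page documents and with the authors' own vocabulary classification.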

The experiment was conducted in a large undergraduate introductory course in molecular/cellular biology at an R1 institution. Students registered into one of two 50-minute lecture sections, with class sizes of 386 and 375. This experimental model controls for instructor, course level, topic, date, time (closely matched), pressure on student outcomes, and previous instruction within the course. Crucially, this large number of students allowed analysis of the smaller subpopulations about which we had hypothesized. Students were well-accustomed to active learning instructional techniques, including the use of worksheet-based activities, both in this class and in the prerequisite course. On the day of the experiment, each section worked through the activity in the context of groupwork and other active learning methods.

Based on registrar-provided, self-reported, demographic statistics, the student population in the course was estimated to be 61% female, 35% Asian American, 11% underrepresented minority students (URM) (URM includes African American, Native American, Native Hawaiian/Pacific Islander, and Hispanic), 44% Caucasian American, and 7% international students.

To assess learning on the topic material, students took online, low-stakes, multiple-choice pretests and posttests. The pretest was in the 24 hours prior to the class session and the posttest was in the 24 hours after the class. Tests were made up of identical sets of eight challenging items, which had been validated for conceptual complexity and accuracy by several faculty. The experimental day of class was the only class session on this topic. Students also answered affect items after the topical posttest items; these affect items were not analyzed for this study, but increased the length of the test. Students were only included in the analysis if they completed both the pre- and posttest. Ninety-four percent of students met this requirement, including 364 and 354 students in the classes experiencing the high- and low-complexity worksheet, respectively. We obtained data on student gender, ethnicity, international-student status, first-generation college student status (students are considered first-generation college students if neither of their parents completed a four-year college degree), and prior University of Washington grade-point average (UW GPA) from the University Registrar. Consent for the use of their data was obtained from all students in the course, and thus for all the data used in our study (University of Washington Human Subjects Division, Application #44438).

Statistical methods

To test the effect of worksheet type on our outcome variable (student posttest scores) we used proportional odds logistic regression to model the log odds of a student scoring one point higher on the posttest. This type of generalized linear model treats the outcome variable as an ordered categorical variable, and was necessary due to the distribution of posttest scores being left-skewed and tightly bounded from 0 to 8.
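To make the structure of a proportional odds model concrete, the following sketch computes the probability of each posttest score (0 to 8) from a set of cut points and a linear predictor. It assumes the common parameterization logit P(score ≤ k) = threshold_k − eta. The cut points in `HYPOTHETICAL_THRESHOLDS` are invented for illustration and are not estimates from this study.

```python
import math


def logistic(x):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-x))


def score_probabilities(thresholds, eta):
    """Return P(score = k) for k = 0..len(thresholds).

    Assumed parameterization: logit P(score <= k) = thresholds[k] - eta,
    where eta is the linear predictor (sum of coefficient * covariate).
    """
    cumulative = [logistic(t - eta) for t in thresholds] + [1.0]
    probabilities = []
    previous = 0.0
    for value in cumulative:
        probabilities.append(value - previous)
        previous = value
    return probabilities


def expected_score(probabilities):
    """Mean score implied by a probability distribution over 0..K."""
    return sum(k * p for k, p in enumerate(probabilities))


def odds_above(thresholds, eta, k):
    """Odds of scoring above k rather than at or below k."""
    at_or_below = logistic(thresholds[k] - eta)
    return (1.0 - at_or_below) / at_or_below


# ASSUMPTION: eight increasing cut points for a 0-8 test, chosen arbitrarily.
HYPOTHETICAL_THRESHOLDS = [-3.0, -2.0, -1.2, -0.5, 0.2, 1.0, 1.9, 3.0]

baseline = score_probabilities(HYPOTHETICAL_THRESHOLDS, eta=0.0)
shifted = score_probabilities(HYPOTHETICAL_THRESHOLDS, eta=1.0)

print("expected score at eta = 0:", round(expected_score(baseline), 3))
print("expected score at eta = 1:", round(expected_score(shifted), 3))
```

In this sketch a one-unit increase in the linear predictor multiplies the odds of scoring above any given cut point by the same factor, exp(1). That shared multiplier is the "proportional odds" assumption, and it is why a single odds ratio per covariate can summarize the effect across all nine score categories.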

Importantly, we added covariates to our model, which allowed us to assess which of these covariates predicted student success on the posttest and whether different subpopulations of our students responded differently to the two worksheet types.

Selecting a model: The initial covariates in our model were chosen prior to running any analyses, with no additional covariates added post hoc, and each was tested for inclusion in the model in a systematic way using backwards model selection (see below). These covariates included worksheet type (high versus low complexity), pretest scores, prior UW GPA, student gender, first-generation college student status, and student background (a categorical variable that binned students into one of four types: Caucasian-American, Asian-American, URM, or international student).

We included pretest scores to control for prior knowledge of the relevant subject area and prior UW GPA to control for a measure of typical student performance. We included gender, first-generation college student status, international student status, and ethnicity because we were interested in determining whether any one of these particular groups would respond differently to the worksheet types. To test for disproportionate effects of our treatments on these sub-populations, in our starting model we included interactions of (1) gender and worksheet type, (2) first-generation status and worksheet type, and (3) student group and worksheet type. We also included an interaction of worksheet type and prior UW GPA in order to reassure ourselves that there was not a disproportionate number of students with high UW GPAs completing any particular worksheet. The full starting model was: Posttest score ~ worksheet type + pretest score + prior UW GPA + gender + first-generation status + student group + gender × worksheet type + first-generation status × worksheet type + student group × worksheet type + prior UW GPA × worksheet type.

Determining a final model: We used backwards model selection to test which covariates should be retained in our final model. In this method, we removed covariates from our starting model in a stepwise fashion and compared the Akaike information criterion (AIC) value for each intermediate model. A term was left out of subsequent models if its removal left the AIC value lower than, or within two units of, that of the fuller model (two AIC values that differ by less than two are considered equivalent, and in such a scenario the model with fewer terms is preferred) (Burnham & Anderson, 2002). AIC quantitatively weighs the trade-off between additional parameters explaining variation in the outcome and added model complexity. In this way, AIC favors the model that explains the most variation with the fewest parameters. The model with the lowest AIC value was selected as our final model (Burnham & Anderson, 2002).
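The backwards selection rule described above can be sketched as a short loop. This is an illustrative reading of the procedure, not the authors' R code: the function `backward_select`, the term names, and the numbers in `HYPOTHETICAL_FIT_GAIN` are all assumptions, and the toy `toy_aic` function stands in for refitting a real regression model. How the exact boundary (a difference of exactly two) is handled is also an assumption. The sketch ignores interaction terms, which in practice are usually removed before the main effects they contain.

```python
from typing import Callable, FrozenSet, Iterable, Tuple

Model = FrozenSet[str]


def backward_select(
    terms: Iterable[str],
    aic_of: Callable[[Model], float],
    tolerance: float = 2.0,
) -> Tuple[Model, float]:
    """Drop terms one at a time while the reduced model's AIC is lower
    than, or within `tolerance` of, the current model's AIC."""
    current = frozenset(terms)
    current_aic = aic_of(current)
    while current:
        best = None
        for term in sorted(current):
            reduced = current - {term}
            reduced_aic = aic_of(reduced)
            # The simpler model is preferred when it is at least equivalent.
            if reduced_aic - current_aic <= tolerance:
                if best is None or reduced_aic < best[1]:
                    best = (reduced, reduced_aic)
        if best is None:
            break  # every remaining term is worth keeping
        current, current_aic = best
    return current, current_aic


# ASSUMPTION: invented reductions in -2 * log-likelihood for each term.
HYPOTHETICAL_FIT_GAIN = {
    "worksheet_type": 10.0,
    "pretest_score": 30.0,
    "prior_gpa": 40.0,
    "gender": 0.5,
    "first_generation": 1.0,
}


def toy_aic(model: Model) -> float:
    """Toy AIC = -2 log L + 2k, with an arbitrary baseline of 1000."""
    return 1000.0 - sum(HYPOTHETICAL_FIT_GAIN[t] for t in model) + 2.0 * len(model)


final_terms, final_aic = backward_select(HYPOTHETICAL_FIT_GAIN, toy_aic)
print(sorted(final_terms), final_aic)
```

With these invented numbers, the two terms that improve fit by less than their two-unit parameter penalty are dropped and the other three are retained.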

All analyses were implemented in R (R Core Team, 2018). The proportional odds logistic regression model was fit using the MASS package (Venables & Ripley, 2002).

Statistical results

Our model selection procedure indicated that the following model best predicts our data: Posttest score ~ worksheet type + pretest score + prior UW GPA + student group + student group × worksheet type.

It is useful to note that any covariates included in the model can be thought of as being controlled for when interpreting the regression coefficient of any particular covariate. For example, in the above model, the regression coefficient associated with international students should be interpreted as the effect of international student status on posttest score, controlling for worksheet type, pretest score, and prior UW GPA.

Notably, there was no difference in posttest scores between men and women or between first-generation and continuing-generation college students, as indicated by those predictors not being retained in our final model.

In contrast, after completing the high-complexity worksheet (the reference worksheet type), posttest scores of Asian American, international, and URM students differed from those of white students (the reference group) in nonsignificant but meaningful ways, as indicated by these predictors being retained in the final model. Controlling for the other covariates, Asian-American students were more likely to have a higher score than white students: The probability of getting one point higher on the posttest was 0.56. International and URM students were less likely to score as many points as white students: The probabilities of scoring an extra point on the posttest were 0.45 and 0.37 for international and URM students, respectively, compared to white students (Table 2; note that units in the table are in odds ratios, but converted to probabilities in the text for easier interpretation).
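The conversion between odds ratios and the probabilities reported in the text can be sketched as follows. The source does not state the formula it used, so this assumes the usual conversion, probability = odds ratio / (1 + odds ratio), under which an odds ratio of 1 (no effect) corresponds to a probability of 0.5. The odds ratios printed here are back-computed from probabilities quoted in the text and are not values read from Table 2.

```python
def odds_ratio_to_probability(odds_ratio):
    """Convert an odds ratio to a probability, assuming p = OR / (1 + OR)."""
    if odds_ratio < 0:
        raise ValueError("odds ratio must be non-negative")
    return odds_ratio / (1.0 + odds_ratio)


def probability_to_odds_ratio(probability):
    """Inverse conversion, assuming OR = p / (1 - p)."""
    if not 0.0 <= probability < 1.0:
        raise ValueError("probability must be in [0, 1)")
    return probability / (1.0 - probability)


# Probabilities quoted in the text; the odds ratios are derived, not sourced.
for label, probability in [
    ("Asian American", 0.56),
    ("international", 0.45),
    ("URM", 0.37),
]:
    implied = probability_to_odds_ratio(probability)
    print(f"{label}: probability {probability:.2f} implies odds ratio {implied:.2f}")
```

Under this reading, a probability above 0.5 corresponds to an odds ratio above 1 (more likely than the reference group to score an extra point), and a probability below 0.5 to an odds ratio below 1.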

Table 2. Results from proportional odds logistic regression model.

In regard to the treatment (high-complexity versus low-complexity worksheet), international students were most impacted by worksheet type, scoring significantly higher on the posttest if they completed the low-complexity worksheet. Specifically, the probability of an international student scoring one point higher on the posttest after completing the low-complexity worksheet compared to a white student scoring one point higher on the posttest after completing the high-complexity worksheet was 0.79 (Table 2). There was a positive but nonsignificant effect of completing the low-complexity worksheet for URM students and negative but nonsignificant effects of completing the low-complexity worksheet for Asian American and white students with probabilities of 0.63, 0.44, and 0.48, respectively (Table 2).

We found that students scored significantly higher on the posttest if they had a higher UW GPA or scored higher on the pretest. Specifically, the probability of scoring one point higher on the posttest was 0.69 for every additional GPA point a student has and 0.57 for every additional point a student scored on the pretest (Table 2). While these particular findings were unsurprising, it is interesting to note that completing the low-complexity worksheet increased the probability of scoring an additional point on the posttest for international students more than the average effect of a student either having a whole extra grade point (e.g., a 2.0 to a 3.0 on a 4-point scale) or of scoring one point higher on the pretest (Table 2).

Limitations of this study

Because a quantitative measure of each student’s historical language learning is unavailable, this study used international student status as a proxy for English language learner status. This proxy might miss language learning difficulty in noninternational students as well as include international students for whom English was always their primary language. The international student population at this university consists primarily of students who were raised and schooled in East Asian countries, with a smaller group of native English speakers from Canada, New Zealand, etc. Because that smaller group is unlikely to be impacted by language-focused interventions, it is likely that international status is a conservative measure for English language learning status and the true impact of this intervention may be larger. Future work to better characterize this impact will require data on student language histories.

We cannot conclude from this experiment whether amount or complexity of language was more or less crucial to the observation.

Additionally, these data were taken from a single comparison between two different class sections. While this included a large number of individual students, it is possible that subtle framing differences from the instructor may have impacted the results. It is also possible that students in one section were inherently different from students in the other section in ways that our model was not able to capture. While this analysis implies that classroom activities might be better designed to suit language-learning students, further experiments across topics and instructors will strengthen the reliability of this pilot experiment.

Discussion

The low-complexity worksheet described in this study resulted in increased demonstrated learning for international students as indicated by their scores on a posttest. Many international students are developing science understanding in a non-native language. Observing improved learning outcomes for them in this experiment is exciting in that it may speak to subtle curricular changes that may help English language learners better engage with science content within active learning classrooms.

We argue that these findings matter on two levels, of varying importance, in the pursuit of more effective science teaching in large postsecondary courses. First, we focus on the modifications to the course worksheet. Second, we highlight the importance of reflective, responsive instruction.

In this instructional intervention, we modified a worksheet on membrane transport and channel proteins to see if the complexity of written instructions was related to the learning of certain groups of students. We found that international students, the group of students with the most diversity in native languages, achieved higher scores on the posttest if they had completed the low-complexity worksheet. The magnitude of this beneficial effect was greater even than the effect of scoring an additional point (out of eight) on the pretest or having an extra point on a student’s cumulative GPA for all college courses taken up to that point. While this impact size might be expected for a larger structural change, an improved instructor, or an extremely motivated student, it is observed here only with small wording changes to a related worksheet. This suggests an opportunity to use simple adjustments to classroom design to help heal disparities in student outcomes that contribute to loss of diversity in the skilled workforce.

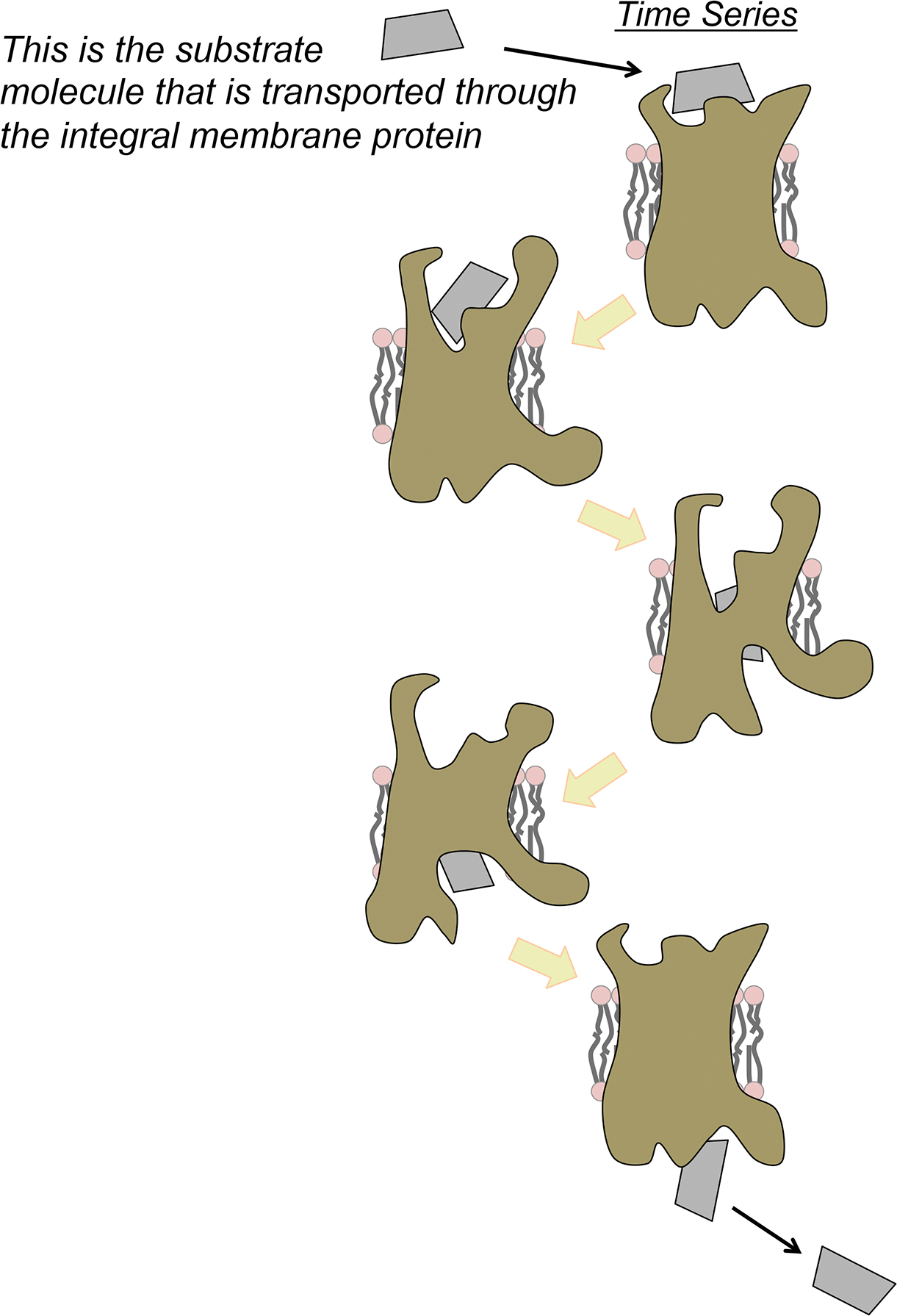

To understand what might explain such a strong difference in learning gains, we drew on learning theory in the sociocultural and cognitive traditions that shows that students gain language skills through authentic sense-making opportunities (e.g., Lightbown & Spada, 2003) and that science learning is achieved through sense-making (NASEM, 2018, p. 60). As the adage goes, “telling is not teaching,” and we designed and tested course materials that do less “telling.” On our worksheet, we removed unnecessary, dense language and focused student attention on the images of biological processes, or the epistemic tools of science communication (NASEM, 2018, p. 66). Our “simplified” language did not mean that we simplified biological concepts (see example in Figure 2). Rather, because the results indicate students did significantly better on the reduced-lexical-density worksheet, we hypothesize that the decreased lexical density may have allowed students to focus on the images of cellular transport and gain a deeper understanding through their increased attention to these images. Visual representation is an important way that communities of scientists construct and share their enterprise (Latour, 1995). Using visual representations of very small phenomena encourages students to think about mechanism, cause and effect, structure, and function in ways that are difficult to represent in text alone, but which are essential in gaining deep understanding and expertise in biology (Jee et al., 2015). Furthermore, the use of text and images is widely regarded as a way to support STEM learning for non-native English speakers (NASEM, 2018). Our findings may support these ideas; international students demonstrated improved scores when text complexity was reduced, making the focus of the worksheet the images and the processes they represent.

Figure 2. The concept of dynamic flexibility in protein structure/function is demonstrated in this cartoon model. Students use this model as data from which to infer that small and important structural differences allow transport of a substrate molecule through the otherwise-impermeable membrane. Students learn this challenging concept without explanatory text.

We focused this analysis on just one classroom activity in a very large, ongoing analysis of a biology curriculum that teaches thousands of students annually. We found that learning gains occurred for international students when we attended to something very simple, but broadly impactful: the wording in a worksheet that can be reused over many iterations of a class session for hundreds of students. While further study is warranted, we feel that this result may be generalizable to a wide variety of active learning class materials that use both written text and illustrations. The implications of this finding extend into methodology and instructional practice; learning gains improved in this experiment after such a simple change, but we would have missed this effect completely if we had not tested the data for effects related to historically underserved groups.

Thus, we emphasize a continually reflective stance for postsecondary course designers. We should be asking whether women, students of racially nondominant backgrounds, first-generation college students, and English language learners are able to achieve their highest levels, then gathering data to analyze in terms of group membership, and making responsive instructional changes. We advocate for the continued sharing and publication of effective instructional strategies that can influence postsecondary science education at scale. The goal of this reflective, iterative instructional process should be to eliminate the predictive ability of race, gender, ethnicity, nationality, first-generation college student status, and socio-economic status on any student’s science learning.
