Test Blueprints in the Science Classroom

Foundational work for formative assessment and data-based decisions

The Science Teacher—October 2019 (Volume 87, Issue 3)

By Kurtz Miller, Terri Caprio, Tonie Smarr, Kathleen Guest Bledsoe, and Katie Pettinichi

Sitting in a fall staff meeting, you lean over to tell a science teacher colleague you have settled in for the school year. You have learned your students’ names, your seating chart has changed, and your first evaluation date has been set. Your principal interrupts, “We will be walking through classrooms to check for formative assessment, differentiated instruction, and learning targets.” Your heart sinks at the thought of keeping your learning targets updated for three separate classes, not to mention the other components! There must be an easier way to keep the curriculum organized for planning, teaching, and assessment purposes…

In 2012, while teaching at the University of Dayton, I attended a short course on formative instructional practices (FIP) through Battelle for Kids, a national nonprofit organization focused upon helping school systems in 45 U.S. states. Battelle for Kids was supported by federal Race to the Top funding and collaborated with the Ohio Department of Education (ODE) to develop training modules to introduce Ohio teachers to research-based FIPs. The FIP training modules were developed based on decades of scholarship on effective teaching, including research by Robert Marzano, Arthur Costa, Bena Kallick, and dozens of others (Chappuis et al. 2012; Chappuis 2014). In the modules, the core components of FIP are described as clear learning targets, student ownership, effective feedback, and collecting evidence of student learning (Chappuis et al. 2012).

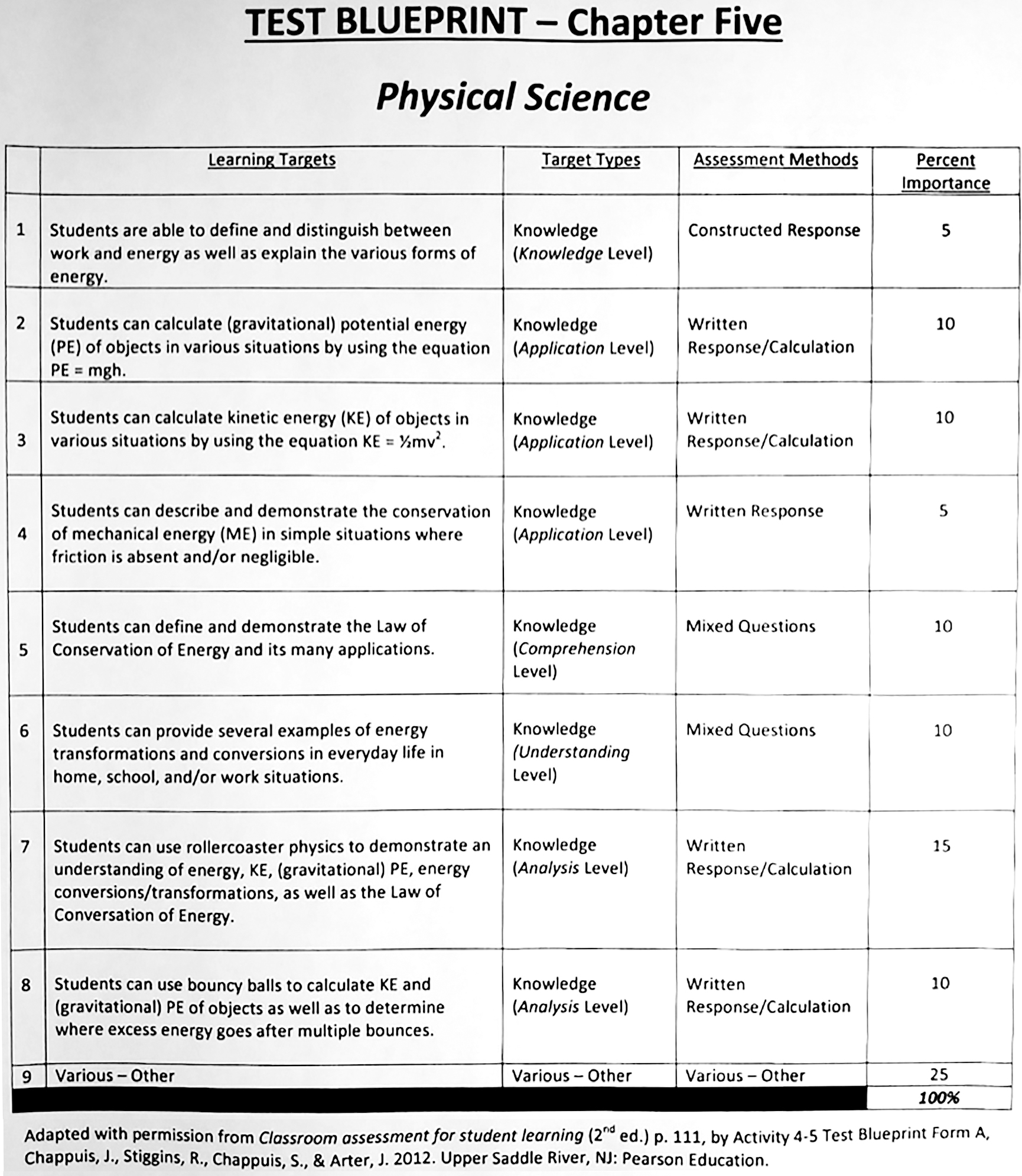

The starting point for implementing FIP in a classroom was writing what were called test blueprints, a way of organizing learning targets into a single document for instruction as well as assessment purposes. After five years out of the science classroom, I returned to teaching for the 2017–2018 school year, during which I wrote physical science and physics test blueprints prior to teaching each chapter (Figure 1). The test blueprints empowered me to share with students what they would be learning as well as provide guidance about formative and summative assessments.

Implementation

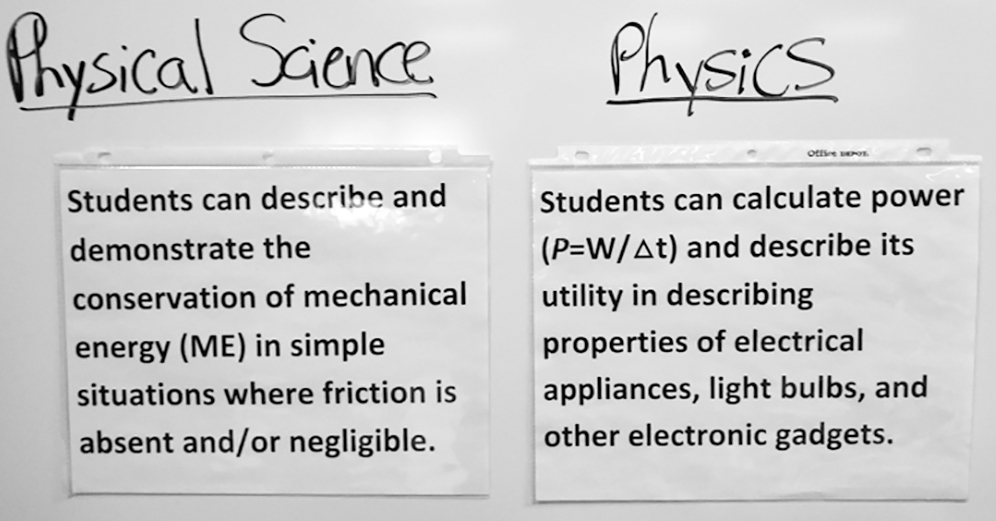

At the beginning of each chapter, students received a hard copy of the test blueprint, which included a complete list of the learning targets being taught and assessed. Before instructional activities and lab exercises, I verbally identified each learning target by pointing it out on the blueprint (Figure 1) and on the front board (Figure 2). I expected students to independently use the test blueprint to identify their weaknesses and determine how to study for assessments. Survey data collected from physical science students indicated that freshmen, who are still developing metacognition, did not make the best independent use of the test blueprints.

During the following year, the 2018–2019 school year, I attempted to teach students more explicitly how to use the blueprints by helping them reflect upon the targets. A brief survey administered to 11th- and 12th-grade physics students suggested that teachers can help students develop metacognitive strategies to reflect upon learning. Written student feedback (Figure 3) from physics students provides evidence that such documents have the potential to improve student learning. Further, survey data indicate that individual teachers, teacher teams, and administrators found multiple advantages to using test blueprints (Figure 4).

Discussion

Deconstructing standards, writing learning targets, and building test blueprints can be a sizeable investment of time, but one that may pay large dividends: being more organized and purposeful, benefiting from curricular alignment, aiding collaborative teacher discussions, having tools in place to support formative and summative assessment, and improving differentiated instruction. Whether teaching solo or working in teams, using learning targets and test blueprints can potentially be a win-win for both students and teachers, particularly when students become reflective, self-regulated learners. Learning targets and test blueprints are high-leverage, 21st-century strategies that teachers might consider implementing.
