
Using an Instructional Team During Pandemic Remote Teaching Enhanced Student Outcomes in a Large STEM Course

Journal of College Science Teaching—January/February 2022 (Volume 51, Issue 3)

By Susan D. Hester, Jordan M. Elliott, Lindsey K. Navis, L. Tori Hidalgo, Young Ae Kim, Paul Blowers, Lisa K. Elfring, Karie L. Lattimore, and Vicente Talanquer

The unplanned shift to online instruction due to the COVID-19 pandemic challenged many instructors teaching large-enrollment courses to design learning environments that actively engaged all students. We examined how one instructor used her instructional team (a group of student assistants with diverse, structured responsibilities) to adapt her large-enrollment (>500 students) introductory chemistry course to a live-remote format, and how the team’s involvement affected students’ reported experiences of online learning. We found that the instructional team’s involvement was instrumental in adapting the course to the live-remote online format. The integration of the instructional team had a significant positive impact on students’ experiences in the course, including their perceptions of social and cognitive engagement and teaching presence. Students in the section with the integrated instructional team also outperformed students in other sections of the same course on standardized course exams and final course grades. These results suggest that a structured instructional team composed of students can be a mechanism for promoting positive student experiences and learning in large-enrollment, remote STEM courses.

The unplanned pivot of large-enrollment courses to the online format due to the COVID-19 pandemic presented many instructors with a difficult challenge: How could they meaningfully engage large numbers of students and promote learning in an online format? For many instructors teaching with undergraduate-supported instructional teams (such as groups of learning assistants), these teams proved valuable in the quick transition online in spring 2020 (Emenike et al., 2020). In the ensuing semesters, with large-enrollment courses remaining online at many universities, instructors had the opportunity to deliberately adapt course structures to incorporate their instructional teams, but they also faced the challenge of establishing course cultures and norms within the online setting without the benefit of beginning the semester with students in person.

At the University of Arizona, many STEM faculty members (23 to date) using instructional teams have participated in the Instructional-Teams Project (I-TP), a National Science Foundation–funded effort that seeks to support instructors’ implementation of high-quality active-learning strategies in large-enrollment STEM courses through use of structured instructional teams. Instructional teams at many institutions rely on the participation of undergraduate learning assistants to actively engage students in course activities (e.g., Otero et al., 2006; Goertzen et al., 2011; Talbot et al., 2015; Jardine & Friedman, 2017). The I-TP builds on this model by introducing specialized roles for team members to provide targeted aid for course and classroom management (instructional manager role) and formative assessment (learning researcher role; Hester et al., 2021; Kim, Cox et al., 2019).

The I-TP model was initially developed for in-person course settings. Its benefits include smoother course operations; feedback for instructional planning and responsive teaching; and increased trust and reliance on the instructional team, leading to greater integration of the team into teaching practices (Hester et al., 2021). The model was later adapted for asynchronous online courses, where it was found to facilitate more responsive teaching and a greater sense of community (Kim, Rezende et al., 2021). With the unplanned pivot of large-enrollment courses to an online format due to the COVID-19 pandemic, we asked how a highly structured instructional team could support a large-enrollment STEM course’s adaptation to the online format and examined the impact of the team’s involvement on students’ experiences and performance in the course. We sought to answer the following research questions in a large-enrollment (more than 500 students) introductory chemistry course, taught with a large instructional team, that was adapted from an in-person to a live-remote online format:

- Research Question 1: How was the instructional team incorporated into the online course format?

- Research Question 2: How did the instructional team’s involvement impact students’ experiences?

- Research Question 3: How did the instructional team’s involvement impact students’ course performance?

Methods

Setting

The study took place at the University of Arizona, a large, public, research-intensive university in the southwest United States. We collected data in sections of the second semester of the university’s two-semester general chemistry sequence for science and engineering majors. The course is offered in multiple large-enrollment sections taught by different instructors, all of whom follow the Chemical Thinking curriculum (Talanquer & Pollard, 2010, 2017). All instructors (a) use the interactive Chemical Thinking textbook; (b) follow the same unit structure (key concepts, ideas, and skills emphasized); (c) use a standard class slide deck (which includes suggested in-class activities) as a blueprint for their own class notes; (d) assign identical weekly homework assignments; (e) administer the same (collaboratively written) unit exams and final exam on the same schedule; and (f) use the same point breakdown for exams, quizzes, homework, participation, and laboratory work. Despite these similarities, instructors have some freedom within this standardized framework. They can use the textbook in different ways—for example, by assigning specific readings or generally directing students to use it as a reference. Instructors may modify class activities, determine how class time is structured and run, and decide how class participation is evaluated. They determine the number, content, and evaluation of periodic quizzes. Finally, each instructor implements their own supporting structures, including the makeup, structure, management, and involvement of their instructional team.

Data collection and analysis

To answer our research questions, we collected data in spring 2021 in sections of the second-semester course in the general chemistry sequence. Most data were collected from the section taught by “Dr. Lock” (pseudonym). We chose this particular section because (a) prior to the move online, Dr. Lock habitually worked with an instructional team that is unique in its size, structure, and extent of involvement in the course; (b) Dr. Lock had incorporated her instructional team into the live-remote online course structure in a deliberate way; (c) roughly half of the students in the course had taken the preceding course in the live-remote format with Dr. Lock and her team in fall 2020 and roughly half had not, setting up a natural experiment; and (d) Dr. Lock was interested in collaborating in the study. Different sets of data were collected and analyzed to answer each of our research questions.

Research Question 1: How was the instructional team incorporated into the online course format?

To answer Research Question 1, we carried out an instructor interview and online course observations to build a description of course structures and team involvement. Through informal communication (email), Dr. Lock confirmed the descriptions reported here.

- Instructor interview: The first author conducted an hourlong, semistructured remote video interview with Dr. Lock, which focused on how she was structuring and incorporating her instructional team into the live-remote online course and the intentions behind her decisions. We used the interview to “sketch out” an initial understanding of course structures and the team’s involvement.

- Course observations: We observed a subset of Dr. Lock’s class meetings throughout the spring 2021 semester by either logging in to the live-remote Zoom meetings or watching the recorded class meeting videos posted for students. We also observed the course chat server (Discord) for the amount and types of activity multiple times over the 2 semesters during and outside of class meeting times and explored resources (syllabus, assignments, videos, announcements, etc.) on the course learning management system. During observations, we took notes and directly annotated the “sketch” of the course structures and team involvement, adding to and verifying description components.

Research Question 2: How did the instructional team’s involvement impact students’ experiences?

To address Research Question 2, we administered an adapted Community of Inquiry (CoI) survey (described in the following paragraph) to students in Dr. Lock’s spring 2021 section. All students in the section (N = 584) were asked to complete the survey regardless of whether they consented to participate in the study. The first question of the survey described the study and provided a link to the full Institutional Review Board–approved consent document. Students opted into or out of the study by choosing either “Yes. I consent to the use of my survey responses in the study” or “No. I do not consent to the use of my survey responses in the study.” The pre-course survey was submitted by 377 students; 558 students submitted the post-course survey. Responses from 189 students who consented to participate in the study, completed all items on both the pre- and post-course surveys, and could be matched for the pre- and post-course surveys were included in the CoI survey and free-response analyses. An additional 28 students consented to participate in the study and answered the free-response questions, despite not completing all pre- and post-course survey items. These students’ responses were included in the qualitative analysis of the free-response items, for a total of 217 responses.

The CoI survey (Arbaugh et al., 2008) is a 34-item survey that measures students’ perceptions of teaching presence (13 items), cognitive presence (12 items), and social presence (9 items) in an online course. Each item contains a statement and asks for a response on a scale of 0 (strongly disagree) to 4 (strongly agree). The survey is based on a CoI framework (Garrison, Anderson et al., 1999, 2010) initially formulated to facilitate the investigation of elements that promoted effective remote learning environments to inform and assess instructional design and practices. The CoI describes interacting component elements of such environments: teaching presence, cognitive presence, and social presence. Since its initial publication, the CoI framework has been applied extensively and is accepted as an important lens for analyzing remote learning environments: In 2018, Stenbom identified more than 100 peer-reviewed journal articles describing studies that used the CoI survey.
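To make the structure of the instrument concrete, the following is a minimal Python sketch of how one student's 34 ratings might be summarized overall and per construct. The item ordering (teaching, then social, then cognitive) and the function name `score_response` are assumptions made for illustration, and the example ratings are invented rather than taken from the study.

```python
from statistics import mean

# Assumed item layout (not stated in the article): items are ordered
# teaching presence (13), social presence (9), cognitive presence (12).
CONSTRUCT_ITEMS = {
    "teaching": range(0, 13),
    "social": range(13, 22),
    "cognitive": range(22, 34),
}


def score_response(items):
    """Return the mean rating overall and per construct for one student."""
    if len(items) != 34:
        raise ValueError("expected 34 item ratings")
    if any(r not in (0, 1, 2, 3, 4) for r in items):
        raise ValueError("ratings must be integers from 0 to 4")
    scores = {"overall": mean(items)}
    for construct, idx in CONSTRUCT_ITEMS.items():
        scores[construct] = mean(items[i] for i in idx)
    return scores


# Hypothetical student: 3 on teaching items, 2 on social items, 4 on cognitive items.
example = [3] * 13 + [2] * 9 + [4] * 12
print(score_response(example))
```

Averaging within each construct keeps the three presences on the same 0 to 4 scale even though they have different numbers of items.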

We gave adapted versions of the CoI survey to students in the first two weeks (pre-course) and last week (post-course) of the semester via the online survey tool Qualtrics. We adapted the pre-course survey to ask students to reflect on their experiences in online courses up to that point. (Courses at the university were held remotely in fall 2020, so students were expected to have had relevant experiences.) The post-course survey asked students to reflect on their experiences in the particular course. Both surveys were adapted to reference “instructor/instructional team” in place of “instructor.” Similar adaptations are commonly used and have not been found to impact the validity of the results (Stenbom, 2018). At the end of the post-course survey, we included four free-response questions. The first of these, which asked students to reflect on their learning experience in the course, was analyzed for this study. (Readers can contact the authors for the adapted versions of the surveys, including all free-response questions administered with the post-course survey.)

- Analysis of CoI survey responses: We split pre- and post-course data based on students who had taken the preceding course with Dr. Lock and her fall 2020 instructional team and students who had not. We averaged responses across four different categories of items for each student, pre- and post-course: overall (responses to all items) and each of the three constructs measured by the CoI survey (teaching presence, social presence, and cognitive presence). To compare pre- and post-course average item responses for a category within a group of students, we performed paired t-tests; to compare survey results across groups of students, we performed two-sample t-tests.

- Analysis of free-response survey question responses: We developed and applied a coding scheme (Table 1) to analyze students’ written responses to the free-response question “Compared to your other courses, how would you describe the learning experience in this course? Please highlight anything that stood out to you—what about the course affected your learning experience?” The “focus” and “team-related reasons for positive learning experiences” categories and codes emerged from the data, guided by Research Question 2. Each response was independently coded by three authors (Susan Hester, Jordan Elliott, and Lindsey Navis). We calculated Fleiss’s kappa (a measure of intercoder reliability) for the emotional valence category and for each non–mutually exclusive code (treated as a binary category); all values were between 0.82 and 1, indicating excellent agreement. In instances of disagreement, the three coders reached consensus through discussion.
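For readers unfamiliar with Fleiss’s kappa, the statistic compares observed agreement among coders with the agreement expected by chance. The sketch below is a minimal standard-library Python version under stated assumptions: the function name `fleiss_kappa` is hypothetical, the labels are invented, and it is not the authors' analysis code.

```python
def fleiss_kappa(ratings, categories):
    """Fleiss's kappa for items each rated by the same number of coders.

    ratings: list of lists; ratings[i] holds the labels given to item i.
    categories: list of all possible labels.
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if any(len(r) != n_raters for r in ratings):
        raise ValueError("every item needs the same number of ratings")

    # counts[i][j] = number of coders who assigned category j to item i
    counts = [[r.count(c) for c in categories] for r in ratings]

    # Observed agreement: mean over items of pairwise agreement among coders
    p_items = [
        (sum(n * n for n in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_observed = sum(p_items) / n_items

    # Expected agreement from the overall share of each category
    total = n_items * n_raters
    shares = [sum(row[j] for row in counts) / total for j in range(len(categories))]
    p_expected = sum(s * s for s in shares)

    if p_expected == 1:
        # Only one category was ever used, so kappa is undefined;
        # returning 1.0 is a convention chosen for this sketch.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Invented example: five responses, three coders, one binary code (1 = applied).
coded = [[1, 1, 1], [0, 0, 0], [1, 1, 0], [0, 0, 0], [1, 1, 1]]
print(round(fleiss_kappa(coded, [0, 1]), 3))
```

Treating each non-mutually exclusive code as its own binary category, as described above, means this calculation would be repeated once per code.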

Research Question 3: How did the instructional team’s involvement impact students’ course performance?

To address Research Question 3, we collected measures of course performance and academic data from students from all sections of the course in the spring 2021 semester. We collected data from a total of 887 students across all sections of the course.

- Student performance: We collected the average score on all course exams (including the four unit exams and the final exam) and the final course grade for students who completed the course.

- Academic index: We collected the academic index (a measure of students’ college readiness based on high school grade point average, class rank, and standardized ACT and SAT test scores) from the University of Arizona Analytics System, when available, for students who had completed the course.

- Analysis of student performance data: We linked students’ performance data and academic index and removed identifiers from the data prior to analysis. We calculated the average scores on all course exams and average course grades, broken down by academic index, for Dr. Lock’s section (N = 427) and all other sections of the course combined (N = 450).
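The breakdown by academic index described above amounts to a grouped average. A minimal Python sketch follows, assuming hypothetical band edges and made-up records; the article reports neither the bins used nor any student-level data, so `BANDS`, `band_for`, and `mean_by_band` are illustrative names only.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical band edges; the article does not state how the index was binned.
BANDS = [(0, 100, "low"), (100, 120, "middle"), (120, float("inf"), "high")]


def band_for(academic_index):
    """Map an academic index value to its (hypothetical) band label."""
    for low, high, label in BANDS:
        if low <= academic_index < high:
            return label
    raise ValueError(f"academic index out of range: {academic_index}")


def mean_by_band(records):
    """Average exam score per (section group, academic-index band).

    records: iterable of (section_group, academic_index, exam_average)
    tuples with identifiers already removed.
    """
    grouped = defaultdict(list)
    for group, index, exam_avg in records:
        grouped[(group, band_for(index))].append(exam_avg)
    return {key: mean(scores) for key, scores in grouped.items()}


# Invented records for illustration only (not study data).
records = [
    ("team", 95, 70.0), ("team", 98, 74.0), ("other", 96, 65.0),
    ("team", 125, 88.0), ("other", 130, 84.0), ("other", 122, 80.0),
]
for key, avg in sorted(mean_by_band(records).items()):
    print(key, avg)
```

Comparing the two section groups within each band, rather than overall, is what allows performance to be read separately from incoming preparation.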

All data collection and analyses were part of projects approved by the University of Arizona Institutional Review Board (IRB 1409498345; IRB 2101358224).

Results and Discussion

How was the instructional team incorporated into the online course format?

Our first goal was to describe how the instructional team was incorporated into the course in the live-remote online format. Central course components—three 50-minute live-remote class meetings per week, assignments, and exams—were largely determined by requirements of the university and the standardized curriculum. The way in which Dr. Lock ran the live-remote class meetings and several of the structures that she put in place to support students, however, relied on her instructional team.

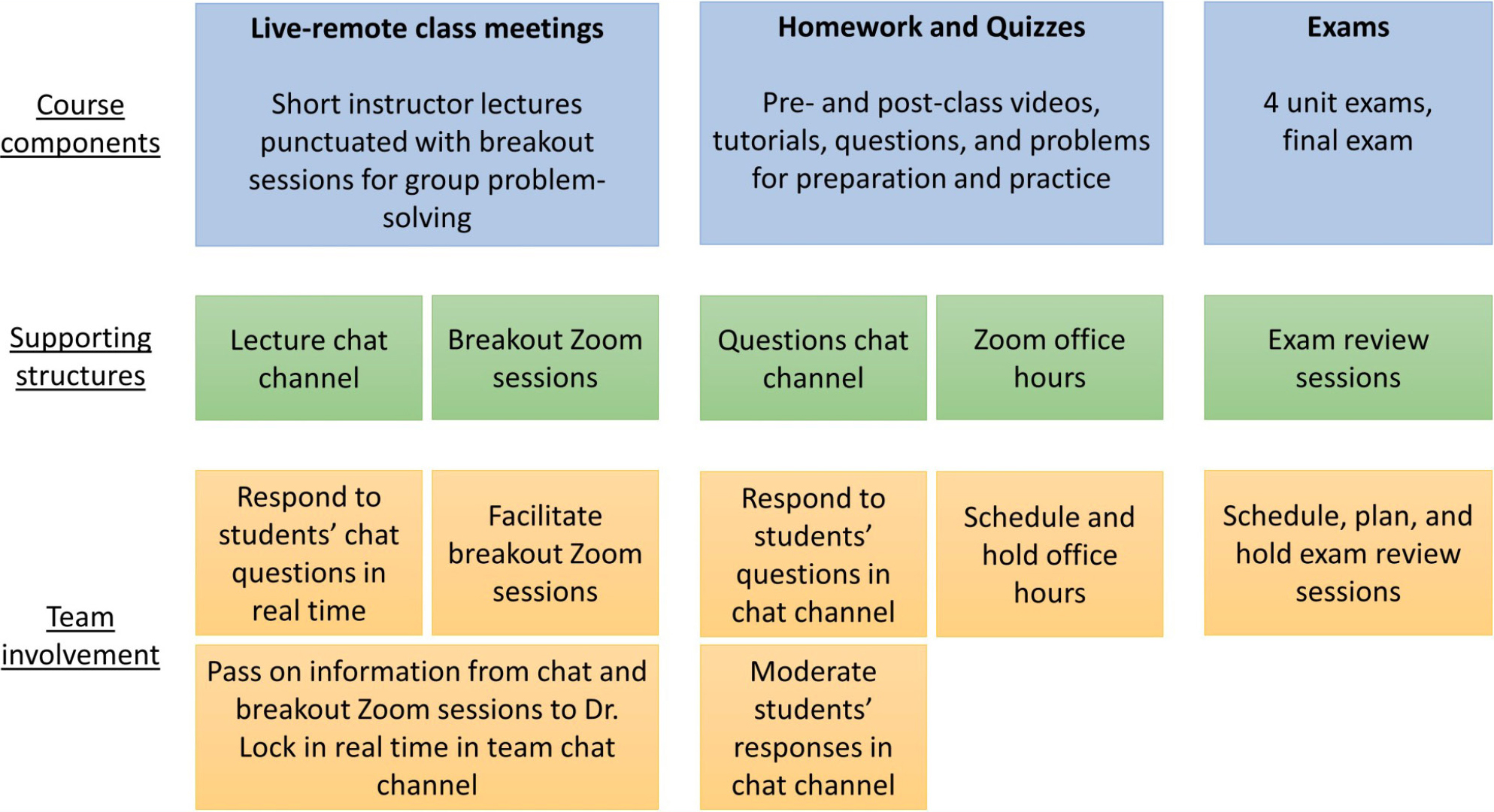

Each semester, the instructional team is made up of Dr. Lock and approximately 50 undergraduate student assistants. Instructional team members’ responsibilities are diverse and include engaging student groups in class activities and holding office hours; managing the classroom environment; collecting and communicating information to facilitate responsive instruction (e.g., sources of confusion, how students are thinking about a particular concept, or whether an activity is paced well for the students); and giving feedback on planned course structures and activities from a student perspective. In moving the course online, Dr. Lock adapted course structures and instructional team roles to the live-remote online format. She also introduced new lines of online communication to foster student-student and student-team interaction, with the goal of promoting a course community and providing an accessible way for students to ask questions and receive feedback. Figure 1 shows ways in which student team members were incorporated into supportive course structures.

Figure 1. Incorporation of the instructional team into supportive course structures.

A significant decision that Dr. Lock made in transitioning to the online format was to adopt breakout Zoom sessions facilitated by two or three instructional team members. During the live-remote class meetings, students would join the breakout sessions multiple times to work together on assigned activities. Students were initially assigned to breakout sessions and later allowed to choose which session to join. While in the sessions, they would also have a chance to ask questions of the team members and each other. Dr. Lock chose this structure to replace her usual in-person approach in which students worked on class activities in groups of a few students while team members circulated in assigned “zones,” checking on groups and facilitating discussions when needed. Her decision to assign team members to breakout sessions was driven by the availability of her large team and by anecdotal reports that it is difficult to get students to speak up and interact in breakout rooms that lack active facilitators.

Because she valued the sense of community fostered in her usual in-person classes and was concerned that the online format would be isolating, Dr. Lock wanted to open up additional lines of communication for students outside the live-remote sessions. With input from members of her instructional team, she set up a course chat server where students could ask course and content questions, chat with each other, and even post (course-appropriate) memes and jokes as a way to connect with each other. Dr. Lock and the rest of her team responded to students’ questions and moderated students’ posts. The large, invested instructional team ensured quick response times, as members of the instructional team were available to respond at most hours of the day.

Dr. Lock also adapted instructional team roles for supporting responsive instruction in the online format. To aid appropriate pacing of class activities and “catch” common issues, team members facilitating activities reported progress on activities in real time through the team chat channel. Designated members of the instructional team (learning researchers; Kim, Cox et al., 2019; Hester et al., 2021) moved between different breakout sessions to collect evidence of student thinking, questions, and challenges; they reported these through a voice chat with Dr. Lock. In this way, Dr. Lock could respond to student thinking and challenges when the whole-class session resumed. A group of team members also acted as a survey task force. For each class, they administered a brief optional survey to collect students’ feedback on course operations and content, then reported the results to the rest of the instructional team as input for the upcoming class.

Finally, Dr. Lock maintained certain elements of team involvement and structure typical of her in-person courses. Several team members scheduled and held remote office hours, and some team members also planned, scheduled, and held exam review sessions. Ten team members met with Dr. Lock remotely each week to contribute to course planning by providing feedback on planned course activities and brainstorming ways to respond to challenges and opportunities. She communicated with the remaining team members through the chat server on channels restricted to the instructional team. In the chat, she shared slides and activities for the upcoming class, and instructional team members connected and discussed matters such as their questions about activities or content (which might be answered by Dr. Lock or another team member) or their experiences with students.

Notably, although the instructional team was exceptionally large (anecdotally, the opportunity to continue being a part of the course community and a desire to “pay it forward” to future students in the course drove recruitment of members), the same structures could be implemented with a smaller instructional team or a larger student-to-team-member ratio (e.g., by assigning fewer team members to facilitate each breakout session or creating a schedule for covering the chat channel).

How did the instructional team’s involvement impact students’ experiences?

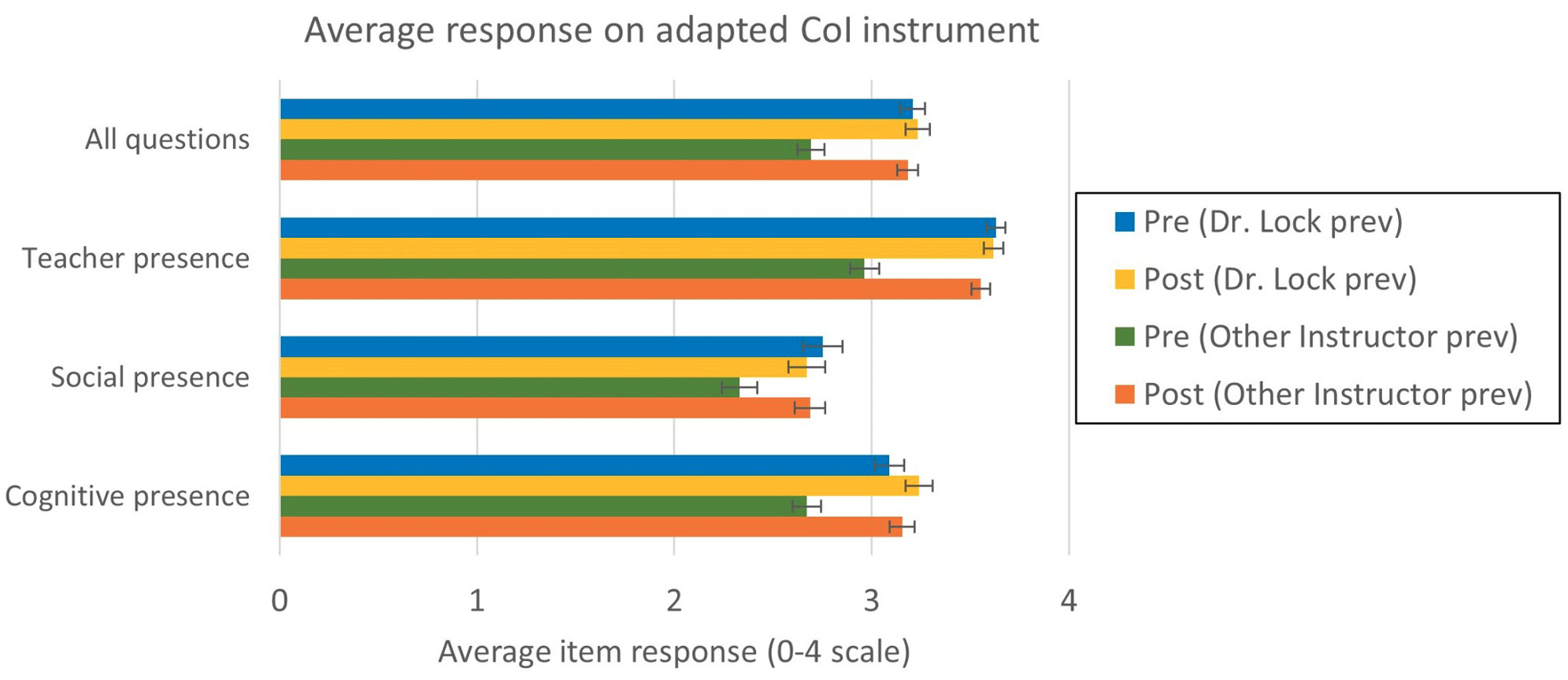

About half of the students had taken Dr. Lock’s section of the first course in the sequence the previous semester (with the same instructional team structure); other students had taken a different section of the preceding course with a different instructor (without a highly integrated instructional team). We took advantage of this “natural experiment” when analyzing the results of the CoI survey to investigate the impact of the instructional team on students’ perceptions. We compared the pre- and post-course responses of those who had taken Dr. Lock’s section the previous semester and those who had not. The averages of pre- and post-course responses overall and for the items measuring teaching, social, and cognitive presences for each of these two groups are shown in Figure 2.

Figure 2. Average student response on the pre- and post-course adapted CoI instrument.

Note. Students who had taken Dr. Lock’s section of the preceding course the previous semester are denoted as “Dr. Lock prev” (N = 82); students who had not are denoted as “Other Instructor prev” (N = 107). Error bars show the standard error of the mean for each data set.

Students who had taken the first semester of the course sequence with Dr. Lock in the previous semester had significantly higher pre-course measures, both across all items and for each presence, than students who had not (p < .0001). By the end of the semester, these measures had increased for students who had not previously taken the course with Dr. Lock to match those of their peers who had. This indicates that the students’ experiences in the course had positive impacts on their perceptions of the three presences in the online learning experience. The high pre-course measures for the group of students who had taken Dr. Lock’s fall 2020 section indicate that the differences in pre- and post-course measures for the other group of students were, in fact, due to experiences in the course and not to differences such as how the pre- and post-course surveys were framed or increasing student comfort with online learning in general.
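The comparisons above rest on paired tests within a group and two-sample tests between groups. The following is a minimal Python sketch of the two test statistics, using only the standard library and invented scores. The article does not say which two-sample variant was used, so Welch’s unequal-variance form is an assumption here, and the function names are hypothetical. Converting each statistic and its degrees of freedom into a p-value requires a t distribution, which a statistics package would supply (for example, `scipy.stats.ttest_rel` and `scipy.stats.ttest_ind`).

```python
from math import sqrt
from statistics import mean, variance


def paired_t(pre, post):
    """t statistic and degrees of freedom for matched pre/post scores."""
    if len(pre) != len(post):
        raise ValueError("paired samples must have equal length")
    diffs = [b - a for a, b in zip(pre, post)]
    n = len(diffs)
    # Undefined (division by zero) if every difference is identical.
    t = mean(diffs) / sqrt(variance(diffs) / n)
    return t, n - 1


def welch_t(x, y):
    """t statistic and Welch-Satterthwaite degrees of freedom for two groups."""
    vx, vy = variance(x) / len(x), variance(y) / len(y)
    t = (mean(x) - mean(y)) / sqrt(vx + vy)
    df = (vx + vy) ** 2 / (vx ** 2 / (len(x) - 1) + vy ** 2 / (len(y) - 1))
    return t, df


# Invented per-student category averages on the 0 to 4 scale (not study data).
pre = [2.0, 2.5, 3.0, 2.0]
post = [3.0, 3.0, 3.5, 3.0]
print(paired_t(pre, post))

group_a = [3.0, 3.5, 3.0, 3.5]
group_b = [2.0, 2.5, 2.0, 2.5]
print(welch_t(group_a, group_b))
```

The paired form uses each student's own pre-course response as the baseline, which is why only students with matched pre- and post-course surveys can be included in it.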

It is predictable that students’ perceptions of teaching presence would be positively impacted by the high degree of communication and opportunities for contact between students and the instructional team built into the structures of the course. Students’ perceptions of cognitive and social presence were also greater in Dr. Lock’s course than in their previous online courses. This suggests that the instructional team’s involvement promoted students’ social and cognitive engagement in the course. It is also consistent with what others have learned through applying the CoI survey; previous studies have found that strong teaching presence positively influences perceptions of social presence and cognitive presence (Shea & Bidjerano, 2009; Garrison, Cleveland-Innes et al., 2010; Lin et al., 2015).

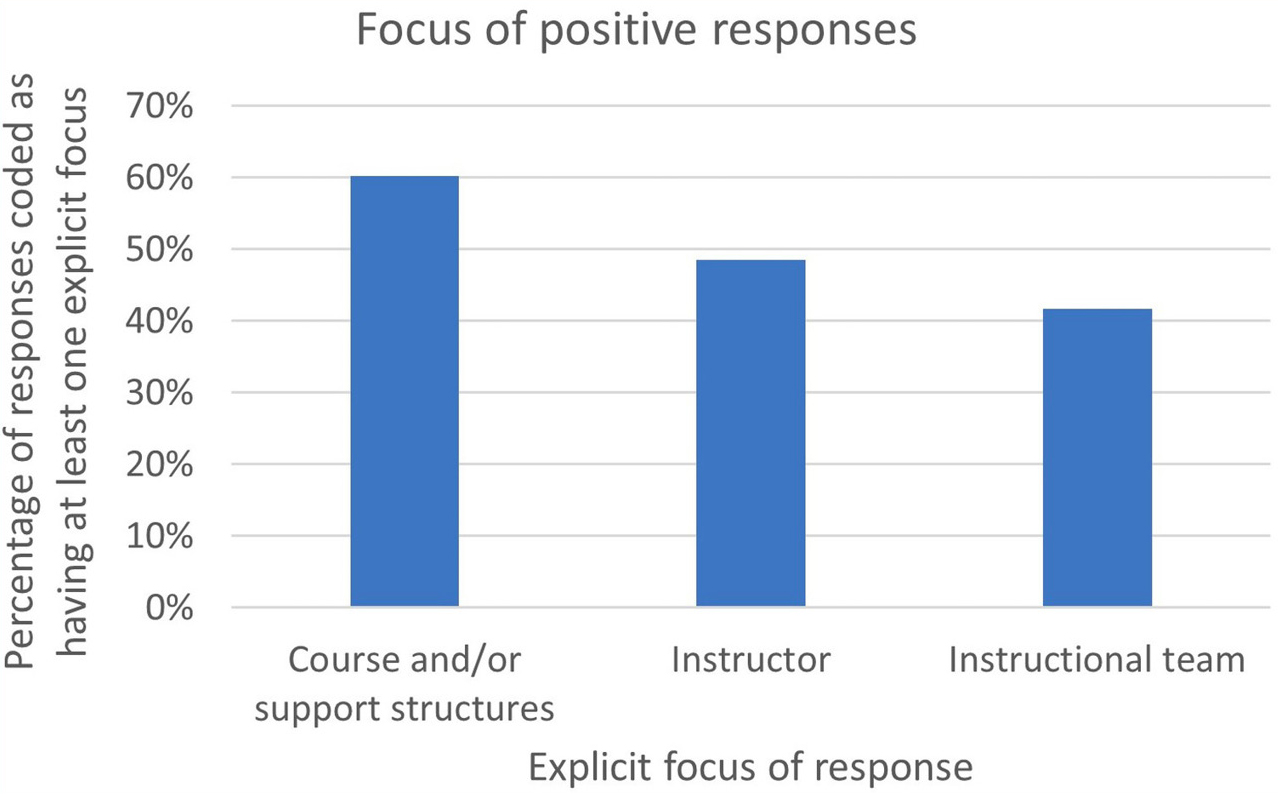

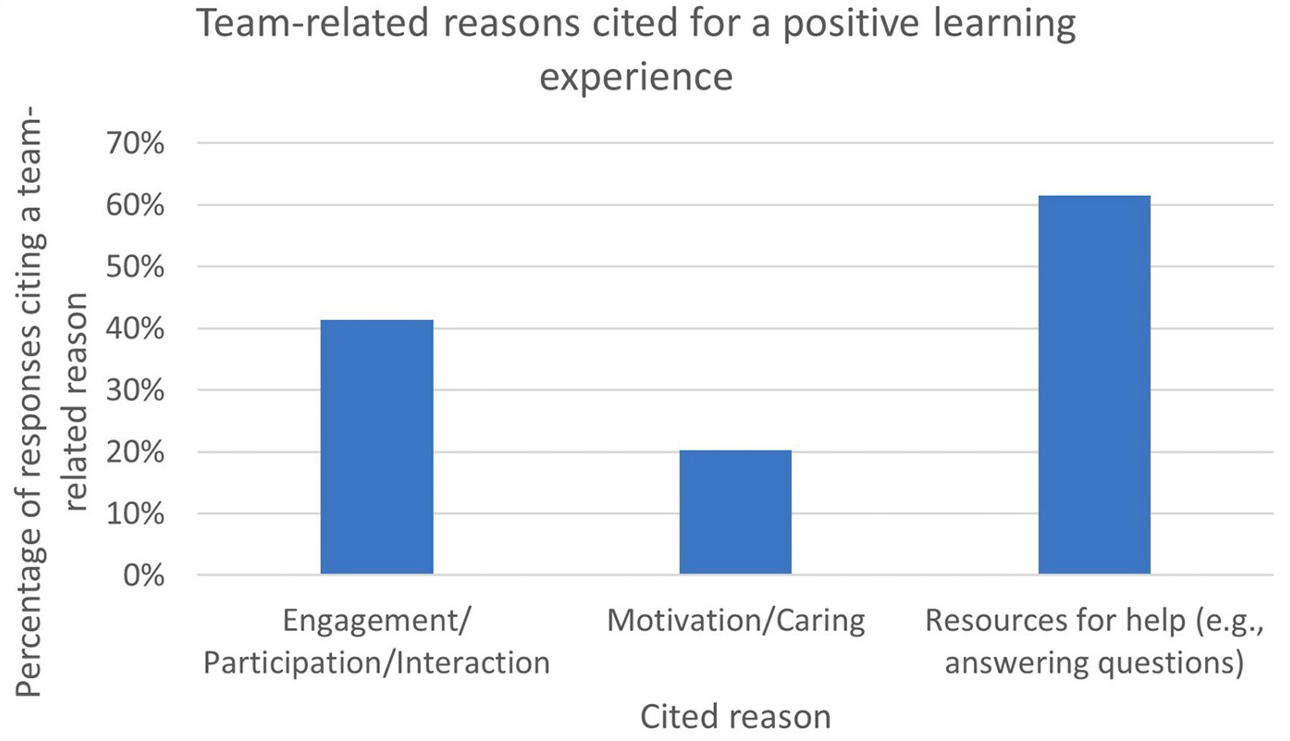

To get a better sense of what course features accounted for students’ positive experiences, we coded responses to the free-response question “Compared to your other courses, how would you describe the learning experience in this course? Please highlight anything that stood out to you—what about the course affected your learning experience?” The coding scheme is shown in Table 1. The overwhelming majority of the responses were coded as positive (positive = 190, neutral = 3, negative = 6, mixed = 17, blank = 2), and coding results indicated a significant impact from the instructional team’s involvement. As shown in Table 1, we coded responses for the explicit focus or foci of their answer, if any. The results of this coding for positive responses are shown in Figure 3. References to the team were frequent in the responses, as the team was cited as a reason for a positive learning experience in the course almost as often as the instructor was. To better understand the ways in which the team positively impacted students’ learning experiences, we coded positive responses for the types of team-related reasons cited for the positive experience (team-related reasons for positive experience category in Table 1). More than half of the positive responses (104 out of 190, 54.7%) cited a reason related to the involvement of the team in the course. The breakdown of the types of reasons given is shown in Figure 4.

Figure 3. Explicit focus of positive responses.

Note. Percentages shown are for responses coded as having at least one explicit focus (161 responses). Percentages add up to greater than 100% because a single response could be coded as having multiple foci.

Figure 4. Team-related reasons cited for a positive learning experience.

Note. Percentages shown are of responses citing a team-related reason for a positive learning experience (104 responses). Percentages add up to greater than 100% because a single response could cite multiple team-related reasons for a positive learning experience.

Students were most likely to express appreciation for practical support in the course, such as resources and access to help from instructional team members. They also remarked on more affective or social impacts, although mentions of these were less common. This is consistent with the CoI survey results. Although the codes and the survey constructs do not align perfectly, course structures and resources for help are most closely related to teaching presence as measured on the survey, whereas the other codes are more closely related to cognitive (engagement) and social (interaction, perceptions of a caring team) presences.

The commonly cited reasons for students’ positive experiences that emerged from the data related directly to the ways in which Dr. Lock integrated the team into course structures (e.g., to support student engagement in live-remote sessions and provide easily accessible opportunities for help through the informal chat channel and office hours). The course investigated here was distinct from typical remote courses in that it was adapted to the online setting in response to the COVID-19 pandemic. These results, however, point to ways that an instructional team could promote positive student outcomes in remote courses more generally.

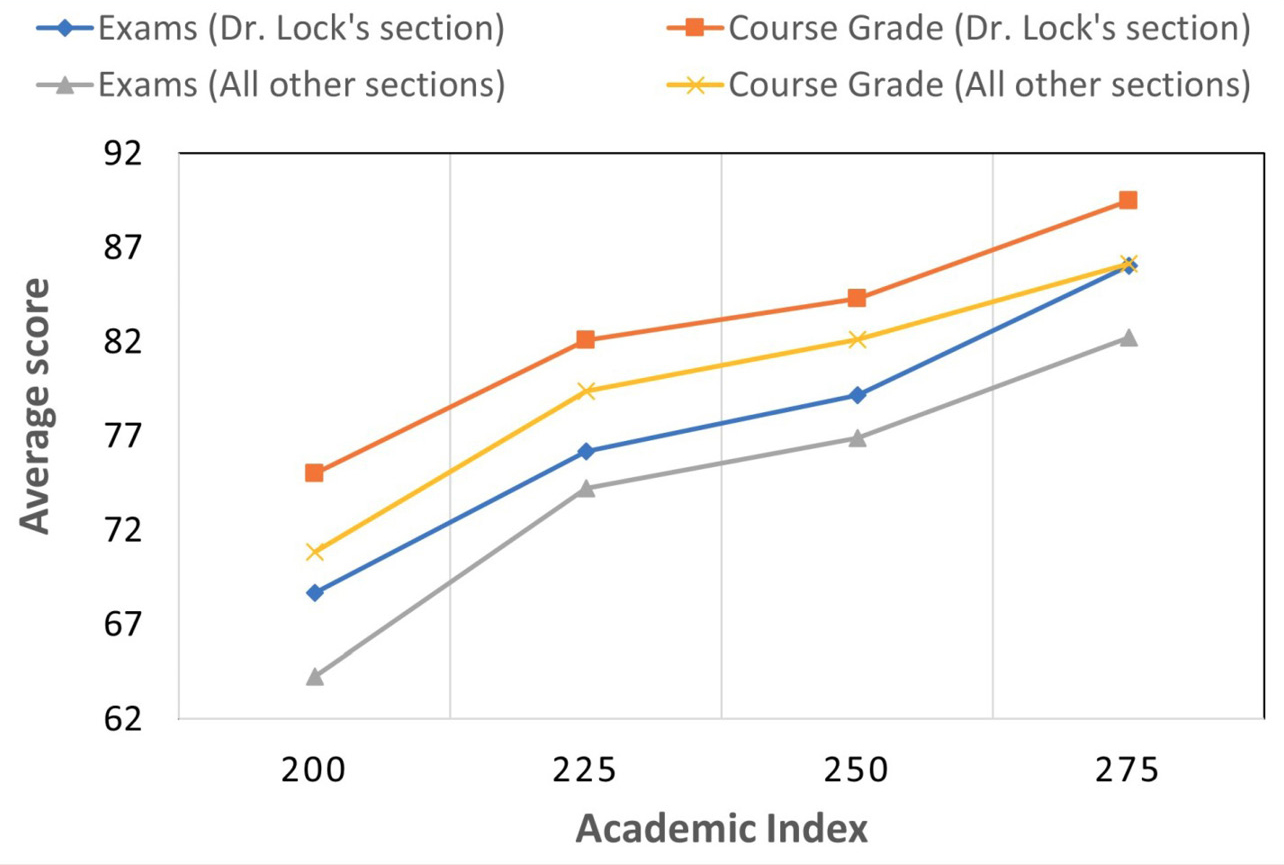

How did the instructional team’s involvement impact students’ course performance?

We would expect students’ learning experiences in a course to have an impact on their learning outcomes. In particular, students’ reported sense of teaching, social, and cognitive presence may predict their perceived learning and actual performance in online synchronous and asynchronous courses in other contexts (Akyol & Garrison, 2008; Rockinson-Szapkiw et al., 2016). Therefore, we predicted that the instructional team’s involvement would positively impact students’ course performance. Because all sections of the course sequence are taught with standardized curricular materials and many of the same team-independent course structures (as described in the Methods section), we compared average student performance on (identical) exams and final course grades (calculated using a standard structure and based largely on standard assignments and exams) in Dr. Lock’s instructional team–supported course and in other sections of the course. We found that, on average, students in Dr. Lock’s section outperformed students in other sections for course exams and final course grade, independent of their level of preparation as measured by Academic Index (Figure 5). This is consistent with a role for the instructional team in positively impacting students’ learning outcomes, in addition to their experiences in the course.

Figure 5. Comparison of student performance on course exams between Dr. Lock’s team-supported section and other sections of the same course.

Note. The average score on all course exams and final course grades are shown by Academic Index, a measure of college readiness based on a student’s high school grade point average, class rank, and ACT or SAT scores.

Conclusion

We investigated the potential of a large, structured, highly integrated instructional team to support students’ learning experiences and outcomes in a live-remote online format. The instructional team’s involvement was key to implementing course structures, including those adapted from the in-person course format and those adopted to address unique challenges of remote learning. The integration of the instructional team positively impacted students’ experiences in the course, increasing their perceptions of teaching presence, cognitive presence, and social presence. Students expressed appreciation for the extensive resources for help provided by the team; the increased engagement, interaction, and participation facilitated by the team; and the team’s caring for and investment in students’ learning. Students in the section of the course with an integrated instructional team outperformed students in otherwise similar sections of the same course, indicating a role for the instructional team in promoting positive student learning outcomes. Overall, the results of this study speak to the potential for instructional teams to promote positive student experiences and student learning in large STEM courses in online formats.

ACKNOWLEDGMENTS

We would like to thank Dr. Lock’s amazing instructional team and students; without them, this study would not have been possible. This work was supported by the National Science Foundation (DUE-1626531) and the University of Arizona Foundation.

Susan D. Hester (sdhester@arizona.edu) is an assistant professor of practice in the Department of Molecular and Cellular Biology; Jordan M. Elliott is an undergraduate student in the Department of Biomedical Engineering; Lindsey K. Navis is an undergraduate student in the Department of Psychology; L. Tori Hidalgo is an assistant professor of practice in the Department of Chemistry and Biochemistry; Paul Blowers is a professor in the Department of Chemical and Environmental Engineering; Lisa K. Elfring is an associate vice provost in the Office of Instruction and Assessment; Karie L. Lattimore is an assistant research administrator in the Department of Chemistry and Biochemistry; and Vicente Talanquer is a professor in the Department of Chemistry and Biochemistry, all at the University of Arizona in Tucson, Arizona. Young Ae Kim is an assistant professor of undergraduate education at the Defense Language Institute Foreign Language Center in Monterey, California, and a research associate in the Department of Chemistry and Biochemistry at the University of Arizona in Tucson, Arizona.
