Feature

MindHive: An Online Citizen Science Tool and Curriculum for Human Brain and Behavior Research

Connected Science Learning March-April 2022 (Volume 4, Issue 2)

By Suzanne Dikker, Yury Shevchenko, Kim Burgas, Kim Chaloner, Marc Sole, Lucy Yetman-Michaelson, Ido Davidesco, Rebecca Martin, and Camillia Matuk

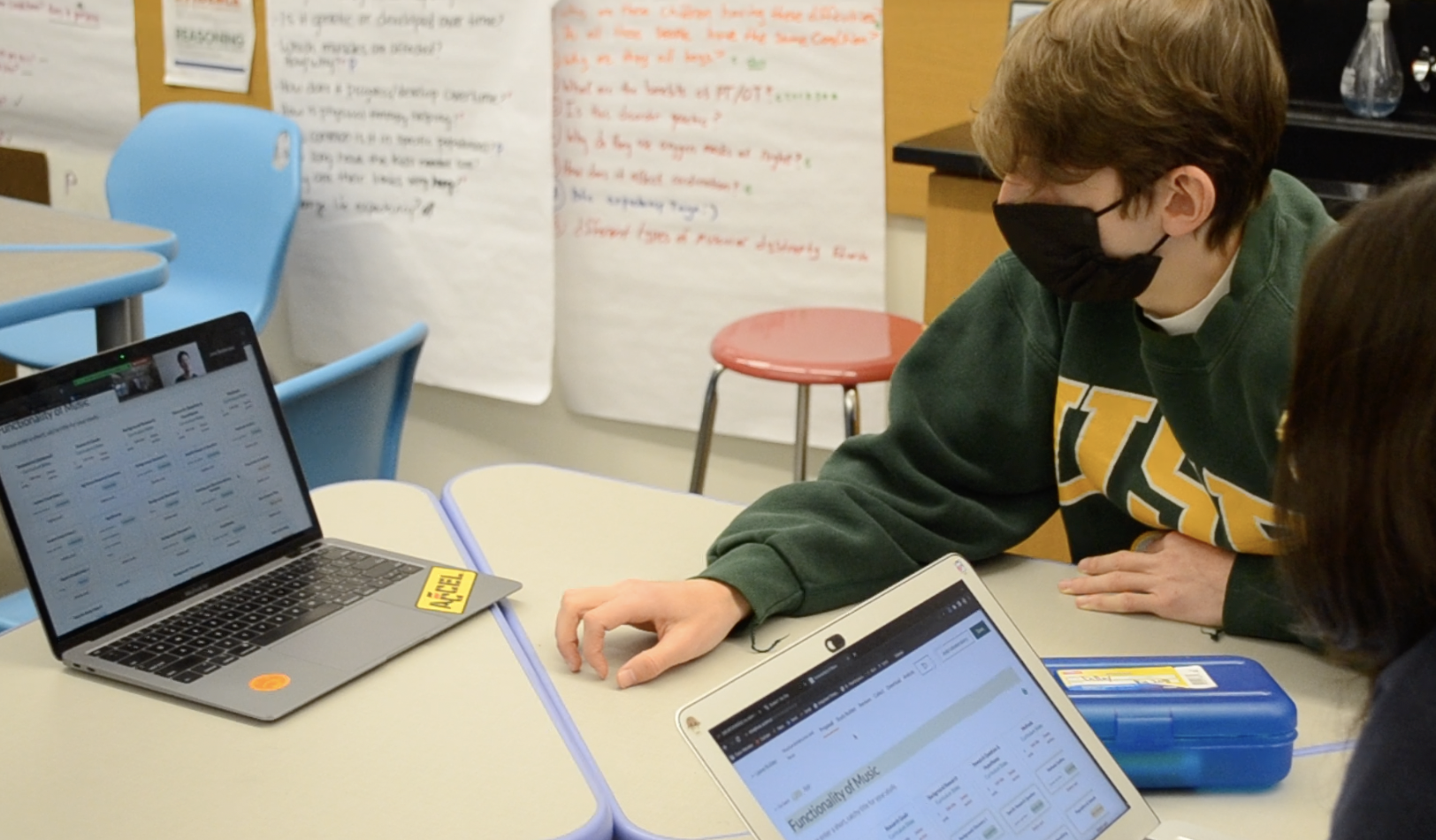

MindHive is an online, open science, citizen science platform co-designed by a team of educational researchers, teachers, cognitive and social scientists, UX researchers, community organizers, and software developers to support real-world brain and behavior research for (a) high school students and teachers who seek authentic STEM research experiences, (b) neuroscientists and cognitive/social psychologists who seek to address their research questions outside of the lab, and (c) community-based organizations who seek to conduct grassroots, science-based research for policy change. In the high school classroom, students engage with lessons and studies created by cognitive and social neuroscientists, provide peer feedback on studies designed by students within a network of schools across the country, and develop and carry out their own online citizen science studies. By guiding them through both discovery (student-as-participant) and creation (student-as-scientist) stages of citizen science inquiry, MindHive aims to help learners and communities both inside and beyond the classroom to contextualize their own cognition and social behavior within population-wide patterns; to formulate generalizable and testable research questions; and to derive implications from findings and translate these into personal and social action.

Leveraging open science to increase science literacy

The COVID-19 pandemic has brought science to the front page of our lives and with it, science literacy challenges. The rapid spread of the virus has been accompanied by a spread of misinformation that has made it difficult for many people to discern scientific evidence from less reliable sources of information (Van Bavel et al. 2020). This aligns with a recent communication by the National Institutes of Health about science literacy, which cites surveys conducted in the United States and Europe that found that many members of the general public do not have a firm grasp of basic science concepts or the scientific process and tend to value anecdotes over evidence. Vulnerable communities in particular often feel disconnected from and wary of science, making them not only less likely to participate in research studies but also less likely to adhere to public health recommendations (e.g., see a recent article in The Atlantic about vaccine hesitancy). Suspicion of science and scientists is compounded by the fact that scientists' relationship with the public has historically been unidirectional, non-transparent, and non-inclusive. For example, human neuroscientists and psychologists conduct research on the public but do not necessarily communicate with them about the research.

To address issues related to replicability, transparency, and inclusion in science, scientists increasingly embrace a so-called “open science” approach. MindHive strives to align itself and familiarize learners with six main open science tenets (Fecher and Friesike 2014):

- make knowledge freely available to all platform users (Democratic),

- make the science process more efficient and goal-oriented (Pragmatic),

- make science accessible to everyone (Public),

- create and maintain tools and services (Infrastructure),

- measure the scientific impact of research (Measurement), and

- support community inclusion and commitment (Community).

MindHive supports this open science approach in various ways. For example, we “practice what we preach” by making the MindHive platform project completely open source: The source code used to build the platform can be examined on GitHub, which should promote transparency and help ensure the longevity of the project. Another requirement for open science is the ability to share resources (in our case, anonymized data) that can be used for re-analysis and further research. Anonymized data from MindHive studies can be accessed on the platform by authorized users, and all the educational research data is made available via open access data repositories such as The Open Science Framework and the Qualitative Data Repository.

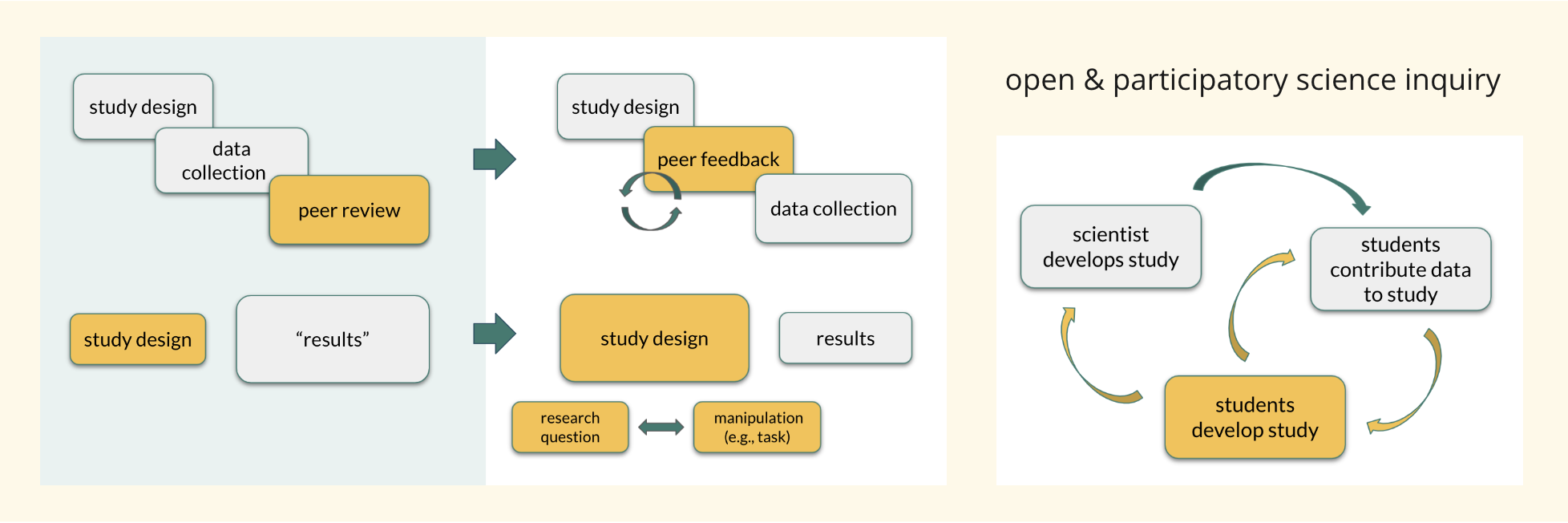

Peer feedback on study designs, not study outcomes

In recent years, a number of findings in psychology research have turned out to not be replicable, and this “Replication Crisis” can be quite damaging to the public’s trust in science (Earp and Trafimow 2015). Therefore, many human brain and behavior scientists are now advocating for a fully transparent research model for psychology research that resembles what is already common practice in clinical science: a public pre-registration of how you plan to collect and analyze data. This is also slowly changing how scientific peer review is operationalized: Increasingly, scientific journals invite scientists to submit (and review) research projects for publication before data collection occurs, moving away from a model where scientists give and receive peer reviews after the entire study is completed. This forces scientists to be open and transparent about which steps were part of the research plan from the beginning, and which decisions were made after data collection took place. But it also has another benefit: Scientists are able to improve their research plans based on peer reviews before investing time, energy, and money into possibly flawed studies.

In MindHive, students are also encouraged to give and receive peer feedback on study proposals rather than completed studies. Peer review takes place with classmates and, crucially, with students in other classrooms across the country. First, this process allows students to benefit maximally from the review process: They are not only able to tweak their study design based on feedback from their peers, but the act of giving feedback to peers also likely helps students improve their own study (Li et al. 2010). Second, and relatedly, this process shifts the emphasis from study outcome to study design. We have found in previous classroom human brain and behavior experiments that students (and professional scientists, for that matter) are very focused on whether their hypotheses were borne out, and the perceived failure or success of a study is often linked to the results alone. This pressure to confirm hypotheses and emphasis on study outcomes over study design can lead to questionable research practices, “over-interpreting” data, and, in extreme cases, fraud. In MindHive, we therefore flip this process around: Students learn that results are meaningless if the research question is not well-formed, or if the study design is not well-aligned with the research question. In the peer review process, students are rewarded for their ideas rather than their study outcomes. As such, we hope to increase fascination with science inquiry and not “just” with science discovery. We would like students to walk away from MindHive with a “Check out my idea! How cool is that?” rather than “Check out my results!”

Citizen science

In addition to promoting data and content that can “be freely used, modified, and shared by anyone for any purpose,” open science advocates have stressed the importance of citizen science (Eitzel et al. 2017; Fecher and Friesike 2014), defined broadly as public engagement in scientific research. Citizen science has been shown to boost science literacy in both formal and informal learning settings (Bonney et al. 2016; Harris et al. 2020), enabling participants of all ages to appreciate science inquiry as an iterative and collective endeavor to which they can provide valuable contributions.

In most citizen science initiatives, the public helps collect data for research designed and analyzed by professional scientists (Bonney et al. 2009). MindHive instead advocates a partnership model wherein experts and non-expert participants are included as stakeholders in all stages of scientific inquiry, including conception and design (see Figure 1; Dikker et al. 2021). MindHive follows a participatory science learning approach (Koomen et al. 2018; NGSS Lead States 2013) by emphasizing authentic problems and the social negotiation of knowledge in the context of open science and citizen science. Additionally, educators are participating in the process and increasing their understanding of how to teach the nature of scientific inquiry as well. In the next section, we discuss how this model can be put into practice.

The MindHive curriculum

All activities on the MindHive platform are supported by curricular materials. The lessons are co-designed with scientists and teachers, ensuring that the vision for application of the curriculum and its integration into a larger school program is relevant to current practice. For example, the content is aligned with the Next Generation Science Standards (NGSS Lead States 2013) and is structured to follow the “5 Es” (Engage, Explore, Explain, Elaborate, and Evaluate; Bybee et al. 2006). The unit is “alive” in that it is iterated on and improved with every implementation, and lessons are stand-alone where possible to serve educators’ varying teaching needs. Due to the wide applicability of research methods and to the relevance of cognitive and social neuroscience perspectives across fields, the program can be integrated into a range of high school science contexts, from Environmental Science to Molecular Biology. In approximately 12–24 lessons, the program guides students in: (1) scientific knowledge generation, (2) citizen science and ethics in human cognitive and social neuroscience research, (3) human brain and behavior case studies, (4) study design, (5) peer review, and (6) data analysis and synthesis.

The MindHive platform

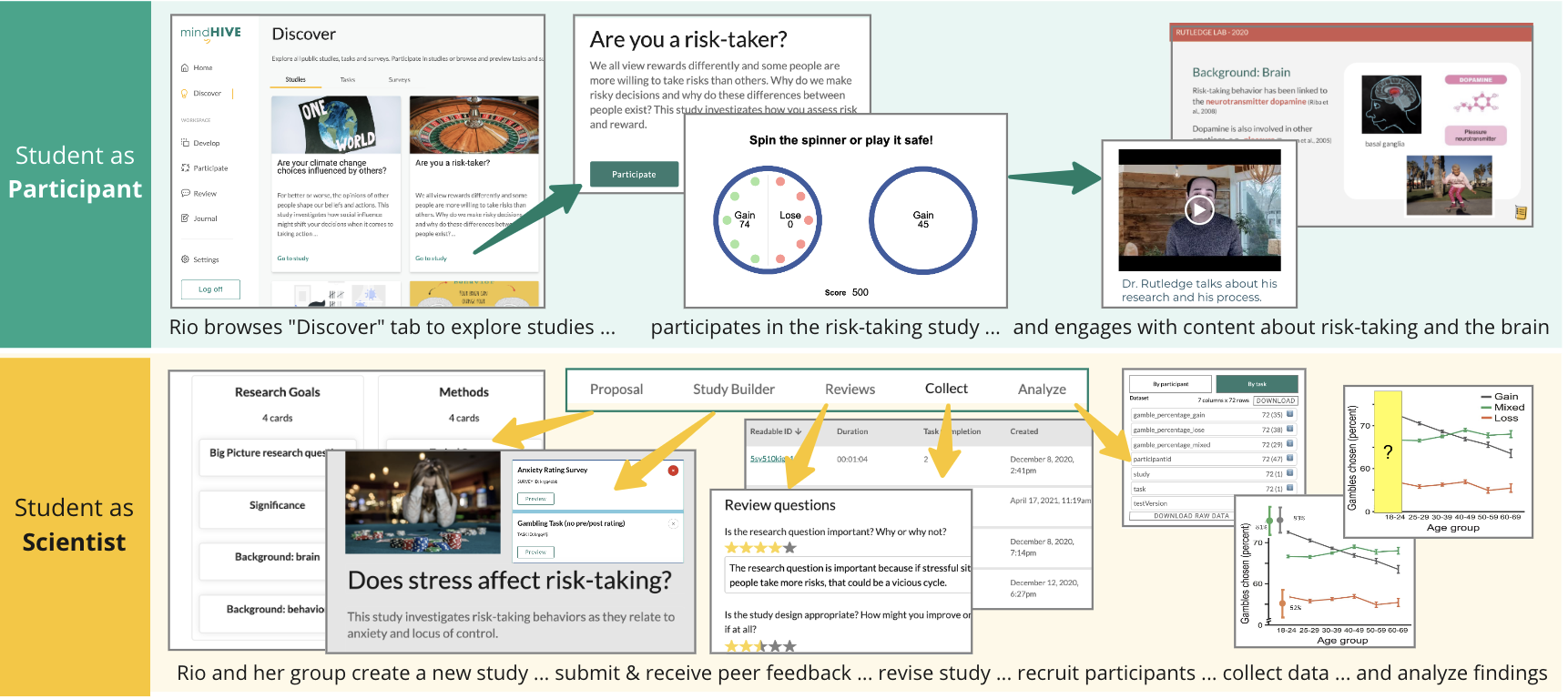

The MindHive platform features tools for developing study proposals and for giving and receiving peer reviews, and a public database of commonly used online cognitive tasks and surveys from which users can drag-and-drop to build research studies aligned with their research questions. To promote iterative research design and to scaffold their own study design, students are encouraged to “clone” and build upon scientist-initiated studies from the platform.

Discover

The Discover area allows students to explore and participate in studies created by cognitive and social neuroscientists. The Discover area also features a section where they can explore and partake in studies created by other students, and try out tasks and surveys that are featured on the platform.

Develop

The Develop area allows students to develop and carry out their own online citizen science studies. The Proposal tab consists of text-based “cards” designed to help students learn to create realistic collaboration plans. Students can assign different sections to themselves and each other (e.g., Anna and Rick flesh out the Background section, Luna writes the Importance section, Hiram and Ember are in charge of describing the Methods, etc.); provide and receive comments from their teachers, peers, and scientists; and toggle between draft and print views of their proposal. This format allows a variety of learners to engage successfully with complex material thanks to the pre-organized tasks that build toward a successful proposal. The Study Builder consists of an intuitive interface that allows students to create a study page and build an experiment using a block-based design approach: Students can mix, match, and tweak tasks from a database of validated tasks and surveys (described below). Students can read what other students thought of their study in the Review tab. Finally, the Collect and Analyze tabs allow them to manage and analyze the data collected in their study.

Public Task and Survey Bank

The public task and survey bank includes well-established and well-validated psychological tasks and surveys. For example, the Stroop Task is a widely used task to probe a person’s cognitive control, in this case their ability to ignore contradictory information. Participants are asked to identify the color of words, the meanings of which sometimes match their color (e.g., the word red printed in red), and sometimes do not (e.g., the word green printed in red). The survey bank features questionnaires that are widely used to probe people’s emotional states, personality traits, demographic info, etc. For example, the Big Five Personality Inventory is a personality trait questionnaire that is commonly used by scientists and that students can implement in lieu of popular but not scientifically validated “personality tests” they might otherwise choose for their studies. Other questionnaires ask about participants’ mood and anxiety, coping strategies, perceived status in society, etc.
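To make the logic of the Stroop Task concrete, the following is a minimal sketch of how matching (congruent) and mismatching (incongruent) trials could be generated and how a simple interference score could be computed from response times. It is an illustration only, not the MindHive implementation: the color list, the function names `make_trials` and `interference_ms`, and the result fields `rt_ms` and `correct` are all assumptions made for this example.

```python
import random
from statistics import mean

# Hypothetical color set; a real task may use a different one.
COLORS = ["red", "green", "blue", "yellow"]


def make_trials(n_per_condition, seed=0):
    """Build a shuffled list with equal numbers of congruent and incongruent trials."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_per_condition):
        ink = rng.choice(COLORS)
        # Congruent: the word names its own ink color (e.g., "red" printed in red).
        trials.append({"word": ink, "ink": ink, "congruent": True})
        # Incongruent: the word names a different color (e.g., "green" printed in red).
        other = rng.choice([c for c in COLORS if c != ink])
        trials.append({"word": other, "ink": ink, "congruent": False})
    rng.shuffle(trials)
    return trials


def interference_ms(results):
    """Mean correct response time on incongruent trials minus that on congruent trials."""
    congruent = [r["rt_ms"] for r in results if r["congruent"] and r["correct"]]
    incongruent = [r["rt_ms"] for r in results if not r["congruent"] and r["correct"]]
    return mean(incongruent) - mean(congruent)
```

A larger positive score would suggest that the contradictory word slowed the participant down more. Only correct responses are included here, which is a common but not universal analysis choice.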

Figure 2 exemplifies how an 11th grader, “Rio,” engages with the MindHive platform to learn about human brain and behavior science, and combines existing tasks and surveys to create a study about risk-taking and coping.

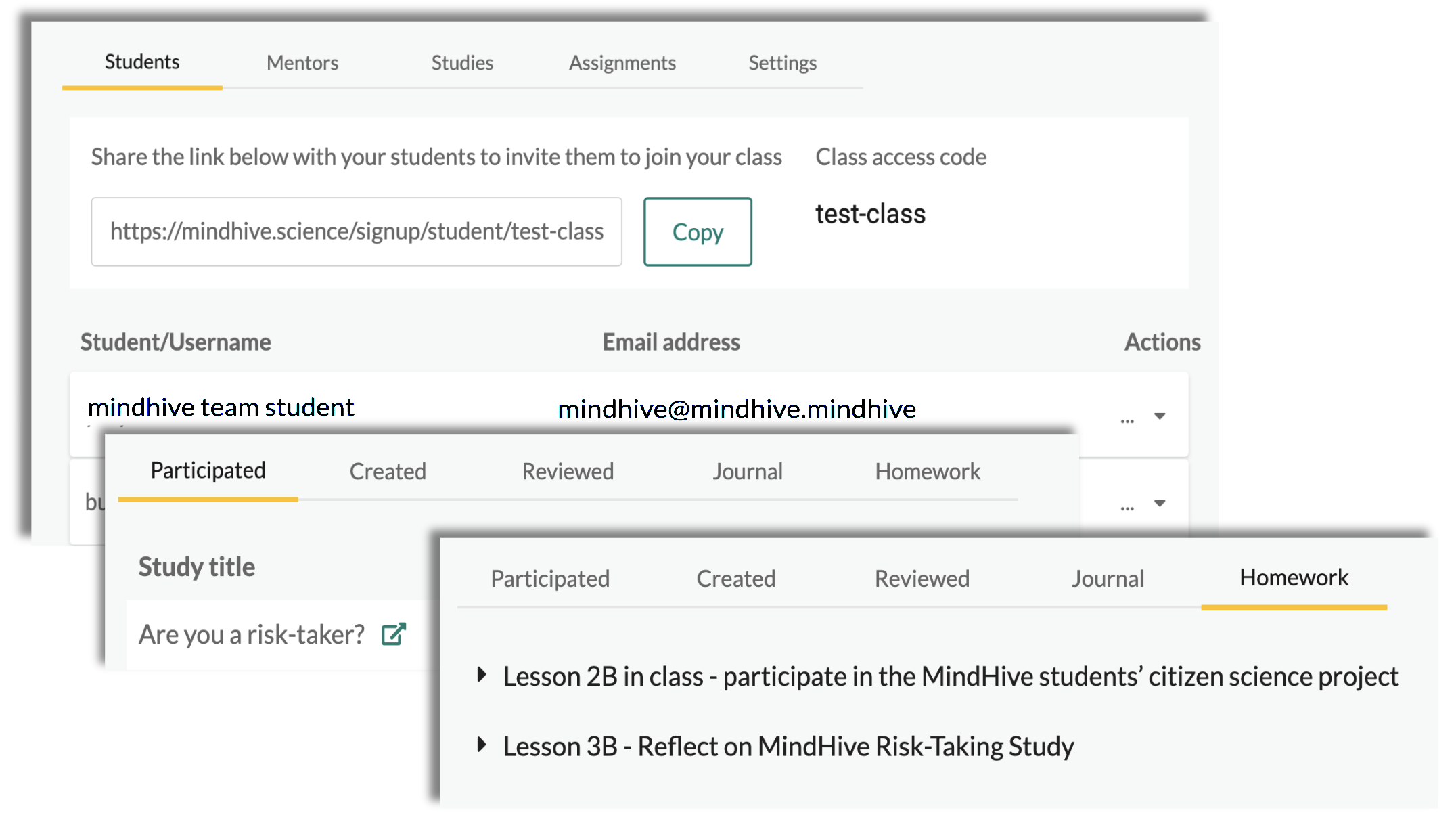

The teacher experience

To assist teachers in supporting their students, MindHive provides the basic infrastructure of a Learning Management System. Teachers can create classes and add students; create class networks with other teachers; keep track of the studies that their students have participated in, reviewed, or developed; create study proposal templates and comment on them; and create and manage assignments and group chats (see Figure 3). Teachers are supported in facilitating the program through multiple resources including access to research, researcher support, and guidance from the mentor on the MindHive team. Detailed activities, rich discussion prompts, and thoughtful student explorations are included so that teachers can choose how to optimize their classroom practices with the material. Teachers can further guide and support students through the inquiry process by incorporating external resources. For example, Frontiers for Young Minds and Columbia University’s brainSTEM program both host scientific articles targeted at teen and adolescent readers, and can be used as inspiration for students’ research questions and as support for their background research.

Protecting student data

Since the MindHive program is centered around human behavior, data protection is integral to the platform and to the students’ learning experience. Students learn about the importance of ethics in human brain and behavior research, engage in class discussions around data protection and privacy, and experience firsthand what these data protection practices mean for them and for their study participants.

The platform has an authentication system with multiple levels of authorization that depend both on the user role (teacher, student, scientist, or participant) and on individual preferences. For example, only teachers and classmates will see student names; only researchers with official approval from their institution’s Institutional Review Board (IRB) can see contact details for their study participants; students have different “avatar” usernames depending on whether they are acting as study participants or as students so that their teachers and peers cannot readily access their study data; and if a student indicates that their data should only be used for educational purposes, that data will not be displayed to researchers but will only be available within the scope of their class. Importantly, contrary to many data platforms in the United States, MindHive users own their own data. In compliance with General Data Protection Regulation (GDPR) standards, users in the European Union can request that any of their data be deleted at any time.
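The role- and preference-based rules described above can be sketched as a few small visibility checks. This is a simplified illustration under stated assumptions, not the platform's actual code: the `Viewer` and `StudentRecord` types, their field names, and the three function names are hypothetical, and the real system is likely to involve more roles and conditions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Viewer:
    role: str                       # "teacher", "student", "scientist", or "participant"
    class_id: Optional[str] = None  # class the viewer belongs to, if any (assumed field)
    irb_approved: bool = False      # whether the viewer has IRB approval (assumed field)


@dataclass(frozen=True)
class StudentRecord:
    class_id: str
    education_only: bool  # student chose to share data for educational purposes only


def can_see_student_name(viewer, record):
    """Student names are visible only to teachers and classmates in the same class."""
    return viewer.role in {"teacher", "student"} and viewer.class_id == record.class_id


def can_see_contact_details(viewer, owns_study):
    """Contact details require an IRB-approved scientist viewing their own study."""
    return viewer.role == "scientist" and viewer.irb_approved and owns_study


def researcher_can_use_data(viewer, record):
    """Data marked education-only is never displayed to researchers."""
    return viewer.role == "scientist" and not record.education_only
```

Keeping each rule in its own function makes it easy to discuss with students which role can see which piece of information, and why.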

Flexible implementation

The structure and the curriculum content can both be implemented flexibly in formal and informal learning environments. For example, Human Brain and Behavior lessons (see Table 1 in Supplemental Resources) are constructed as case studies that can be “mixed and matched,” and teachers can choose to put emphasis on what they deem most important: study design, peer review, data collection and analysis, or all of the above. This flexibility allows teachers to use functionalities of the platform to frame and support, as opposed to detract from, required (standards-based) course content, as much of the MindHive curriculum focuses on crosscutting concepts (e.g., cause and effect) and widely applicable science practices (e.g., planning an investigation). Having said that, as a full-fledged curriculum, MindHive is a better fit for an elective or a class based on the NGSS, as opposed to preparation for standardized examinations, such as the Regents examinations of New York State.

The Study Builder is designed to enable both group-based and individual student projects, and peer feedback can be arranged both between classmates and between students from other classes (across or within schools). As a result, the program is suitable for fully remote, hybrid, or in-person contexts in both formal and informal learning settings. For example, in addition to guiding in-class projects, the program can support the development of extracurricular projects, such as science fair submissions, by enabling students to design, receive feedback on, and run their own studies outside of the classroom. As discussed in the next few sections, the MindHive platform and program are designed to increase students’ research skills while teaching them about the scientific process and human brain and behavior content. As described below, this makes MindHive accessible to different age ranges (9th to 12th grade so far) and classes (Environmental Science, Biology, Neuroscience, after school research clubs, etc.).

Benefits of an online platform

MindHive’s flexibility in implementation is in part made possible by the fact that the platform is browser-based. Students do not have to download anything, and they can access the platform through any device that is connected to the internet, although it’s important to note that not all the functionality is suitable for mobile devices. Beyond easy access, MindHive is designed as an online platform to allow students, teachers, and scientists to work on science inquiry in an iterative and collaborative manner. First, studies and data sets continue to live on the platform beyond individual implementations, allowing students to “clone” scientist-initiated studies and ask follow-up questions, contribute data, or even adopt student-initiated studies and continue data collection and analysis. Second, MindHive emphasizes collaboration between schools. Since the launch of MindHive in 2020, students have engaged in study participation and peer review between geographically and demographically diverse schools across the United States, including both private and public schools ranging from New York City to Tennessee. Third, the online setup facilitates remote student-teacher-scientist partnerships. This is especially attractive for students who may not live near research universities, and who may not have easy access to in-person science mentorship programs. Finally, as described in more detail below, the remote nature of MindHive has made it possible to continue to support students in their science inquiry throughout the COVID-19 pandemic, and also in other online learning environments, which are part of an increasing market.

Implementing MindHive during a global pandemic

From its inception in the Spring of 2020 through Spring 2022, MindHive has been implemented in 15 classrooms, serving around 350 students. Students and scientists have together designed or drafted about 250 studies, for which 1,600 data sets have been collected.

Beyond classroom implementations, the MindHive platform has been used to promote STEM engagement and identify community needs by supporting local citizen science projects. In the Brownsville Sentiment Equity Project, the MindHive team worked with six local community organizers and residents, researchers from UC Berkeley, and the not-for-profit organization Public Sentiment to co-design a cognitive and social neuroscience citizen science project centered on cognitive and social-emotional outcomes linked to pandemic-related changes in the community of Brownsville, Brooklyn, one of the hardest-hit areas in New York City.

Scientists, students, and communities entering a lockdown together

MindHive was first launched in March of 2020 as part of a pilot implementation with 17 Environmental Science students in Manhattan. New York City was the epicenter of the COVID-19 pandemic and the MindHive team and students entered the U.S. lockdown together. The curriculum was (re)framed to use COVID-19 to illustrate scientific discovery in an ongoing crisis (e.g., Should the vaccine be rolled out fast or should we await clinical trial outcomes? Which research questions are important now and which will be important beyond the pandemic?), science communication (e.g., What is the value of releasing study outcomes before they have been scrutinized by other scientists?), and human behavior (e.g., Why do college students decide to go party in Miami in the middle of a pandemic? Are you more likely to adopt socially desirable behaviors from your peers or from your parents?). Alongside these lessons, students participated in scientist-initiated studies on the platform that illustrated risk taking across the age span and social influence from peers vs. parents.

Using the global relevance of the pandemic, students then created their own studies, in groups of four, focusing on human brain and behavior in relation to COVID-19. Students asked research questions about mental health and social isolation, remote vs. in-person learning, and how social behavior can make or break public health directives. For example, students asked whether personality traits might predict how well a student thrives in “Zoom school” (see Figure 4). Read an account of this implementation from the teacher perspective here.

After implementation, NYU scientists incorporated the students’ research questions into a study entitled “How do you cope during the pandemic?” (henceforth referred to as the Pandemic Citizen Science Study), for which data was subsequently collected from high school and university students through Fall 2020 and Spring 2021. Findings from 206 students suggest that personality traits indeed affect how connected students felt to their peers and teachers in in-person vs. remote learning environments. Furthermore, there was a mismatch between students’ remote learning preferences and what they were offered: While students overwhelmingly preferred asynchronous learning (e.g., being assigned materials they could complete at their own pace), none were offered asynchronous learning models at their schools or colleges.

Collaborative inquiry: study design and peer review

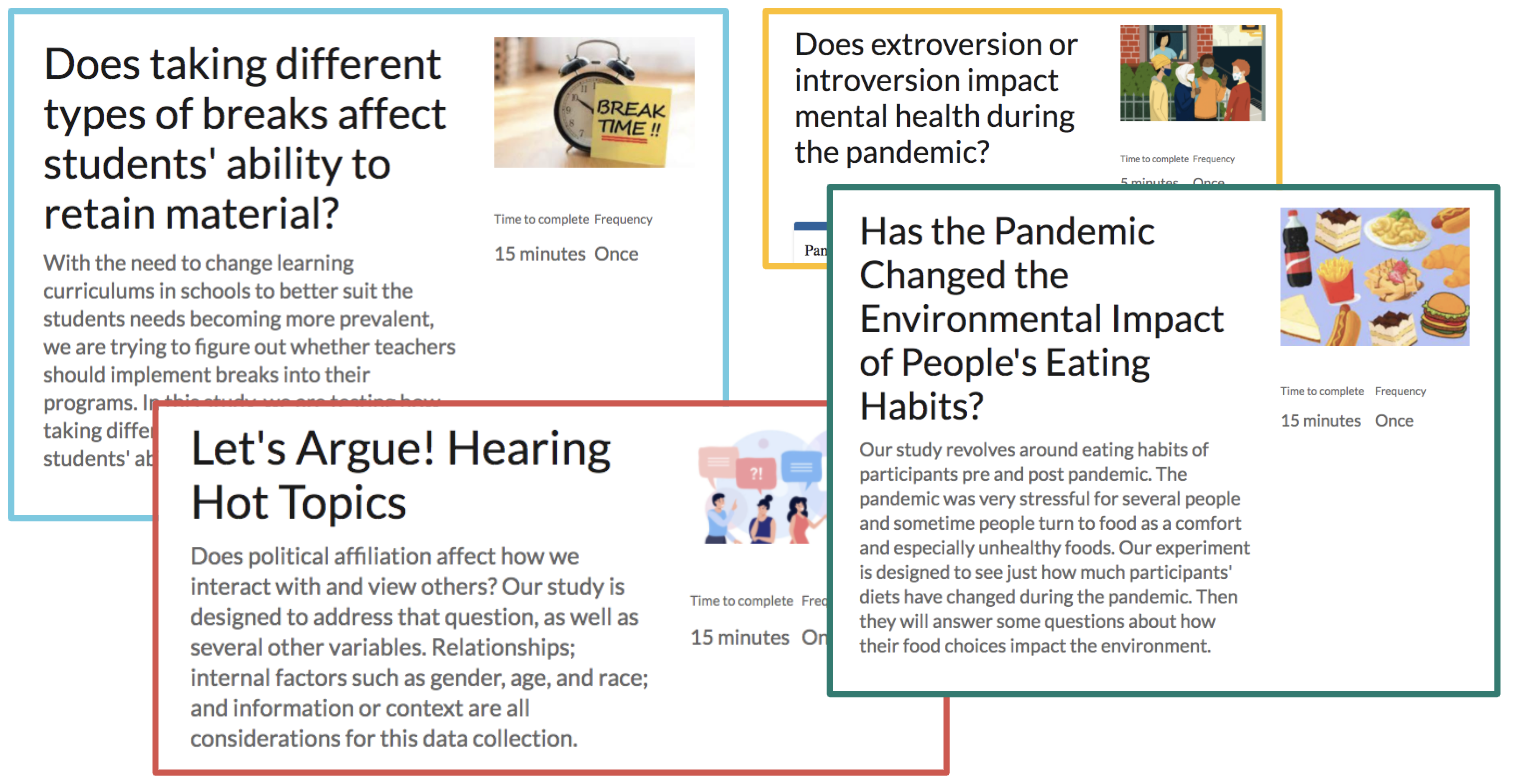

In the 2020–2021 school year, MindHive was implemented by six teachers at five different schools across the United States, reaching approximately 240 students. Students participated in the Pandemic Citizen Science Study (see previous section) in addition to scientist-initiated studies on the topics of risk taking, social influence, and mindfulness. As in the Spring of 2020, students then designed their own studies, either in groups or individually (this varied by implementation). Unlike the Spring 2020 implementation, not all student studies were focused on the pandemic, but students still gravitated toward personally and socially relevant topics such as learning, mental health, climate change, and political polarization (see examples below). Students and teachers were supported in their study design by a team of neuroscientists and psychologists from different research institutes and at different career levels (ranging from recent BA graduates to tenured faculty). Additionally, each teacher was matched with another teacher to create a “class network” to allow students to review and participate in studies developed by other students from other classrooms.

What students are learning

Across implementations, students report an increased appreciation of and fascination with science after participating in MindHive. For example, one student remarked that the experience was valuable for helping them “to think critically, which is really important throughout science and life as a whole… just being able to again delve beneath the surface of a certain question…. and then also just seeing how asking a question can develop into this huge research study.” Importantly, students indicate that they learned to better appreciate the collaborative nature of science and the value of different perspectives in generating both ideas and conclusions. Students further demonstrated that they acquired skills related to the process and challenges of creating a scientific study and developed concrete strategies to improve their own studies and research proposals. When asked in a survey what they learned from developing a proposal on the MindHive platform, one student responded: “I learned that you need to be very thorough, in your instructions as well as your explanations of the experiment and the science behind the experiment. I also learned that it is very valuable to have your peers review your work because looking at the proposal from a fresh pair of eyes will show you which parts you need to work on.” These and other findings are reported in more detail in Matuk et al. (2021).

Examples of studies designed by students

In Supplemental Resources, we have included four examples of studies created by MindHive students in the 2020–2021 school year (MindHive Example Studies 1, 2, 3, and 4). Click on the links in each PDF to explore each study.

You can find more studies at the MindHive Discover Page.

Challenges

Many students reported gaining a deeper understanding of the thought and time it takes to design and implement a research study. While this learning outcome is beneficial as it indicates a comprehension of research processes in the real world, it also emphasizes a larger challenge present in designing curriculum and tools to support authentic scientific inquiry for students. Each aspect of the research process, from writing a proposal to engaging in peer review, requires both time and support that can be difficult to accommodate in a classroom setting. As MindHive continues to develop, it is increasingly important to focus on the ways that different parts of the research process (proposal development, data analysis, peer review, etc.) can be modularized, combined, and meaningfully integrated into different aspects of a curriculum so that the curriculum and design process is manageable within the constraints of a classroom for both students and teachers. Additionally, the time constraints of a classroom setting mean that sometimes students do not get the chance to analyze and report on data collected through the project they designed. While our goal is for students to value the process of study design over the end results, we have learned that it is important for students’ self-efficacy to give them a sense of closure, which comes from following through every stage of the research process.

Another challenge for MindHive relates to community building, scaling, and sustainability. Overall, the flexibility and accessibility of MindHive’s online platform and resources offer the potential for students, scientists, and communities to work together and engage in scientific inquiry across a variety of contexts. However, more work needs to be done to discover how we can best foster a community of scientists and participants beyond individual classroom implementations, and continue to support meaningful partnerships between students and scientists beyond the project’s funding.

Conclusion

MindHive is an online citizen science initiative that can be used both inside and beyond the STEM classroom to help learners and community members engage in authentic human brain and behavior science inquiry. It offers flexible tools that help bridge the gap between in- and out-of-school STEM learning (e.g., by facilitating scientist-student-community partnerships). All studies and platform activities are paired with content where personally and socially relevant issues—such as the COVID-19 pandemic and climate change—are used as anchor phenomena. These serve not only to support human brain and behavior science learning (e.g., risk taking, memory, social behavior) but also to illustrate issues related to the “making of science,” such as research ethics, the difficult balance between rapid and rigorous scientific discovery, and the cultural shift in the scientific community toward open science practices.

Open science, among other goals, includes improving the public-scientist relationship by improving transparency and science communication. In line with these goals, MindHive adheres to a participatory science learning approach and emphasizes student-scientist-community partnerships in human brain and behavior science inquiry: The platform and program are a co-design effort by and for teachers and students, and by and for community representatives. As such, MindHive sets itself apart from other neuroscience and psychology STEM learning experiences by supporting learners and community members to make sense of and be active stakeholders in human brain and behavior science as it relates to their everyday lives.

More information can be found at the MindHive information page for educators.

Acknowledgments

MindHive is made possible by the teachers and students who have made the project come to life, Sushmita Sadhukha, Engin Bumbacher, Veena Vasudevan, Joey Perricone, Felicia Zerwas, Mike Lenihan, The Brownsville Sentiment Working Group, Robb Rutledge, Sarah Lynn, Henry Valk, Laura Landry, Omri Raccah, Adree Songco Aguas, Benjamin Silver, Shane McKeon, Kim Doell, Sarah Myruski, Itamar Grunfeld, and Alex Han. MindHive is supported by NSF DRK-12 Award #1908482.

Suzanne Dikker (suzanne.dikker@nyu.edu) is an Associate Research Scientist at New York University in New York City. Yury Shevchenko is a postdoctoral fellow at University of Konstanz in Konstanz, Baden-Württemberg, Germany. Kim Burgas is an independent researcher in New York City. Kim Chaloner is a Dean of Faculty and Science Teacher at Grace Church School in New York City. Marc Sole is an 11th-grade Science PBAT teacher at East Side Community High School in New York City and a member of the New York Performance Standards Consortium. Lucy Yetman-Michaelson is a Junior Laboratory Associate at New York University in New York City. Ido Davidesco is an Assistant Professor at University of Connecticut Neag School of Education in Storrs, Connecticut. Rebecca Martin is a postdoctoral fellow at New York University in New York City. Camillia Matuk is an Assistant Professor at the Educational Communication and Technology program, Department of Administration, Leadership and Technology, Steinhardt School of Culture, Education and Human Development at New York University.

citation: Dikker, S., Y. Shevchenko, K. Burgas, K. Chaloner, M. Sole, L. Yetman-Michaelson, I. Davidesco, R. Martin, and C. Matuk. 2022. MindHive: An online citizen science tool and curriculum for human brain and behavior research. Connected Science Learning 4 (2). https://www.nsta.org/connected-science-learning/connected-science-learning-march-april-2022/mindhive-online-citizen
